From the Seven Hills of Rome to the Seven Sages of China’s Bamboo Grove, from the Seven Wonders of the Ancient World to the Seven Heavens of Islam, from the Seven Final Sayings of Jesus to Snow White and the Seven Dwarfs, the number seven has always struck us as a special one. Hironobu Sakaguchi and his crew at Square, the people behind the Final Fantasy series, were no exception. In the mid-1990s, when the time came to think about what the seventh entry in the series ought to be, they instinctively felt that this one had to be bigger and better than any that had come before. It had to double down on all of the series’s traditional strengths and tropes to become the ultimate Final Fantasy. Sakaguchi and company would achieve these goals; the seventh Final Fantasy game has remained to this day the best-selling, most iconic of them all. But the road to that seventh heaven was not an entirely smooth one.

The mid-1990s were a transformative period, both for Square as a studio and for the industry of which it was a part. For the former, it was “a perfect storm, when Square still acted like a small company but had the resources of a big one,” as Matt Leone of Polygon writes. Meanwhile the videogames industry at large was feeling the ground shift under its feet, as the technologies that went into making and playing console-based games were undergoing their most dramatic shift since the Atari VCS had first turned the idea of a machine for playing games on the family television into a popular reality. CD-ROM drives were already available for Sega’s consoles, with a storage capacity two orders of magnitude greater than that of the most capacious cartridges. And 3D graphics hardware was on the horizon as well, promising to replace pixel graphics with embodied, immersive experiences in sprawling virtual worlds. Final Fantasy VII charged headlong into these changes like a starving man at a feast, sending great greasy globs of excitement — and also controversy — flying everywhere.

The controversy came in the form of one of the most shocking platform switches in the history of videogames. To fully appreciate the impact of Square’s announcement on January 12, 1996, that Final Fantasy VII would run on the new Sony PlayStation rather than Nintendo’s next-generation console, we need to look a little closer at the state of the console landscape in the years immediately preceding it.

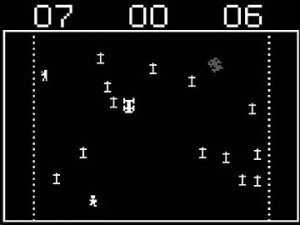

Through the first half of the 1990s, Nintendo was still the king of console gaming, but it was no longer the unchallenged supreme despot it had been during the 1980s. Nintendo had always been conservative in terms of hardware, placing its faith, like Apple Computer in an adjacent marketplace, in a holistic customer experience rather than raw performance statistics. As part and parcel of this approach, every game that Nintendo agreed to allow into its walled garden was tuned and polished to a fine sheen, having any jagged edges that might cause anyone any sort of offense whatsoever painstakingly sanded away. An upstart known as Sega had learned to live in the gaps this business philosophy opened up, deploying edgier games on more cutting-edge hardware. As early as December of 1991, Sega began offering its Japanese customers a CD-drive add-on for its current console, the Mega Drive (known as the Sega Genesis in North America, which received the CD add-on the following October). Although the three-year-old Mega Drive’s intrinsic limitations made this early experiment in multimedia gaming for the living room a somewhat underwhelming affair — there was only so much you could do with 61 colors at a resolution of 320 X 240 — it perfectly illustrated the differences in the two companies’ approaches. While Sega threw whatever it had to hand at the wall just to see what stuck, Nintendo held back like a Dana Carvey impression of George Herbert Walker Bush: “Wouldn’t be prudent at this juncture…”

Sony was all too well-acquainted with Nintendo’s innate caution. As the co-creator of the CD storage format, it had signed an agreement with Nintendo back in 1988 to make a CD drive for the upcoming Super Famicom console (which was to be known as the Super Nintendo Entertainment System in the West) as soon as the technology had matured enough for it to be cost-effective. By the time the Super Famicom was released in 1990, Sony was hard at work on the project. But on May 29, 1991, just three days before a joint Nintendo/Sony “Play Station” was to have been demonstrated to the world at the Summer Consumer Electronics Show in Chicago, Nintendo suddenly backed out of the deal, announcing that it would instead be working on CD-ROM technology with the Dutch electronics giant Philips — ironically, Sony’s partner in the creation of the original CD standard.

Nintendo’s reason for pulling out seems to have come down to the terms of the planned business relationship. Nintendo, whose instinct for micro-management and tough deal-making was legendary, had uncharacteristically promised Sony a veritable free hand, allowing it to publish whatever CD-based software it wanted without asking Nintendo’s permission or paying it any royalty whatsoever. In fact, given that a contract to that effect had already been signed long before the Consumer Electronics Show, Sony was, legally speaking, still free to continue with the Play Station on its own, piggybacking on the success of Nintendo’s console. And initially it seemed inclined to do just that. “Sony will throw open its doors to software makers to produce software using music and movie assets,” it announced at the show, promising games based on its wide range of media properties, from the music catalog of Michael Jackson to the upcoming blockbuster movie Hook. Even worse from Nintendo’s perspective, “in order to promote the Super Disc format, Sony intends to broadly license it to the software industry.” Nintendo’s walled garden, in other words, looked about to be trampled by a horde of unwashed, unvetted, unmonetized intruders charging through the gate Sony was ready and willing to open to them. The prospect must have sent the control freaks inside Nintendo’s executive wing into conniptions.

It was a strange situation any way you looked at it. The Super Famicom might soon become the host of not one but two competing CD-ROM solutions, an authorized one from Philips and an unauthorized one from Sony, each using different file formats for a different library of games and other software. (Want to play Super Mario on CD? Buy the Philips drive! Want Michael Jackson? Buy the Play Station!)

In the end, though, neither of the two came to be. Philips decided it wasn’t worth distracting consumers from its own stand-alone CD-based “multimedia box” for the home, the CD-i. (Philips wasn’t, however, above exploiting the letter of its contract with Nintendo to make a Mario game and three substandard Legend of Zelda games available for the CD-i.) Sony likewise began to wonder in the aftermath of its defiant trade-show announcement whether it was really in its long-term interest to become an unwanted squatter on Nintendo’s real estate.

Still, the episode had given some at Sony a serious case of videogame jealousy. It was clear by now that this new industry wasn’t a fad. Why shouldn’t Sony be a part of it, just as it was an integral part of the music, movie, and television industries? On June 24, 1992, the company held an unusually long and heated senior-management debate. After much back and forth, CEO Norio Ohga pronounced his conclusion: Sony would turn the Play Station into the PlayStation, a standalone CD-based videogame console of its own, both a weapon with which to bludgeon Nintendo for its breach of trust and, ultimately more importantly, an entrée to the fastest-growing entertainment sector in the world.

The project was handed to one Ken Kutaragi, who had also been in charge of the aborted Super Famicom CD add-on. He knew precisely what he wanted Sony’s first games console to be: a fusion of CD-ROM with another cutting-edge technology, hardware-enabled 3D graphics. “From the mid-1980s, I dreamed of the day when 3D computer graphics could be enjoyed at home,” he says. “What kind of graphics could we create if we combined a real-time, 3D computer-graphics engine with CD-ROM? Surely this would develop into a new form of entertainment.”

It took him and his engineers a little over two years to complete the PlayStation, which in addition to a CD drive and a 3D-graphics system sported a 32-bit MIPS microprocessor running at 34 MHz, 3 MB of memory (of which 1 MB was dedicated to graphics alone), audiophile-quality sound hardware, and a slot for 128 K memory cards that could be used for saving game state between sessions, ensuring that long-form games like JRPGs would no longer need to rely on tedious manual-entry codes or balky, unreliable cartridge-mounted battery packs for the purpose.

In contrast to the consoles of Nintendo, which seemed almost self-consciously crafted to look like toys, and those of Sega, which had a boy-racer quality about them, the Sony PlayStation looked stylish and adult — but not too adult. (The stylishness came through despite the occasionally mooted comparisons to a toilet.)

The first Sony PlayStations went on sale in Tokyo’s famed Akihabara electronics district on December 3, 1994. Thousands camped out in line in front of the shops the night before. “It’s so utterly different from traditional game machines that I didn’t even think about the price,” said one starry-eyed young man to a reporter on the scene. Most of the shops were sold out before noon. Norio Ohga was mobbed by family and friends in the days that followed, all begging him to secure them a PlayStation for their children before Christmas. It was only when that happened, he would later say, that he fully realized what a game changer (pun intended) his company had on its hands. Just like that, the fight between Nintendo and Sega — the latter had a new 32-bit CD-based console of its own, the Saturn, while the former was taking it slowly and cautiously, as usual — became a three-way battle royal.

The PlayStation was an impressive piece of kit for the price, but it was, as always, the games themselves that really sold it. Ken Kutaragi had made the rounds of Japanese and foreign studios, and found to his gratification that many of them were tired of being under the heavy thumb of Nintendo. Sony’s garden was to be walled just like Nintendo’s — you had to pay it a fee to sell games for its console as well — but it made a point of treating those who made games for its system as valued partners rather than pestering supplicants: the financial terms were better, the hardware was better, the development tools were better, the technical support was better, the overall vibe was better. Nintendo had its own home-grown line of games for its consoles to which it always gave priority in every sense of the word, a conflict of interest from which Sony was blessedly free. (Sony did purchase the venerable British game developer and publisher Psygnosis well before its console’s launch to help prime the pump with some quality games, but it largely left it to manage its own affairs on the other side of the world.) Game cartridges were complicated and expensive to produce, and the factories that made them for Nintendo’s consoles were all controlled by that company. Nintendo was notoriously slow to approve new production runs of any but its own games, leaving many studios convinced that their smashing success had been throttled down to a mere qualified one by a shortage of actual games in stores at the critical instant. CDs, on the other hand, were quick and cheap to churn out from any of dozens of pressing plants all over the world. Citing advantages like these, Kutaragi found it was possible to tempt even as longstanding a Nintendo partner as Namco — the creator of the hallowed arcade classics Galaxian and Pac-Man — into committing itself “100 percent to the PlayStation.” The first fruit of this defection was Ridge Racer, a port of a stand-up arcade game that became the new console’s breakout early hit.

Square was also among the software houses that Ken Kutaragi approached, but he made no initial inroads there. For all the annoyances of dealing with Nintendo, it still owned the biggest player base in the world, one that had treated Final Fantasy very well indeed, to the tune of more than 9 million games sold to date in Japan alone. This was not a partner that one abandoned lightly — especially not with the Nintendo 64, said partner’s own next-generation console, due at last in 1996. It promised to be every bit as audiovisually capable as the Sony PlayStation or Sega Saturn, even as it was based around a 64-bit processor in place of the 32-bit units of the competition.

Indeed, in many ways the relationship between Nintendo and Square seemed closer than ever in the wake of the PlayStation’s launch. When Yoshihiro Maruyama joined Square in September of 1995 to run its North American operations, he was told that “Square will always be with Nintendo. As long as you work for us, it’s basically the same as working for Nintendo.” Which in a sense he literally was, given that Nintendo by now owned a substantial chunk of Square’s stock. In November of 1995, Nintendo’s president Hiroshi Yamauchi cited the Final Fantasy series as one of his consoles’ unsurpassed crown jewels — eat your heart out, Sony! — at Shoshinkai, Nintendo’s annual press shindig and trade show. As its farewell to the Super Famicom, Square had agreed to make Super Mario RPG: Legend of the Seven Stars, dropping Nintendo’s Italian plumber into a style of game completely different from his usual fare. Released in March of 1996, it was a predictably huge hit in Japan, while also, encouragingly, leveraging the little guy’s Stateside popularity to become the most successful JRPG since Final Fantasy I in those harsh foreign climes.

But Super Mario RPG wound up marking the end of an era in more ways than Nintendo had imagined: it was not just Square’s last Super Famicom RPG but its last major RPG for a Nintendo console, full stop. For just as it was in its last stages of development, there came the earthshaking announcement of January 12, 1996, that Final Fantasy was switching platforms to the PlayStation. Et tu, Square? “I was kind of shocked,” Yoshihiro Maruyama admits. As was everyone else.

The Nintendo 64, which looked like a toy — and an anachronistic one at that — next to the PlayStation.

Square’s decision was prompted by what seemed to have become an almost reactionary intransigence on the part of Nintendo when it came to the subject of CD-ROM. After the two abortive attempts to bring CDs to the Super Famicom, everyone had assumed as a matter of course that they would be the storage medium of the Nintendo 64. It was thus nothing short of baffling when the first prototypes of the console were unveiled in November of 1995 with no CD drive built in and not even any option on the horizon for adding one. Nintendo’s latest and greatest was instead to live or die with old-school cartridges, which had a capacity of just 64 MB, one-tenth that of a CD.

Why did Nintendo make such a counterintuitive choice? The one compelling technical argument for sticking with cartridges was the loading time of CDs, a mechanical storage medium rather than a solid-state one. Nintendo’s ethos of user-friendly accessibility had always insisted that a game come up instantly when you turned the console on and play without interruption thereafter. Nintendo believed, with considerable justification, that this quality had been the not-so-secret weapon in its first-generation console’s victorious battle against floppy-disk-based 8-bit American microcomputers that otherwise boasted similar audiovisual and processing capabilities, such as the Commodore 64. The PlayStation CD drive, which could transfer 300 K per second into memory, was many, many times faster than the Commodore 64’s infamously slow disk drive, but it wasn’t instant. A cartridge, on the other hand, for all practical purposes was.
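To get a feel for what "not instant" meant in practice, here is a rough estimate built on the 300 K-per-second transfer rate quoted above. The payload sizes are illustrative assumptions rather than measurements, and the sketch ignores seek time and decompression, both of which would shift the real-world figures.

```python
# Rough load-time estimate from the transfer rate quoted in the article.
# Assumptions: sustained sequential reads, no seek time, no compression.

CD_RATE_KB_PER_S = 300  # PlayStation CD drive throughput cited above


def load_seconds(payload_kb: float, rate_kb_per_s: float = CD_RATE_KB_PER_S) -> float:
    """Return the seconds needed to stream payload_kb at the given rate."""
    if rate_kb_per_s <= 0:
        raise ValueError("rate must be positive")
    return payload_kb / rate_kb_per_s


if __name__ == "__main__":
    # Hypothetical payloads, chosen only for illustration.
    for label, kb in [("1 MB", 1024), ("2 MB", 2048)]:
        print(f"{label}: {load_seconds(kb):.1f} s")
```

Under these assumptions, pulling a couple of megabytes off the disc takes several seconds, a wait that a cartridge would not impose.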

Fair enough, as far as it went. Yet there were other, darker insinuations swirling around the games industry which had their own ring of truth. Nintendo, it was said, was loath to give up its stranglehold on the means of production of cartridges and embrace commodity CD-stamping facilities. Most of all, many sensed, the decision to stay with cartridges was bound up with Nintendo’s congenital need to be different, and to assert its idiosyncratic hegemony by making everyone else dance to its tune while it was at it. The question now was whether it had taken this arrogance too far, and was about to dance itself into irrelevance while the makers of third-party games moved on to other, equally viable platforms.

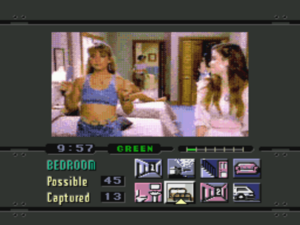

Exhibit Number One of same was the PlayStation, which seemed tailor-made for the kind of big, epic game that every Final Fantasy to date had strained to be. It was far easier to churn out huge quantities of 3D graphics than it was hand-drawn pixel art, while the staggering storage capacity of CD-ROM gave Square someplace to keep it all — with, it should not be forgotten, the possibility of finding even more space by the simple expedient of shipping a game on multiple CDs, another affordance that cartridges did not allow. And then there were those handy little memory cards for saving state. Those benefits were surely worth trading a little bit of loading time for.

But there was something else about the PlayStation as well that made it an ideal match for Hironobu Sakaguchi’s vision of gaming. Especially after the console arrived in North America and Europe in September of 1995, it fomented a sweeping change in the way the gaming hobby was perceived. “The legacy of the original PlayStation is that it took gaming from a pastime that was for young people or maybe slightly geeky people,” says longtime Sony executive Jim Ryan, “and it turned it into a highly credible form of mass entertainment, really comparable with the music business and the movie business.” Veteran game designer Cliff Bleszinski concurs: “The PlayStation shifted the console from having an almost toy-like quality into consumer electronics that are just as desired by twelve-year-olds as they are by 35-year-olds.”

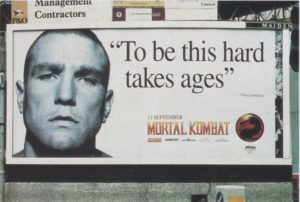

Rather than duking it out with Nintendo and Sega for the eight-to-seventeen age demographic, Sony shifted its marketing attention to young adults, positioning PlayStation gaming as something to be done before or after a night out at the clubs — or while actually at the clubs, for that matter: Sony paid to install the console in trendy nightspots all over the world, so that their patrons could enjoy a round or two of WipEout between trips to the dance floor. In effect, Sony told the people who had grown up with Nintendo and Sega that it was okay to keep on gaming, as long as they did it on a PlayStation from now on. Sony’s marketers understood that, if they could conquer this demographic, that success would automatically spill down into the high-school set that had previously been Sega’s bread and butter, since kids of that age are always aspiring to do whatever the university set is up to. Their logic was impeccable; the Sony PlayStation would destroy the Sega Saturn in due course.

For decades now, the hipster stoner gamer, slumped on the couch with controller in one hand and a bong in the other, has been a pop-culture staple. Sony created that stereotype in the space of a year or two in the 1990s. Whatever else you can say about it, it plays better with the masses than the older one of a pencil-necked nerd sitting bolt upright on his neatly made bed. David James, star goalkeeper for the Premier League football team Liverpool F.C., admitted that he had gotten “carried away” playing PlayStation the night before by way of explaining the three goals that he conceded in a match against Newcastle. It was hard to imagine substituting “Nintendo” or “Saturn” for “PlayStation” in that statement. In May of 1998, Sony would be able to announce triumphantly that, according to its latest survey, the average age of a PlayStation gamer was a positively grizzled 22. It had hit the demographic it was aiming for spot-on, with a spillover that reached both younger and older folks. David Ranyard, a member of Generation PlayStation who has had a varied and successful career in games since the millennium:

At the time of its launch, I was a student, and I’d always been into videogames, from the early days of arcades. I would hang around playing Space Invaders and Galaxian, and until the PlayStation came out, that kind of thing made me a geek. But this console changed all that. Suddenly videogames were cool — not just acceptable, but actually club-culture cool. With a soundtrack from the coolest techno and dance DJs, videogames became a part of [that] subculture. And it led to more mainstream acceptance of consoles in general.

The new PlayStation gamer stereotype dovetailed beautifully with the moody, angsty heroes that had been featuring prominently in Final Fantasy for quite some installments by now. Small wonder that Sakaguchi was more and more smitten with Sony.

Still, it was one hell of a bridge to burn; everyone at Square knew that there would be no going back if they signed on with Sony. Well aware of how high the stakes were for all parties, Sony declared its willingness to accept an extremely low per-unit royalty and to foot the bill for a lot of the next Final Fantasy game’s marketing, promising to work like the dickens to break it in the West. In the end, Sakaguchi allowed himself to be convinced. He had long run Final Fantasy as his own fiefdom at Square, and this didn’t change now: upper management rubber-stamped his decision to make Final Fantasy VII for the Sony PlayStation.

The announcement struck Japan’s games industry with all the force of one of Sakaguchi’s trademark Final Fantasy plot twists. For all the waves Sony had been making recently, nobody had seen this one coming. For its part, Nintendo had watched quite a number of studios defect to Sony already, but this one clearly hurt more than any of the others. It sold off all of its shares in Square and refused to take its calls for the next five years.

The raised stakes only gave Sakaguchi that much more motivation to make Final Fantasy VII amazing — so amazing that even the most stalwart Nintendo loyalists among the gaming population would be tempted to jump ship to the PlayStation in order to experience it. There had already been an unusually long delay after Final Fantasy VI, during which Square had made Super Mario RPG and another, earlier high-profile JRPG called Chrono Trigger, the fruit of a partnership between Hironobu Sakaguchi and Yuji Horii of Dragon Quest fame. (This was roughly equivalent in the context of 1990s Western pop culture to Oasis and Blur making an album together.) Now the rush was on to get Final Fantasy VII out the door within a year, while the franchise and its new platform the PlayStation were still smoking hot.

In defiance of the wisdom found in The Mythical Man-Month, Sakaguchi decided to both make the game quickly and make it amazing by throwing lots and lots of personnel at the problem: 150 people in all, three times as many as had worked on Final Fantasy VI. Cost was no object, especially wherever yen could be traded for time. Square spent the equivalent of $40 million on Final Fantasy VII in the course of just one year, blowing up all preconceptions of how much it could cost to make a computer or console game. (The most expensive earlier game that I’m aware of is the 1996 American “interactive movie” Wing Commander IV, which its developer Origin Systems claimed to have cost $12 million.) By one Square executive’s estimate, almost half of Final Fantasy VII’s budget went for the hundreds of high-end Silicon Graphics workstations that were purchased, tools for the unprecedented number of 3D artists and animators who attacked the game from all directions at once. Their output came to fill not just one PlayStation CD but three of them — almost two gigabytes of raw data in all, or 30 Nintendo 64 cartridges.
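The storage comparison above can be checked with a little arithmetic. The sketch below uses only figures quoted in this article (a 64 MB cartridge, and a CD holding roughly ten times that); the constant and function names are illustrative, and real disc capacities varied somewhat from this round number.

```python
# Back-of-the-envelope check of the storage figures quoted in the article.
# Assumption: a CD holds ten times a 64 MB cartridge, as the text states;
# actual disc capacities differed a little from this round figure.

CARTRIDGE_MB = 64              # Nintendo 64 cartridge capacity cited above
CD_MB = CARTRIDGE_MB * 10      # "one-tenth that of a CD" => about 640 MB


def cartridges_needed(num_cds: int) -> float:
    """Return how many cartridges would hold the data of num_cds full CDs."""
    return num_cds * CD_MB / CARTRIDGE_MB


def total_gigabytes(num_cds: int) -> float:
    """Return the combined capacity of num_cds CDs in GB (1 GB = 1024 MB)."""
    return num_cds * CD_MB / 1024


if __name__ == "__main__":
    # The three-disc Final Fantasy VII release.
    print(cartridges_needed(3))
    print(total_gigabytes(3))
```

With these round numbers, three discs work out to 30 cartridges and a little under 1.9 GB, which matches the "almost two gigabytes" in the text.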

Somehow or other, it all came together. Square finished Final Fantasy VII on schedule, shipping it in Japan on January 31, 1997. It went on to sell over 3 million copies there, bettering Final Fantasy VI’s numbers by about half a million and selling a goodly number of PlayStations in the process. But, as that fairly modest increase indicates, the Japanese domestic market was becoming saturated; there were only so many games you could sell in a country of 125 million people, most of them too old or too young or lacking the means or the willingness to acquire a PlayStation. There was only one condition in which it had ever made sense to spend $40 million on Final Fantasy VII: if it could finally break the Western market wide open. Encouraged by the relative success of Final Fantasy VI and Super Mario RPG in the United States, excited by the aura of hipster cool that clung to the PlayStation, Square — and also Sony, which lived up to its promise to go all-in on the game — were determined to make that happen, once again at almost any cost. After renumbering the earlier games in the series in the United States to conform with its habit of only releasing every other Final Fantasy title there, Square elected to call this game Final Fantasy VII all over the world. For the number seven was an auspicious one, and this was nothing if not an auspicious game.

Final Fantasy VII shipped on a suitably auspicious date in the United States: September 7, 1997. It sold its millionth unit that December.

In November of 1997, it came to Europe, which had never seen any of the previous six mainline Final Fantasy games before and therefore processed the title as even more of a non sequitur. No matter. Wherever the game went, the title and the marketing worked — worked not only for the game itself, but for the PlayStation. Coming hot on the heels of the hip mega-hit Tomb Raider, it sealed the deal for the console, relegating the Sega Saturn to oblivion and the Nintendo 64 to the status of a disappointing also-ran. Paul Davies was the editor-in-chief of Britain’s Computer and Video Games magazine at the time. He was a committed Sega loyalist, he says, but

I came to my senses when Square announced Final Fantasy VII as a PlayStation exclusive. We received sheets of concept artwork and screenshots at our editorial office, sketches and stills from the incredible cut scenes. I was smitten. I tried and failed to rally. This was a runaway train. [The] PlayStation took up residence in all walks of life, moved from bedrooms to front rooms. It gained — by hook or by crook — the kind of social standing that I’d always wanted for games. Sony stomped on my soul and broke my heart, but my God, that console was a phenomenon.

Final Fantasy VII wound up selling well over 10 million units in all, as many as all six previous entries in the series combined, divided this time almost equally between Japan, North America, and Europe. Along the way, it exploded millions of people’s notions of what games could do and be — people who weren’t among the technological elite who invested thousands of dollars into high-end rigs to play the latest computer games, who just wanted to sit down in front of their televisions after a busy day with a plug-it-in-and-go console and be entertained.

Of course, not everyone who bought the game was equally enamored. Retailers reported record numbers of returns to go along with the record sales, as some people found all the walking around and reading to be not at all what they were looking for in a videogame.

In a way, I share their pain. Despite all its exceptional qualities, Final Fantasy VII fell victim rather comprehensively to the standard Achilles heel of the JRPG in the West: the problem of translation. Its English version was completed in just a couple of months at Square’s American branch, reportedly by a single employee working without supervision, then sent out into the world without a second glance. I’m afraid there’s no way to say this kindly: it’s almost unbelievably terrible, full of sentences that literally make no sense punctuated by annoying ellipses that are supposed to represent… I don’t know what. Pauses… for… dramatic… effect, perhaps? To say it’s on the level of a fan translation would be to insult the many fans of Japanese videogames in the West, who more often than not do an extraordinary job when they tackle such a project. That a game so self-consciously pitched as the moment when console-based videogames would come into their own as a storytelling medium and as a form of mass-market entertainment to rival movies could have been allowed out the door with writing like this boggles the mind. It speaks to what a crossroads moment this truly was for games, when the old ways were still in the process of going over to the new. Although the novelty of the rest of the game was enough to keep the poor translation from damaging its commercial prospects overmuch, the backlash did serve as a much-needed wake-up call for Square. Going forward, they would take the details of “localization,” as such matters are called in industry speak, much more seriously.

Writerly sort that I am, I’ll be unable to keep myself from harping further on the putrid translation in the third and final article in this series, when I’ll dive into the game itself. Right now, though, I’d like to return to the subject of what Final Fantasy VII meant for gaming writ large. In case I haven’t made it clear already, let me state it outright now: its arrival and reception in the West in particular marked one of the watershed moments in the entire history of gaming.

It cemented, first of all, the PlayStation’s status as the overwhelming victor in the late-1990s edition of the eternal Console Wars, as it did the PlayStation’s claim to being the third socially revolutionary games console in history, after the Atari VCS and the original Nintendo Famicom. In the process of changing forevermore the way the world viewed videogames and the people who played them, the PlayStation eventually sold more than 100 million units, making it the best-selling games console of the twentieth century, dwarfing the numbers of the Sega Saturn (9 million units) and even the Nintendo 64 (33 million units), the latter of which was relegated to the status of the “kiddie console” on the playgrounds of the world. The underperformance of the Saturn followed by that of its successor the Dreamcast (again, just 9 million units sold) led Sega to abandon the console-hardware business entirely. Even more importantly, the PlayStation shattered the aura of remorseless, monopolistic inevitability that had clung to Nintendo since the mid-1980s; Nintendo would be for long stretches of the decades to come an also-ran in the very industry it had almost single-handedly resurrected. If the PlayStation was conceived partially as revenge for Nintendo’s jilting of Sony back in 1991, it was certainly a dish served cold — in fact, one that Nintendo is to some extent still eating to this day.

Then, too, it almost goes without saying that the JRPG, a sub-genre that had hitherto been a niche occupation of American gamers and virtually unknown to European ones, had its profile raised incalculably by Final Fantasy VII. The JRPG became almost overnight one of the hottest of all styles of game, as millions who had never imagined that a game could offer a compelling long-form narrative experience like this started looking for more of the same to play just as soon as its closing credits had rolled. Suddenly Western gamers were awaiting the latest JRPG releases with just as much impatience as Japanese gamers — releases not only in the Final Fantasy series but in many, many others as well. Their names, which tended to sound strange and awkward to English ears, were nevertheless unspeakably alluring to those who had caught the JRPG fever: Xenogears, Parasite Eve, Suikoden, Lunar, Star Ocean, Thousand Arms, Chrono Cross, Valkyrie Profile, Legend of Mana, Saiyuki. The whole landscape of console gaming changed; nowhere in the West in 1996, these games were everywhere in 1998 and 1999. It required a dedicated PlayStation gamer indeed just to keep up with the glut. At the risk of belaboring a point, I must note here that there were relatively few such games on the Nintendo 64, due to the limited storage capacity of its cartridges. Gamers go where the games they want to play are, and, for gamers in their preteens or older at least, those games were on the PlayStation.

From the computer-centric perspective that is this site’s usual stock in trade, perhaps the most important outcome of Final Fantasy VII was the dawning convergence it heralded between what had prior to this point been two separate worlds of gaming. Shortly before its Western release on the PlayStation, Square’s American subsidiary had asked the parent company for permission to port Final Fantasy VII to Windows-based desktop computers, perchance under the logic that, if American console gamers did still turn out to be nonplussed by the idea of a hundred-hour videogame despite marketing’s best efforts, American computer gamers would surely not be.

Square Japan agreed, but that was only the beginning of the challenge of getting Final Fantasy VII onto computer-software shelves. Square’s American arm called dozens of established computer publishers, including the heavy hitters like Electronic Arts. Rather incredibly, they couldn’t drum up any interest whatsoever in a game that was by now selling millions of copies on the most popular console in the world. At long last, they got a bite from the British developer and publisher Eidos, whose Tomb Raider had been 1996’s PlayStation game of the year whilst also — and unusually for the time — selling in big numbers on computers.

That example of cross-platform convergence notwithstanding, everyone involved remained a bit tentative about the Final Fantasy VII Windows port, regarding it more as a cautious experiment than the blockbuster-in-the-offing that the PlayStation version had always been treated as. Judged purely as a piece of Windows software, the end result left something to be desired, being faithful to the console game to a fault, to the extent of couching its saved states in separate fifteen-slot “files” that stood in for PlayStation memory cards.

The Windows version of Final Fantasy VII came out a year after the PlayStation version. “If you’re open to new experiences and perspectives in role-playing and can put up with idiosyncrasies from console-game design, then take a chance and experience some of the best storytelling ever found in an RPG,” concluded Computer Gaming World in its review, stamping the game “recommended, with caution.” Despite that less than rousing endorsement, it did reasonably well, selling somewhere between 500,000 and 1 million units by most reports.

They were baby steps to be sure, but Tomb Raider and Final Fantasy VII between them marked the start of a significant shift, albeit one that would take another half-decade or so to become obvious to everyone. The storage capacity of console CDs, the power of the latest console hardware, and the consoles’ newfound ability to easily save state from session to session had begun to elide if not yet erase the traditional barriers between “computer games” and “videogames.” Today the distinction is all but eliminated, as cross-platform development tools and the addition of networking capabilities to the consoles make it possible for everyone to play the same sorts of games at least, if not always precisely the same titles. This has been, it seems to me, greatly to the benefit of gaming in general: games on computers have become more friendly and approachable, even as games on consoles have become deeper and more ambitious.

So, that’s another of the trends we’ll need to keep an eye out for as we continue our journey down through the years. Next, though, it will be time to ask a more immediately relevant question: what is it like to actually play Final Fantasy VII, the game that changed so much for so many?

Sources: the books Pure Invention: How Japan Made the Modern World by Matt Alt, Power-Up: How Japanese Video Games Gave the World an Extra Life by Chris Kohler, Fight, Magic, Items: The History of Final Fantasy, Dragon Quest, and the Rise of Japanese RPGs in the West by Aidan Moher, Atari to Zelda: Japan’s Videogames in Global Contexts by Mia Consalvo, Revolutionaries at Sony: The Making of the Sony PlayStation by Reiji Asakura, and Game Over: How Nintendo Conquered the World by David Sheff. Retro Gamer 69, 96, 108, 137, 170, and 188; Computer Gaming World of September 1997, October 1997, May 1998, and November 1998.

Online sources include Polygon’s authoritative “Final Fantasy 7: An Oral History”, “The History of Final Fantasy VII” at Nintendojo, “The Weird History of the Super NES CD-ROM, Nintendo’s Most Notorious Vaporware” by Chris Kohler at Kotaku, and “The History of PlayStation was Almost Very Different” by Blake Hester at Polygon.

Footnotes

↑1 Philips wasn’t, however, above exploiting the letter of its contract with Nintendo to make a Mario game and three substandard Legend of Zelda games available for the CD-i.

↑2 Sony did purchase the venerable British game developer and publisher Psygnosis well before its console’s launch to help prime the pump with some quality games, but it largely left it to manage its own affairs on the other side of the world.