Planescape: Torment, Part 1: From the Tabletop…

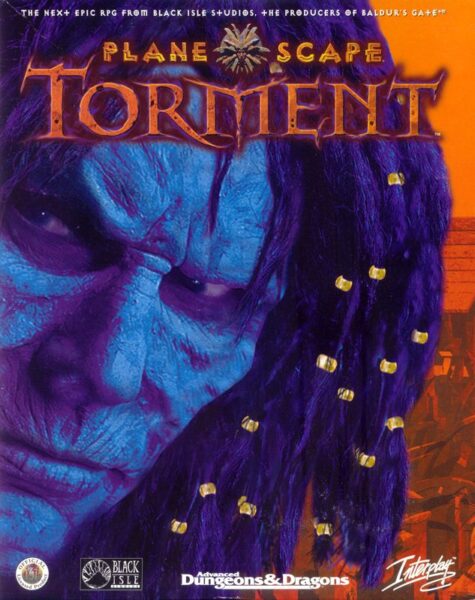

By 1999, Interplay had begun crediting its internally developed CRPGs to “Black Isle Studios,” a distinction that represented very little difference, given that Black Isle shared office space and personnel with its parent publisher. Note the careful choice of words on the box above, to call Black Isle the “producers” — not the developers — of Baldur’s Gate.

My power fantasy when playing a role-playing game is to confront a villain, explain point by point why his master plan is flawed, and then get him to admit that he hadn’t thought things through as carefully as I had, and ask me what I think he should do. Conversation-based player characters can have their bad-ass moments just as much as someone wielding a gun…

— Chris Avellone

Planescape: Torment is the damnedest game. Its list of failings is longer than that of many a game that I’ve simply written off as bad, full stop, and moved on from without a second thought. The pacing is glacial for long stretches; the interface is fussy and clunky; the combat is both irritating and utterly superfluous to the game’s design goals. Even much of the writing, by far the most celebrated aspect of Planescape: Torment, tends to seem proportionally less profound and more banal as one becomes farther removed in age and life experience from the twenty-somethings who first put all of these words — so many, many words, a reported 800,000 of them in all — onto our monitor screens more than a quarter-century ago. In so very many ways, Planescape: Torment is an undisciplined hot mess.

And yet it’s a hot mess that refuses to be dismissed lightly. For Planescape: Torment is also a vanishingly rare thing in the realm of game narratives: a genuine interactive tragedy, in the sense that Aeschylus, Shakespeare, and Nietzsche understood that word. That it recognizes the tragic side of life while inhabiting a genre whose whole point in the eyes of most of its fans is the triumphalism of going from a weakling to a demigod is incredibly brave and subversive. That it did this in 1999, when the games industry was smack dab in the middle of one of the most homogenized, risk-averse periods in its history, is as inexplicable as it is astonishing.

Clearly we have much to unpack…

TSR sold surprisingly few copies of the original Planescape campaign setting, even at the stupidly cheap price of just $30. It goes for $250 among collectors today.

Whatever else it is, Planescape: Torment is first and foremost a licensed adaptation of Dungeons & Dragons, a part of Interplay’s attempt to revive that storied tabletop game’s digital fortunes amidst the collapse of its parent company TSR and TSR’s acquisition by Wizards of the Coast. This particular computer game was no mere branding exercise, as was the case with some of them that came out in Dungeons & Dragons trade dress during the 1990s. On the contrary, Planescape: Torment was deeply, intimately informed by the creative work that took place in TSR’s Wisconsin headquarters earlier in the decade. The extent to which this is the case is often glossed over or forgotten entirely when retrospectives of it are written today. So, let me make it crystal clear here right from the start: love it or hate it, a huge chunk of what makes Planescape: Torment so unique and memorable originated not in Interplay’s Southern California offices but in the nation’s dairy-cow heartland.

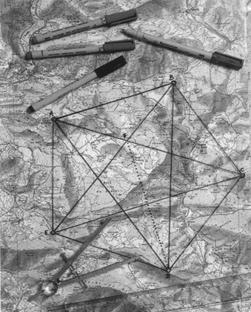

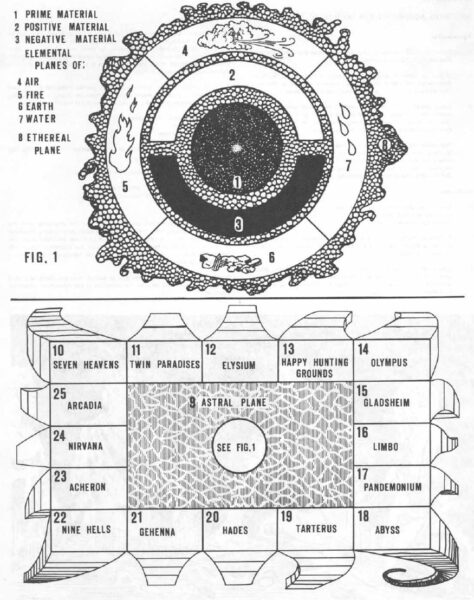

It will presumably surprise no one when I write that the “planes” of Planescape are alternate planes of existence, separate from the “Prime Material Plane” in which most Dungeons & Dragons campaigns take place. They were introduced by Gary Gygax already in the late 1970s, in the iconic first editions of the Player’s Handbook and Dungeon Master’s Guide. His cosmology was a melange of a little bit of everything: quantum physics, Renaissance-era alchemy and astronomy, the holy texts of various religions, New Age philosophy, Dante and Milton, twentieth-century fantasy and horror novels.

Gary Gygax’s vision of the Dungeons & Dragons multiverse, as found in an appendix to the Player’s Handbook.

The Prime Material Plane stands at the center of it all, much like the Earth was once imagined to stand at the center of our universe. It is surrounded by the Inner Planes that embody the physical building blocks of existence, which are in turn enclosed by the Outer Planes that embody the metaphysical alignments, those nine possible combinations of Lawful, Neutral, and Chaotic, Good, Neutral, and Evil.

Gygax was always prepared to muse and to elaborate, on this subject as on so many others. Small wonder that these alleged rule books — surely the most chatty and discursive books of rules ever written, the heart of the Gospel of Saint Gary — were perused and pored over endlessly by his young fans, many of whom were discovering for the first time the countless disparate philosophical ideas he threw into the pot. Gygax wasn’t an overly sophisticated thinker in most contexts, but he was a prolific one, who always had ten more ideas waiting in the wings if you didn’t respond to his last one.

For those of you who haven’t really thought about it, the so-called planes are your ticket to creativity, and I mean that with a capital C! Everything can be absolutely different, save for those common denominators necessary to the existence of the player characters coming to the plane. Movement and scale can be different; so can combat and morale. Creatures can have more or different attributes. As long as the player characters can somehow relate to it, then it will work…

I have recommended that Boot Hill and Gamma World be used in campaigns. There is also Metamorphosis Alpha, Tractics, and all sorts of other offerings which can be converted to man-to-man role-playing scenarios. While as of this writing there are no commercially available “other planes” modules, I am certain that there will be soon — it is simply too big an opportunity to pass up, and the need is great.

This was a remarkably prescient description of where planar travel in Dungeons & Dragons would go — eventually. For a long time after The Dungeon Master’s Guide appeared in 1979, the other planes of existence were one of those Dungeons & Dragons concepts that were kind of floating out there in the ether (or was it the Ethereal Plane?) without anyone knowing quite what to do with them. Apart from some sketchy guidelines for “ethereal” and “astral” travel and combat, the rule books remained sadly short on specifics. The 1980 adventure module Queen of the Demonweb Pits, designed by Gygax and David C. Sutherland III, did take players on a jaunt to the Abyssal Plane, but that was a one-shot thing. For all that Gygax had claimed, in his indelibly Gygaxian way, that “the need is great,” as if an understanding of the planes of Dungeons & Dragons was an urgent matter of national security, neither he nor anyone else seemed to be in all that much of a hurry to address said need. The occasional slightly dodgy article in Dragon magazine aside, Dungeons & Dragons remained in practice a very Prime Material sort of game.

This situation first started to change in the latter half of the 1980s. By then, Gygax was on his way out of TSR and the Dungeons & Dragons craze of the decade’s beginning had just about run its course. Necessity was forcing TSR to adjust its business model, from selling the core Dungeons & Dragons game to new players to selling an ever expanding lineup of rules extensions, campaign settings, and pre-crafted adventures to its surviving base of loyal, hardcore players. The planes seemed like fresh fodder for all three types of product.

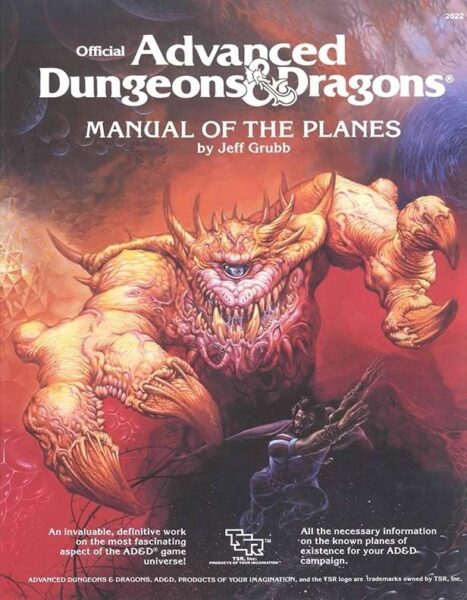

A longtime TSR stalwart named Jeff Grubb took the first concerted swing at it. In 1987, the company published his Manual of the Planes, the latest in its ever-growing line of new Dungeons & Dragons hardbacks for the hardcore. Grubb took it as his mission to give Gygax’s abstract cosmology a grounding in lived experience, to explain what it would actually be like to visit these places. Unfortunately, he prioritized alchemical realism over playability, winding up with a collection of environments that were as brutally, hilariously inhospitable to even high-level characters as one might imagine a plane of nothing but fire or air to be. “The book was fascinating reading,” notes Dori Hein, an ordinary Dungeons & Dragons fan at the time whom we will meet again in another role. “I loved the mythology and the grand majesty of all the planes, but — try as I might — I couldn’t create an adventure without killing all my players.” In the same vein, Sean Gandert of the website Exposition Break writes that “the planes’ complete resistance to being remotely welcoming is both what makes them fascinating to read about and also makes the book completely skippable and largely irrelevant. It is a work of cosmology and mythology, not a plan for where to send adventurers.”

The Manual of the Planes went out of print in fairly short order anyway, after TSR commenced rolling out a second edition of Advanced Dungeons & Dragons in 1989. The cynical interpretation of this initiative is that it was the best way TSR had yet devised for continuing to extract money from its static pool of players, by forcing them to buy the game they loved all over again in its most basic form in order to stay up to date with the times. The idealistic one is that it let TSR clean up a game system that had grown ever more baggily shambolic over the past decade of supplement after supplement. In reality, the second edition was doubtless a little of both, being seen one way by the people surrounding Lorraine Williams in her executive suite and another by the creative types in the cubicles.

That said, and looking back on what I’ve written about the later period of TSR’s history elsewhere on this site, I fear I may have overemphasized the cynicism at the expense of the idealism. There’s no question that the company fell prey to a set of perverse incentives during the last decade of its existence, many of them born out of idiosyncrasies in its longstanding distribution contract with the book publisher Random House. By the early 1990s, this had resulted in an absolute hailstorm of product brought down upon the heads of Dungeons & Dragons fans, more than all but the most well-heeled among them could possibly afford to buy, much less find the time to bring to the tabletop. But there’s likewise no question that these products were made with enormous love and care by the creative staff. This was the heyday of the alternative campaign setting, when TSR offered up the chance to leave conventional high fantasy behind and play Dungeons & Dragons in post-apocalyptic worlds, in the lands of the Arabian Nights, in Gothic castles, on the high seas, even in outer space. So what if there was no way to justify so many settings’ existence as commercial products, if each successive one sold worse than the one before, especially after the collectible-card game Magic: The Gathering arrived on the scene to tempt away large chunks of TSR’s remaining customer base? Circumstance had granted the people making these settings a rare reprieve from the harsh logic of supply and demand, and they didn’t let it go to waste.

Given this cavalcade of rich but disconnected settings, it was perhaps inevitable that TSR would look once again to the planar multiverse as a way of unifying a crazily diverse set of experiences bearing the name of Dungeons & Dragons. A boxed set reviving Gygax’s multiverse could bring them all together conceptually, could even provide a set of practical mechanisms to allow the same set of player characters to jump from setting to setting, just like Saint Gary had first proposed all those years ago.

In addition to being a unifying force for Dungeons & Dragons itself, Planescape was quite explicitly intended as a response to Vampire: The Masquerade, an RPG from an upstart company known as White Wolf Games that flipped everything you thought you knew about the tabletop scene on its head. Whereas Dungeons & Dragons, even in its supposedly cleaned-up second-edition incarnation, was infamous for the complexity of its rules, Vampire gave you just enough of them to provide a runway for storytelling. That fact, combined with its subject matter, attracted fresh blood to the hobby: Goth rockers and theater kids and Anne Rice readers, among them a surprising number of girls and women. At the end of the day, Vampire may have been full of as many clichés as vanilla Dungeons & Dragons — clichés which are all the more evident from the perspective of today, after several more decades’ worth of vampire fictions — but they had the advantage of feeling relatively fresh from the perspective of the early 1990s. Indeed, this was the only period in the entire history of tabletop RPGs when it seemed possible that a different game might just unseat Dungeons & Dragons from its throne as the undisputed standard bearer for the hobby. Vampire’s rise made TSR nervous enough to want to make something of its own that was grittier, messier, and a bit less morally straightforward, less of a single-unit wargame and more of a vehicle for improvisational drama. It was no accident that the Dungeons & Dragons brand appeared on the eventual Planescape box only as a small logo tucked away in the corner.

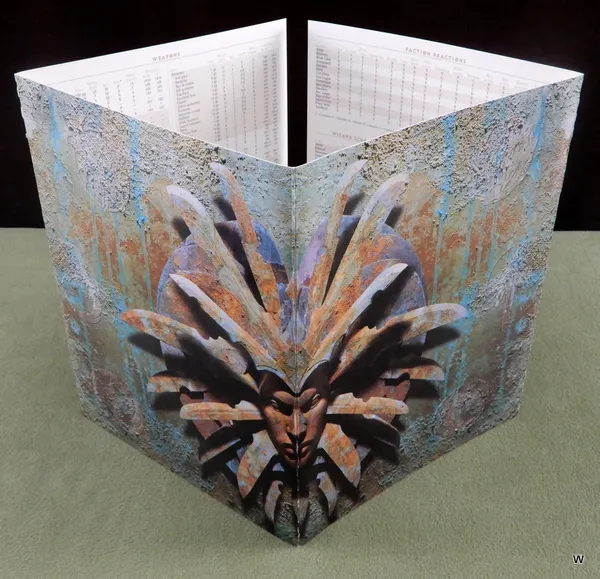

David “Zeb” Cook, another veteran TSR hand, was made lead designer on Planescape. Dori Hein, who had by now graduated from merely playing TSR’s games to working there, became the producer, overseeing a team of artists, cartographers, writers, editors, and play-testers. They pulled out all the stops for a set that wound up consisting of no fewer than four separate books, printed on thick and creamy Pentair Suede paper, and four sturdy cardboard posters. The luscious package was capped off by the most intimidating Dungeon Master’s screen ever devised. One of TSR’s purchasing managers had a sign hanging in his office: “The pleasure of a product well done lingers far longer than the excitement of a bargain.” As it happened, though, the Planescape set was both: it sold for just $30, a ridiculously cheap price for such a luxurious product even by the standards of the 1990s. It may have been no more than a break-even price, or not even that, settled upon in the hope that Planescape would revive TSR’s flagging fortunes in the longer run by spawning a whole new ecosystem of supplements, adventure modules, and tie-in novels.

The Planescape Dungeon Master’s screen. Sitting down around a table that had this thing on top of it, you knew you were in for a mind-bending journey that was more Salvador Dali than Boris Vallejo.

Zeb Cook’s first and most important stroke of brilliance was to give his vision of the planes a hub around which to operate. This was Sigil, a “city of doors” giving unto the many other planes, a meeting ground and melting pot for the entire multiverse. Ranging far afield from the pulpy fantasy of Jack Vance and the stately epic fantasy of J.R.R. Tolkien, the two most obvious inspirations for traditionalist Dungeons & Dragons, Cook read postmodern, experimental novels by Milorad Pavić and Italo Calvino for inspiration. Sigil, a city of angles as well as doors, became a physical embodiment of their twisted, self-referential approach to narrative: “Get it right out front: Sigil’s an impossible place, a city built on the inside of a tire that hovers over the top of a gods-know-how-tall spike, which rises from a universe shaped like a giant pancake.”

Sigil is not so refined a place as some might expect for the central hub of the multiverse, but that’s fair enough, given that Cook’s multiverse itself isn’t all that refined. The dominant note of the city, even outside of its plentiful and teeming slum districts, is what we might call dirty Victoriana, of a piece with 21st-century novels like Sarah Waters’s Fingersmith and Michel Faber’s The Crimson Petal and the White, which read like genuine Victorian “sensation novels” with the added ability to state outright the disreputable things that their ancestors could only imply. The dialect of Sigil’s streets is vintage Cockney slang in spirit if not always in the details of the vocabulary, with the same uncanny talent for being roundabout and penetrating at the same time: “berks” and “cutters” are no-account people; “the dark” is knowledge; “jink” is money; one’s “kip” is one’s (usually humble) abode; one’s “bone-box” is one’s mouth; to “pike off” means to scram. In keeping with all the best slang, these are words that you know when you hear them even if you don’t actually know them, if you take my meaning. As we’ve already seen, the books in the Planescape box that describe Sigil are themselves written in this vernacular: “Welcome, addle-cove!” begins the Planescape “Player’s Guide.” This is not the Dungeons & Dragons of 1980s school cafeterias; both dungeons and dragons are mostly missing from Sigil, replaced by far stranger things.

Instead of embracing the simplistic good-versus-evil dynamics of traditional Dungeons & Dragons, Sigil is divided into fifteen factions whose adherents are aptly described as “philosophers with clubs,” from the chivalric and vaguely fascistic Godsmen to the nihilistic Bleak Cabal, who preach that “once a sod believes it all means nothing, it all starts to make sense.” Ruling over the whole place, ensuring that no single faction gets too powerful, is the Lady of Pain, who can flay the skin from a poor berk just by looking at him. The overriding theme is that ideas and beliefs matter, are literally woven right into the substance of the multiverse, and can kill or save you just as indubitably as the physical elements of earth, air, water, and fire. Sigil is the ultimate argument for the value of a good humanities education.

If there’s a weakness to the Planescape set, it’s that it spends so much space on Sigil that it doesn’t have enough left over for all those other planes of existence that were supposed to be the whole point of the endeavor. Instead of offering a wide-open set of possibilities, it can feel paradoxically claustrophobic, like the crowded filthy alleyways of the city itself.

Nevertheless, the Planescape box was endlessly audacious and imaginative, as different from the typical Dungeons & Dragons experience as anyone could have asked for. But, whether despite or because of these factors, it was not a commercial success. It sold just 60,000 copies over the five years after its release in April of 1994, a thin foundation indeed on which to build a new gaming ecosystem. The add-on lines, which offered opportunities to flesh out the multiverse in some of the ways that the boxed set had failed to do, continued in fits and starts for longer than you might expect — another tribute to the topsy-turvy economic incentives that marked TSR at the time — but petered out for good after the failing company was acquired in 1997 by its own worst enemy, Wizards of the Coast, the maker of Magic: The Gathering. The Vampire craze did eventually fade, but its travails had nothing to do with TSR’s efforts. It was rather something to do with the ever-shifting winds of pop culture, which soon replaced teenagers’ Cure and Alice in Chains records with the Backstreet Boys and Britney Spears.

So, had things turned out just a little bit differently, Planescape would be fondly remembered today only by a few tabletop nostalgics as a piece of work of unusual vision that never got its due. Instead, though, it went on to become a landmark of another stripe, in a different medium entirely.

TSR had begun dangling the prospect of a Planescape computer game in front of publishers even before the boxed set shipped; such a thing was regarded as a potentially vital part of the product line that had become the latest Great White Hope for reversing the company’s accelerating downward spiral. Interplay rose to the bait, signing the contract before 1994 was out. In fact, it went so far as to hire Zeb Cook himself, who had concluded that “it didn’t seem like there was going to be a long-term future” for him on the tabletop. But the initial rush of enthusiasm petered out; Cook soon departed again, leaving the digital future of Planescape in limbo. And yet the idea of a Planescape computer game never completely went away. Late in 1995, when an inexperienced youngster named Chris Avellone came to Interplay for a job interview, he was asked how he would design such a game. He brainstormed on the spur of the moment the genesis of the eventual Planescape: Torment: “I would start it after the death screen. What happens after the main character dies?”

Avellone had grown up in the 1980s playing Dungeons & Dragons with his friends in his hometown of Alexandria, Virginia. By the time he went off to university, he had two possible futures in mind for himself: either to become a comic-book author or to become a tabletop-RPG designer. Neither field could exactly be called a growth industry at the time, but he made the best of it. On the gaming side, he sent a long string of submissions not only to TSR but to Steve Jackson Games, the maker of GURPS (“Generic Universal Role-Playing System”), and to Hero Games, the maker of the superhero RPG Champions. Initially, he met only with rejection; his closest brush with his heroes at TSR came when Monte Cook, yet another well-known name among the Dungeons & Dragons cognoscenti, took time out to plead with him personally to just stop submitting stuff already.

But Avellone persevered, and finally began to see some of his gaming material accepted and published. Yet he still had to confront the reality that the life of a freelance tabletop-RPG writer and designer left a little something to be desired: specifically, money. Most of the royalty checks that came in from the beleaguered companies that published his work — the Magic: The Gathering craze was in full flight, pushing RPGs to the margins of the same shops where they had once been the dominant attraction — had just two digits before the decimal point. Avellone, who had by now graduated from the College of William & Mary with a bachelor’s degree in English, was still at loose ends when it came to the all-important question of how he was going to put food on his table as a responsible adult. Everyone told him that the wise choice was to acquire a teaching certificate, but all he wanted to do was find a way to make games full-time.

Oddly enough, he had never seriously thought about becoming a computer-game developer, despite having played his fair share of The Bard’s Tale and its ilk as a teenager. It took Steve Peterson, his editor at Hero Games, to point out to him how different the economics of that adjacent industry were. Peterson pulled some strings to secure Avellone an interview at Interplay Productions, for something which he was unlikely to find anytime soon in the moribund tabletop field: an honest-to-goodness full-time job. He got the job.

Although he had been asked about Planescape at his interview, he wasn’t allowed to spend all or even most of his time on that perpetually incipient project after he was hired. As the low man on the totem pole, he was shuffled around from team to team, plugging gaps in the design plumbing wherever needed. He worked on the infamous Descent to Undermountain, the nadir of digital Dungeons & Dragons during the 1990s; on Conquest of the New World, Interplay’s workmanlike take on the same theme as MicroProse’s Colonization; and on Starfleet Academy, an attempt to do TIE Fighter in the Star Trek universe that never felt true to its source material, in that it had the usually stately likes of the USS Enterprise dog-fighting in space as if it was, well, a TIE Fighter.

But betwixt and between all of the above, Avellone sat in his cubicle writing his Planescape game. He did so as much for his own peace of mind — because he needed something that he could feel passionate about — as out of any real conviction that the game would ever get made. The winds blowing against it seemed positively gale-force. For by now it was clear that Planescape would not prove the savior of Dungeons & Dragons on the tabletop. The TSR boxed set had barely sold at all, even as, commercially speaking, CRPGs were scarcely in better shape than their tabletop counterparts in the mid-1990s. Interplay already had one game in the stagnant genre under active development, in the form of Fallout. That looked like one too many in the eyes of most of the bean-counters.

Slowly, however, the murky picture started to take on some brighter shades. Just as 1996 was turning into 1997, Blizzard Entertainment unleashed a game called Diablo. Debate raged on Usenet and the young World Wide Web over whether Diablo, with its procedurally generated dungeons and its emphasis on constant action over a fleshed-out narrative, was a “real” CRPG at all or just a watered-down pretender. What was undeniable, though, was that it sold like crazy, raising the question of whether more complex, textured CRPGs might be ripe for a revival as well. Meanwhile a bankrupt TSR was by now in the process of being acquired by Wizards of the Coast. Wizards was saying all the right things about resurrecting Dungeons & Dragons for this new era, and its Magic revenues left it primed to spend more money on that endeavor than TSR could ever have dreamed of even before the collectible-card-game craze had cleaned its clock.

In what had seemed at the time like a triumph of hope over recent experience, earlier in 1996 the Interplay producer Feargus Urquhart had enlisted a fledgling Canadian studio known as Bioware to make yet another Dungeons & Dragons CRPG for Interplay to publish. In what had seemed a minor stipulation of the deal at the time the contract between Bioware and Interplay was signed, the former had agreed to allow the latter full access to the “Infinity Engine” it planned to use to build and run the game. By the spring of 1997, those arrangements were looking like they might prove more important, both to Interplay and to the whole industry, than anyone had anticipated at the time.

The Bioware game, for which Feargus Urquhart himself had come up with the name of Baldur’s Gate, was pitched straight down the middle, being about as traditionalist as a Dungeons & Dragons CRPG could get. It took place in the game’s more or less default setting of the Forgotten Realms, a world that took every cliché of epic fantasy and ran with it. Obviously this was the safest choice for a revival. But, in the wake of Diablo’s smashing success, Urquhart thought there might be space to throw a curve ball as well, to serve as a more outré companion piece. He asked Chris Avellone to condense his massive Planescape notebook into a proper project proposal.

The proposal reached the desk of Brian Fargo, the founder and head of Interplay, at the end of June 1997. “There was always a balance in running a studio between being commercial, being creative, and having your creative people be happy, and having them do things that are interesting to them,” says Fargo. “I was willing to take creative risks from time to time in order to allow these things to happen. Planescape: Torment was clearly one of those. When it came across my desk, I said, ‘Well, that’s as high-concept as you can get.’ But I thought that RPG players would like it, and I loved the writing and sensibility they put into the document. That got me interested in doing it.” It didn’t hurt, of course, that it ought to be possible to do the game fairly cheaply, since it would be able to re-purpose Bioware’s Infinity Engine.

The heart of the Planescape: Torment team was lead designer Chris Avellone, lead programmer Daniel Spitzley, the artists Tim Donley and Aaron Meyers, and producer Guido Henkel (a recent German immigrant who had helped to make the CRPGs Blade of Destiny and Star Trail in his native land). The project was not a major priority at Interplay for the majority of its existence, even after Fallout came out late in 1997 and sold pretty well, thus demonstrating that there truly was a reasonably sized market for more complex, conversation-heavy CRPGs than Diablo, provided that they were done well. In fact, in an ironic sort of way, Fallout’s success was to Planescape: Torment’s detriment. Eager to capitalize on the first non-sequel, non-licensed Interplay release to garner an appreciable buzz among hardcore gamers since Descent in 1995, Brian Fargo decreed that a Fallout 2 had to come out within a year of its predecessor. As a result, Planescape: Torment was all but suspended for much of 1998, while most of the team, Avellone included, moved over to pitch in on the Fallout sequel.

Although they did get it done on time, the biggest CRPG success story of the Christmas of 1998 proved not to be Fallout 2 but rather Baldur’s Gate, which introduced digital Dungeons & Dragons to a whole new generation of gamers who were more familiar with Diablo than Pool of Radiance. Just like that, Dungeons & Dragons on the computer became a hot topic again. With a Baldur’s Gate II not slated for release until 2000, Planescape: Torment was left to carry the Infinity Engine water in the interim. That brought a fresh influx of energy and resources to the project, and these were sufficient to get the game finished just in time for the Christmas of 1999.

It entered stores accompanied by stellar reviews whose fulsome praise felt only slightly obligatory in a Stockholm Syndrome sort of way. (Many reviewers did point out the “tome of text” to be read in tones that suggested that they might not have found it as uniformly delightful as their five-star verdicts suggested.) Nonetheless, as a computer game based on a tabletop setting that had been discontinued more than eighteen months earlier, Planescape: Torment was in a strange position for a licensed product. Even against weak competition — the only other high-profile CRPG release that holiday season was the abjectly terrible Ultima IX — the game’s sales were a shadow of the figures put up by Baldur’s Gate. In an ironic way, the lack of ringing commercial success may have been a positive for Planescape: Torment’s legacy, confirming its modern status as a cult classic that’s for the CRPG sophisticates rather than the hoi polloi.

As for my opinion… well, I’m afraid I’m going to need another article to properly interrogate the reputation and reality of the game. For, whether one happens to be sitting with the prosecution or the defense or just back in the jury box trying to sort through it all, the case of Planescape: Torment is a complicated one.


Sources: The books Slaying the Dragon: A Secret History of Dungeons & Dragons by Ben Riggs, Beneath a Starless Sky: Pillars of Eternity and the Infinity Engine Era of RPGs by David L. Craddock, and Designers & Dragons: A History of the Roleplaying Game Industry volumes 1 (the 1970s) and 3 (the 1990s) by Shannon Appelcline; Dragon of March 1994, April 1994, May 1994, July 1994, and August 1994; Computer Gaming World of March 2000 and April 2000; the 2015 GamesTM special issue on “controversial” games; Retro Gamer 113. Plus the Advanced Dungeons & Dragons Player’s Handbook, Dungeon Master’s Guide, Manual of the Planes, and the Planescape boxed set. Plus the materials found in the Brian Fargo Collection in the archives of the Strong Museum of Play.

Online sources include Soren Johnson’s interview with Chris Avellone for his Designer’s Notes podcast, a Last Game Standing interview with Avellone, and Sean Gandert’s series of articles about the evolution of planar travel in Dungeons & Dragons for the website Exposition Break.

Where to Get It: Planescape: Torment is available as a digital purchase from GOG.com in an “enhanced edition.” Buying it also gives you access to the original version.

The Mystery of Rennes-le-Château, Part 5: The Man Behind the Curtain

It is possible to trace the Plantard family tree a fair ways back without relying on the Lobineau dossier, but not as far back as the time when the Merovingian kings ruled over the nascent nation of France. The real genealogical record shows that the Plantards were not a line of kings or demigods; they were rather ordinary laborers. The first trace we can find of them comes in the form of one Jean Plantard, who arrived from parts unknown to the town of Sémelay, about 300 kilometers southeast of Paris, during the first half of the seventeenth century. His descendants continued living in this region of France.

A Pierre Plantard was born in the village of Magny-Cours on October 11, 1877. Following a stint as a coal miner and a term of service in the French Army, he moved to Paris early in the new century to become a valet and butler, probably to one of the officers under whom he had served. (Such arrangements were common at the time.) He was called up again in that fateful month of August 1914, and spent the entire First World War fighting on the Western Front with his artillery regiment. He then returned to Paris to take up his domestic duties once again, working now for a wealthy Russian family who had fled the Bolshevik revolution in their country. He died in 1922 from a fall out of a second-floor window he had been attempting to clean, a sadly ironic fate for a man who had managed to survive four and a half years of brutal war.

The older Pierre Plantard died from a fall out of one of these windows. One wonders whether his son might have turned out differently if he had grown up with both of his parents.

But before he left this world, Pierre Plantard fathered a son with his wife Amélie, a fellow domestic servant. Born on March 18, 1920, the son was given the same name as the father he would never know.

This new edition of Pierre Plantard grew up coddled by his mother. By working day and night, she was able to supplement the small pension she had been awarded after the death of her husband enough to provide him with a reasonably good primary-school education. She and her son were mutually convinced that young Pierre was destined for greater things than his father, or, indeed, any of his other less-than-august ancestors.

Still, it wasn’t clear just how he was to rise to the level of his just deserts from such a humble point of origin. After he finished his standard schooling in 1937, his mother found that university was far beyond her means, no matter how many hours she worked or how she scrimped and saved. Being still in full agreement with his mother that he was above manual labor, Pierre spent almost all of his time in their apartment, expressing his political views in pamphlets which he gave away for free. He was of a type with a certain species of young man that can be found in the less savory corners of the Internet today (and that occasionally shows up in this site’s usually friendly and thoughtful comment section to sound a discordant note). Intelligent in some ways but socially awkward, they channel the grievance born of their social isolation into reactionary cultural and political views. In the context of 1930s Europe, the endpoint of that journey was almost always fascism and its even uglier handmaiden of antisemitism.

Alas, Pierre Plantard was no exception. He advocated for the “purification and renewal of France,” a euphemism which, I trust, requires no elaboration. As an admirer of Adolf Hitler, he was traumatized when his country declared war against Germany in response to the latter’s invasion of Poland in September of 1939. He immediately dashed off a letter to Prime Minister Édouard Daladier to demand that he “put a stop to a war started by the Jews which we cannot win.”

In the decades to come, Plantard would claim that he joined the Resistance after a Nazi army marched into Paris in June of 1940. But in the early stages of the occupation at least, nothing could be farther from the truth. He was in fact an admirer and booster of the Vichy puppet regime that was installed to govern part of France under Marshal Philippe Pétain, an 84-year-old hero of the First World War who, to paraphrase the later words of Charles de Gaulle, went from glorious to deplorable in the view of French history in the space of an instant. A letter which Plantard wrote to Pétain on December 16, 1940, shows his delusions of grandeur already in fine fettle at age twenty. Claiming that he has knowledge of an assassination plot against Pétain, Plantard pushes the doddering old man to get a move-on implementing the Holocaust before it is too late.

Please forgive me for taking the liberty of writing to you this evening. Despite my various commitments, my lectures and my magazine, I am perhaps still unknown to you…

I know that, in the depths of your soldier’s heart, you are suffering from the knowledge that the people of France are questioning your sincerity and your patriotism, and are suffering more perhaps from that than from the effects of our recent disaster. But I know also of your great love for our country, and I am certain that you will do everything possible to save it once more…

You must act! Immediately upon receiving this letter you must issue strict but totally confidential orders. You must put an immediate stop to this terrible Masonic and Jewish conspiracy in order to save both France and the world as a whole from terrible carnage.

At present I have about 100 reliable men under me who are devoted to our cause. They are ready to fight to the bitter end in response to your orders. But what is 100 men when faced with the might of our enemies? Whatever the case may be, they will fight alongside me for our cause.

This letter would be laughable if the undertone weren’t so dark. Plantard’s magazine was an amateurish pamphlet he passed out for free on the street; his lectures were nonexistent, as was the crack squad of 100 soldiers at his beck and call. He was just a skinny kid writing screeds in his mother’s apartment in lieu of facing the real world outside. Yet he would prove bizarrely adept at inhabiting an imaginary world throughout his life, never allowing reality to get too close to him.

In 1941, Plantard claimed to be the head of a youth group called La Rénovation Nationale Française (“The French National Renewal”). Under its auspices, he petitioned the Parisian police to let him seize the home of M. Shapiro, “an English Jew who is presently fighting alongside his fellows in the British armed forces,” to use as the group’s headquarters; he said he had already gotten permission to do so from the occupying Nazi authorities. In response, the police launched an investigation of his affairs. The verdict was as dismissive as it was scathing. From our standpoint, the most shocking aspect of the report is how fully formed Plantard’s modus operandi was at such an early juncture.

M. Plantard describes himself as a journalist; in fact he lives entirely off his mother, who holds a pension granted to her following the accidental death of her husband…

Plantard, who boasts of having links with numerous politicians, seems to be one of those dotty, pretentious young men who run more or less fictitious groups in an effort to look important and who are taking advantage of the present trend toward a greater interest in young people in order to attract the government’s attention…

La Rénovation Nationale Française seems to be a “phantom” group whose existence is purely a figment of the imagination of M. Plantard. Plantard claims 3245 members, whereas this organization currently only has four members…

To date no meetings have been held…

It would seem that this organization is doomed to failure.

The report concludes that Plantard should not be allowed to steal M. Shapiro’s house, even if the latter is a Jew.

But Plantard soldiered on undaunted. By January of 1943, La Rénovation Nationale Française had morphed into a magazine called Vaincre (“Conquer”). Its mission was “to restore to the Fatherland the strength to live through an ideal based on chivalry and self-denial.” In what some might consider an abnegation of its stated ideal, Plantard still hadn’t found a paying job or moved out of his mother’s apartment.

His lifestyle was about to be dramatically disrupted, however. At some point during 1943 or 1944, Plantard was sent by the Nazi occupying authorities to Fresnes Prison, the primary holding place for Resistance agitators among others, for a four-month stint. This event is documented only by the French police, who were unsure of the reason for the sentence; the report author’s suspicion is that it was handed down primarily out of annoyance and exasperation, because Plantard had been badgering the German authorities with requests related to his various associations and publications as persistently as he had been the French ones. All of the relevant German documents seem to have been lost. Regardless of the reason for the prison stint, those four months, unpleasant though they doubtless were, would show themselves to have been a blessing in disguise after France was liberated, in that they prevented Plantard from being tarred with the broad brush of a full-on Nazi collaborator.

Indeed, he was quick to change his tune after an Allied army marched into Paris in August of 1944. The following month, he founded his latest organization, Alpha Galates (“Alpha Galatians”). Its object was “the creation, maintenance, and development of one or more welfare centers for young people who have suffered from German oppression (forced labor, deportation, imprisonment).” The one bar to membership was “to have been a member of any German or pro-German organization,” a standard which Plantard himself arguably didn’t meet. Another report in the archives of the French police, this one dated February 13, 1945, concludes that “this association has not engaged in any activity. It has had about 50 members, who resigned one after the other as soon as they sussed out the president of the association and worked out that it was not a serious enterprise. Plantard seems to be an odd young man who has gone off the rails, as he seems to believe that he and he alone is capable of providing French youth with proper leadership.” At the time of this report, he was back to living with his mother and still unemployed.

But not for too much longer. In December of 1945, the perpetual adolescent dipped a toe into the adult world. He got married to a woman named Anne-Léa Hisler and left the nest at long last. His activities over the next ten years are murky. He, his wife, and the daughter that would soon result from their union appear to have lived for a good part of that time in the town of Saint-Julien-en-Genevois, close to the border with Switzerland. He appears to have served two short prison sentences there, one in 1953 and the other in 1956; his wife left him around the time of this second prison stint, although the couple would not be formally divorced until 1970. The details of the convictions remain vague, thanks to French privacy laws, but mention has been made of “breach of trust,” “bad checks,” “fraud,” and, most alarmingly, “abuse of a minor,” which may refer to sexual relations with an underage girl. It isn’t clear how Plantard earned a living during these years; his attempts at playing a confidence man seem not to have been terribly lucrative. He himself would later mention working as an architect’s draftsman, but this may have been cover for other, humbler forms of employment.

It was also in 1956 that the series of events which would lead to the likes of The Holy Blood and the Holy Grail and The Da Vinci Code began in earnest. You may remember that it was in January of this year that Albert Salamon wrote the first French newspaper accounts of François-Bérenger Saunière’s possible hidden treasure. Later this same pivotal year, either just before or just after going to prison, or possibly from his prison cell, Pierre Plantard registered an organization called the Priory of Sion with the French government.

It is tempting to read more than coincidence into the dating of these events. But we must avoid falling into the same trap that poor Henry Lincoln tumbled into again and again. There is no concrete reason to believe that Plantard read Salamon’s newspaper articles at the time they first appeared. Anathema though the notion is to conspiracy theorists, sometimes coincidence is just coincidence.

At this stage, the Priory of Sion was very much of a piece with what had come before, one more shell organization occupying a liminal space between a scam and a delusion, whose claimed membership count was at extreme odds with the reality of Plantard himself and a few others whom he had persuaded or cajoled into signing on as his “board.” As a subtitle, the Priory bore the acronym C.I.R.C.U.I.T.: Chevalerie d’Institution et Règle Catholique et d’Union Indépendante Traditionaliste (“Knighthood of Catholic Institution and Rule, and of Independent Traditionalist Union”). Its founding statutes make it sound like an amalgamation of two of Plantard’s previous organizations, La Rénovation Nationale Française and Alpha Galates. It borrows from the former an interest in chivalry and Medieval life in general, from the latter an expressed interest in doing good deeds for the community. On the whole, it comes off like a French version of the Society for Creative Anachronism, albeit with a reactionary edge lurking in the corners. As a concession to the changing times, the overt fascism and antisemitism have been excised, but one can still feel their undertow beneath the surface: “The aim of the association is to found a Catholic order, with the intention of recreating in modern form, while preserving its traditional character, an ancient knighthood, which by its actions promoted a highly moralizing ideal and was a factor for steady improvement in the rule of life of the human personality.”

A C.I.R.C.U.I.T. newsletter from the fall of 1956 — the acronym was actually more prominent than the name the Priory of Sion early on — promises to institute a bus service for the local neighborhood, “to take your children to school and then bring them home again.” The fee is just 360 francs per month for one child, or 500 francs for two or more: “We would kindly ask you to enter details of your children on the attached form and deposit it in the letterbox of M. Plantard, Hill B, before Sunday evening, 21 October 1956.” There is no reason to believe that these buses ever ran. For that matter, it seems doubtful that this first incarnation of the Priory of Sion existed in even nominal form by the time 1956 was over.

Plantard returned to Paris in 1958, just as France was being plunged into its most serious political crisis of the postwar era. A revolt in the colony of Algeria had exposed the ineffectuality of the current system of parliamentary democracy, which was known as the Fourth Republic. In response, Charles de Gaulle, the hero of the Second World War, led a sort of soft coup that resulted in a system of government with more resemblance to the American approach than that of most European countries, complete with a strong president. De Gaulle himself became the first of these presidents.

While the outcome of his efforts was still in doubt, de Gaulle’s supporters all over France created organizations that bore the vaguely ominous name “committees of public safety” to forward his cause. These were catnip for Pierre Plantard. Forgetting that he had once preferred Marshal Pétain to General de Gaulle, he began to present himself to the press as one of the leaders of these committees. He was quoted in this guise several times by France’s national newspaper of record, Le Monde; this was rising higher than his fantasies had ever taken him before.

Once the Fifth Republic was firmly established and the committees dissolved, Plantard discovered a new vehicle for his schemes, one that would prove immensely important to the later history of the Priory of Sion and Rennes-le-Château. He found out that, through some colossal administrative oversight, anyone could deposit unvetted documents into the Bibliothèque Nationale. After this was done, they would be assigned a catalog number and be made available to researchers just like any other item in the library’s vast holdings. The Holy Blood and the Holy Grail, The Da Vinci Code, and all the rest of the lore and lucre of Rennes-le-Château could never have come to pass without this bureaucratic tribute to our natural instinct to trust one another. For trust was a quality just waiting to be weaponized by a man like Pierre Plantard.

His first application of the technique was to deposit documents which seemed to show that he had worked as de Gaulle’s right-hand man among the citizenry while the Fifth Republic was hanging in the balance. The clincher was a personal letter from the general — a forged one, naturally. (One problem with all of Plantard’s efforts at forgery is that he couldn’t shift himself from a stilted and fussy prose style that becomes readily recognizable, even when translated into English, as soon as you’ve seen it two or three times.)

My dear Plantard,

In my letter of 29 July 1958 I told you how much I appreciated the part that the committees of public safety had played in the restoration work that I have undertaken. Now that new institutions are being proposed that will enable our country to once again assume its place in the world, I think that the committees of public safety should be released from the obligations that have been imposed upon them to date and that they can be demobilized.

This letter would later entice Henry Lincoln and his co-authors to make the ludicrous assertion that de Gaulle had “turned specifically to M. Plantard for aid” in realizing the Fifth Republic. (This after Plantard had engaged in “laudable activity” during the Second World War, including “editing the Resistance journal Vaincre,” and had then been imprisoned “more than a year” by “the Gestapo.” In between these two heroic periods of his life, he had resided near Lake Geneva, Switzerland, by explicit invitation of that country’s government, to be “one of the éminences grises from whom the great of this world seek counsel.” It certainly sounded better than languishing in a provincial prison cell for petty crimes.)

Plantard had been putting on aristocratic airs all his life, part and parcel of his longstanding fixation on the vanished life of the Middle Ages. By the time he returned to Paris, he had likely invented the idea that he himself was a long-lost scion of noble blood, and may even have already linked himself to the Merovingian dynasty of almost a millennium and a half ago. But his self-aggrandizing fictions would never have reached as many people as they eventually did if he hadn’t met Philippe de Chérisey.

De Chérisey was exactly what Plantard most devoutly wished to be: a real nobleman, a count to be specific, with a pedigree stretching well back into Plantard’s beloved Middle Ages. But whereas Plantard had spent his life running toward a noble title that could become his only through deception, de Chérisey had spent his running away from the expectations of his family. Born in Paris three years after Plantard, he had enrolled in acting school as soon as the end of the Second World War made it possible. Adopting the stage name of Amédée as a sop to his family’s sensibilities, he appeared on radio and in a few reasonably successful films during the 1950s, until his other habits began to interfere with his career.

For de Chérisey was an unrepentant drunk, womanizer, and all-around bon vivant who wore his dissipation like a badge of pride, as if he had stepped straight out of the pages of Les Liaisons Dangereuses. He was also a card-carrying Surrealist, whose watchwords were anarchy and absurdity, born out of the belief that nothing in life — absolutely nothing — was worth taking all that seriously. The best reason to do something was because it was fun; the best reason not to do something was because it was not fun. Surrealist moral philosophy extended no farther than that.

De Chérisey collected rogues and misfits whom he found amusing in the way that some other people collect stray cats and dogs. We don’t know exactly how he came into contact with Pierre Plantard, but it is clear that he found that man, who approached his con artistry with all the gloomy intensity of a knight about to ride into bloody battle in the name of his lord and savior, incredibly amusing. Plantard didn’t so much willfully lie as attempt to reconfigure the order of things to the way it ought to be, with himself in the place of prominence that he deserved. De Chérisey, who already had that which Plantard most desired and affected not to care a whit about it, found it hilarious that such an unprepossessing man as this one could have such delusions of grandeur. He began paying Plantard’s bills in Paris so that the bizarre fellow could have free rein to do his bizarre thing.

De Chérisey happened to have another amusing friend, a fellow traveler in Surrealist circles named Gérard de Sède, who was working on a book about the Knights Templar that was to be, shall we say, not an overly rigorous history of the Medieval chivalric order. De Chérisey put the “hermeticist” Plantard in touch with de Sède. De Sède went on to quote Plantard prominently in his book.

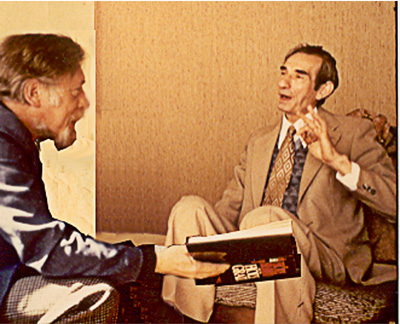

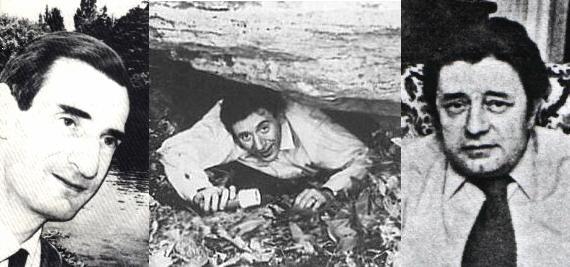

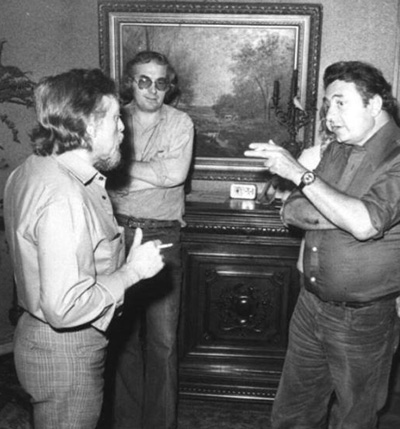

The real conspirators of Rennes-le-Château: Pierre Plantard, Philippe de Chérisey and Gérard de Sède.

It cannot be emphasized enough how essential de Sède and de Chérisey were to everything that followed. Plantard was as dull and pedantic by nature as he was dishonest; de Sède once described him as “a big nocturnal bird, very gloomy, very tall, very skinny. He’s not cultured; in fact, he’s quite ignorant.” De Sède and especially de Chérisey, on the other hand, were possessed of all the joie de vivre which Plantard lacked. They were able to give his ponderous genealogies a spark of wit and whimsy, able to turn the fraud into a game that could prove almost as enticing to those who didn’t believe in it as those who did. (Witness: the fact that this series of mine has now extended to five articles…) There is a reason that Plantard didn’t become a successful con man until he met de Chérisey and de Sède, the same reason that he was plunged into relative obscurity again almost as soon as the relationship ended.

Just as the exact time and circumstances of Plantard and de Chérisey’s first meeting are unknown, it isn’t clear when Rennes-le-Château first crossed their radar either. In the late 1970s, Plantard would spin a tale of a youthful visit.

I went to Rennes-le-Château in August 1938 to recover some letters which the priest Saunière had received from my grandfather. It was during the holidays and I was not yet twenty years old. ‘Marinette’ [Dénarnaud], as they called her in the village, received me very hospitably at the Villa Bethania; I stayed there for three days. We celebrated the 70th anniversary of the old lady…

This upper-crust vision of tea in the garden of Saunière’s villa is a complete fabrication, like most of what Plantard said during his life.

In the category of more reliable witnesses, the Carcassonne librarian René Descadeillas believed Plantard made his first appearance in the area already in the late 1950s. This may be correct, but it seems at least as likely that this is a rare instance of Descadeillas being mistaken. For a documented reference to Rennes-le-Château from the pen of Plantard can’t be found until January 18, 1964. He may very well first have learned about Saunière and his alleged treasure the same way that so many of his countrymen did, through the documentary that aired on French television in early 1961. However it happened, he became one of the collection of misfits, cranks, and dreamers who took to hanging about the place, standing out only for being a bit more extreme in all three of those descriptions than most of the others. Calling him “strange,” Descadeillas wrote that

this man lived in Paris. He had no connections and no known relatives in the area. He was a difficult fellow to place, drab, secretive, cunning, with the gift of the gab, but people who spoke to him said it was hard to follow what he said. He was not having a course of medical treatment [the nearby town of Rennes-les-Bains, once the home of the French Lewis Carroll Henri Boudet, was known for its medicinal springs]. People asked about the reasons for his regular appearances, because he turned up unexpectedly, even in winter. They also speculated about his interest in archaeological and natural sights, because he was not an intellectual. They were intrigued by the strangeness of his behavior: he used to go around surveying the area and inquiring about the origin of properties. He would set his heart on scrubland or abandoned ground which did not interest anyone.

Philippe de Chérisey reportedly accompanied Plantard on some of these trips, and presumably funded all of them. Together, the two of them began to concoct the narrative that would soon spread through France and then the rest of the world, through the mediums of Gérard de Sède and Henry Lincoln respectively. Plantard may already have been working on the fake genealogies that were meant to suggest that he was a long-lost scion of the Merovingian dynasty for quite some time by this point. The earliest of those documents states that it stems from 1956 (again, that pivotal year!), but it’s impossible to say if this date is accurate. But his masterstroke — or perhaps de Chérisey’s — was to link these fantasies with the ones surrounding the treasure of Rennes-le-Château, thereby lending them the verisimilitude of being centered on a very specific place, one that anyone could go and see for themselves. Stumbling upon the tomb of a once-wealthy American heiress and her mother, constructed only 40 years before, Plantard thought it looked like the one seen in Nicolas Poussin’s The Shepherds of Arcady and worked that into the story. And of course the Priory of Sion was added to the plot, no longer as a Medieval-revivalist social club but as a shadowy cabal that had been associated with the hidden Merovingian bloodline since before the Crusades.

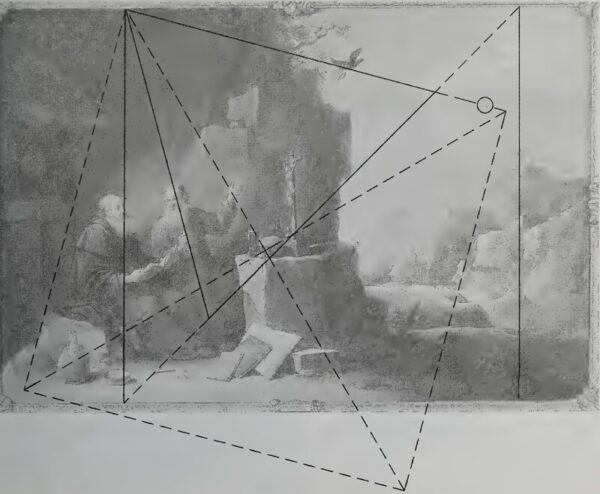

The reasons for the inclusion in the lore of the painting by David Teniers the Younger and the anonymous portrait of Pope Celestine V were and have remained far less obvious, such that they have been confusing treasure hunters ever since. Ditto Le Serpent Rouge, the nonsensical poem that also made its way into the growing dossier. De Chérisey, the unapologetic Surrealist prankster, must have relished blending the soluble conundrums with insoluble ones such as these, all in the service of turning the inept failed con artist Pierre Plantard into a king. What could be more absurd and hilarious?

Between 1963 and 1967, Plantard and/or de Chérisey deposited into the Bibliothèque Nationale the tranche of documents that Dan Brown would grandiosely call Les Dossiers Secrets in the opening pages of one of the best-selling novels in history. The holy texts of what would become practically a new religion to some are to be found right here. Among them are the genealogies of the Merovingians (and the Plantards), the list of Grand Masters of the Priory of Sion, and François-Bérenger Saunière’s connection to the mystery, including his fabled trip to Paris to have some “parchments” decoded and to buy three particular paintings. In short, the Lobineau dossier is the new religion’s version of the Bible or Koran. In time, much would be added to the theology that didn’t appear there, but it was the foundation of all that was to come.

It was de Chérisey who created the textual puzzle boxes we have been referring to as Altar Documents 1 and 2. He forged Gravestone 1, depositing it into the Bibliothèque Nationale with the names of Eugène Stüblein and a recently deceased local priest named Joseph Courtauly attached, and repurposed Gravestone 2 from an old journal put out by a Languedoc society of gentlemen scholars. We know all of this because he later told the French author Jean Markale exactly how he went about “inventing a story that the mayor [of Rennes-le-Château] had had a tracing made of the parchments discovered by the priest. Then I started to devise a coded copy based on passages from the gospels, and then I decoded myself what I had encoded.” The actual messages hidden in the documents were de Chérisey’s usual mixture of the sensical and the nonsensical. The results were superficially clever, but positively riddled with tells that they were far from aged, from the modern typesetting to the use of a far too recent edition of the Vulgate Bible. Nevertheless, they would, as de Chérisey put it, “work beyond my wildest dreams.”

For many years, it was assumed that Gérard de Sède was more or less a useful idiot in all of this, nothing more than Plantard and de Chérisey’s dupe for getting their invented mythology out into the world. But the indefatigable Rennes-le-Château skeptic Paul Smith, who has been fighting the good fight on his website since three years before Dan Brown’s novel hit, believes that de Sède, being a card-carrying Surrealist in his own right, was in on the joke all along. Smith’s theory of the case is that “originally, Plantard wrote a manuscript on the Rennes-le-Château story and during an eighteen-month period approached countless publishers to buy it. Nobody was interested. De Sède then rewrote the book, made it more comprehensible and less cluttered with detail. The result was the book L’Or de Rennes which was published in 1967 by Julliard.”

By way of bolstering his case, Paul Smith has placed the original publication contract for L’Or de Rennes on his website. Signed on January 13, 1967, it considers de Sède and Plantard to be full-fledged co-authors, and unequal ones at that: de Sède is to be awarded just one-third of the book’s royalties, Plantard the remaining two-thirds. The contract concludes that “in accordance with Mr. Pierre Plantard’s wishes, his name will not appear in any way either in the text or in any publicity relating to it.”

So, no one associated with the book was innocent; it was a knowing scam from top to bottom. The crowning masterstroke, which reeks of de Chérisey’s devious mind, was the decision to leave one fairly easily decipherable secret message undecoded, as a sort of exercise for the reader. That pulled Henry Lincoln into an intellectual black hole that he would never escape, providing the trio with a dupe par excellence.

We don’t need to dwell unduly on what happened during the 1970s, on how the myth-makers fed Lincoln a steady drip of fresh information, until he reached the point of making up his own lore, thus making the conspiracy theories self-sustaining. It seems unlikely that anyone had thought about secret geometries in the paintings and landscapes before Lincoln. And the ultimate piece of plot inflation, the transformation of Pierre Plantard from merely a long-lost king to a demigod on Earth with the sacred blood of Jesus Christ coursing through his veins, definitely caught the trio by surprise. It first occurred to Lincoln as a potential answer to a thoroughly commonsense question: why did it really matter to anyone, other than perhaps some scholars of genealogy and Medieval history, even if Plantard really was a long-lost Merovingian? On the other hand, everybody could understand why it mattered if he was Jesus’s direct descendant.

Ironically, the French themselves realized the truth about the conspiracy theories they had sprung on the world far earlier than everybody else. Already in 1974, when Henry Lincoln had barely gotten started, René Descadeillas returned to the subject out of sheer exasperation with the misinformation he saw flying all around him from his perch in the library of Carcassonne. This time, he wrote and published a proper book, Mythologie du Trésor de Rennes (“Mythology of the Treasure of Rennes”), thoroughly debunking two notions: that Saunière had been anything but a corrupt priest with a taste for simony, and that the various documents being passed around by de Sède could be anything more than an elaborate, eminently Gallic practical joke. Several other French authors wrote similarly detailed exposés in the decades that followed, but none of them were translated into other languages to directly challenge the gospels of Henry Lincoln and Dan Brown in the wider world.

The real, three-man conspiracy that stood behind it all had already started to fall apart by the end of the 1970s. De Sède was the first to break ranks, angry at Plantard for communicating directly with Lincoln instead of using him as his exclusive intermediary. He was already gone when Lincoln filmed his fateful interview with the would-be Merovingian scion in March of 1979. Switching sides with the fecklessness of a conspiracist spurned, in 1988 he would publish the book Rennes-le-Château: Le Dossier, les Impostures, les Phantasmes, les Hypothèses (“The Dossier, the Deceptions, the Fantasies, the Hypotheses”). It would explain almost everything, except the extent to which de Sède himself had been in on the scam all along.

Plantard’s break with de Chérisey came a few years after the one with de Sède, after Lincoln and his co-authors trumpeted the theory that Plantard was a direct descendant of Jesus Christ in The Holy Blood and the Holy Grail. He took extreme exception to this assertion, for reasons that aren’t really clear. One would think that this man of all men would love to be known as a demigod walking the Earth, but such was not the case. It may have been that, underneath all of the lies and deceptions, the Catholic faith he professed was in some sense genuine, and couldn’t abide a blasphemy such as this. Or it may have been simply that Lincoln and his cronies got rich by building on his cherished myth, while he did not.

De Chérisey, by contrast, loved this ultimate theory of the case, clearly seeing it as the perfect final punchline of the joke he had unleashed upon the world fifteen years earlier. He wrote articles and letters telling people so, which led Plantard to cut ties with him, as he already had with Henry Lincoln. De Chérisey died relatively young in 1985, a victim of way too much aristocratic decadence. Plantard refused to attend the funeral.

In the aftermath of the rupture, Plantard attempted to reboot a fraudulent legacy that, he must have felt, had gotten too far out of his control. He went back to his usual playbook, re-inaugurating the Priory of Sion as a social organization with a philosophy and a magazine but a dubious roll call of actual members. According to the latest mythology, the Priory dated not from before the Crusades or even from after the Second World War, but rather from September 19, 1738. I could try to explain the new secret history, which retained some elements of the old one and discarded others, but what would be the point? Suffice to say that Plantard was still up to his old tricks. Unfortunately for him, the loss of his co-conspirators meant that his efforts were subject once again to the weaknesses that had undermined his earlier attempts at fraud: his tendency toward pedantry, his tangled prose, his lack of personal charisma, and his utter inability to tell a straightforward story without getting himself and his reader irretrievably lost in the weeds.

One technique which Plantard had long used to good effect was that of attaching his tales to those of famous dead people, who, being dead, were unable to contradict him. “He was first and foremost a past master at putting words into the mouths of the dead,” says Jean-Luc Chaumeil, the author of several books about the mythology of Rennes-le-Château. “Once someone had died he produced all sorts of documents, letters, and so on, all forgeries of course.” This longstanding practice — as demonstrated most famously in his list of Grand Masters for the Priory of Sion, without which Dan Brown’s novel would have been left without a title — remained one of his go-to moves as he entered his septuagenarian years. This time around, however, it would wind up hoisting Plantard with his own petard, in a way delightfully surreal enough to cause Philippe de Chérisey to giggle in his grave.

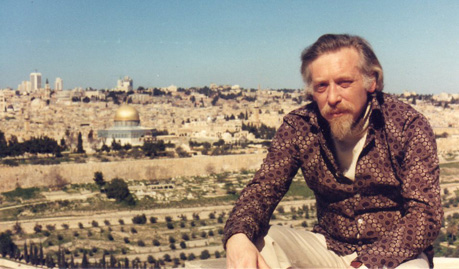

Roger-Patrice Pelat, at far left, in Rennes-le-Château in early 1981. His friend François Mitterrand stands just to his right, wearing a hat.

Once upon a time in France, there was a prominent financier named Roger-Patrice Pelat, whose grievous life story would come to parallel that of his British counterpart Robert Maxwell. Pelat was very close to François Mitterrand, France’s president from 1981 to 1995, whom he had known since the two were prisoners of the Nazis together. They visited Rennes-le-Château during Mitterrand’s successful presidential campaign of 1981, resulting in a well-traveled photograph of them standing inside Bérenger Saunière’s cluttered, rather tacky church. (The line between sinister trappings of the occult and mere bad taste is often thin.) Several years later, Pelat found himself at the center of a scandal when all sorts of improprieties in his dealings began to come to light. Mitterrand distanced himself hurriedly, as politicians invariably do in such situations, and Pelat died alone and unlamented in March of 1989 of a literal broken heart.

Pierre Plantard moved to appropriate Pelat’s legacy for his purposes almost before the body was cold. In his telling, Pelat had become Grand Master of the Priory of Sion after he himself had stepped down from that post in 1984. But the Americans — these had become Plantard’s new bogeymen, a replacement for the Jews who had filled that role in his youth — had gotten to Pelat in some undefined way and brought him down.

For a long time, the American dream has been to dominate our country for financial and economic motives. The Priory, very many of whose members are themselves major financiers, politicians, directors of major insurance companies, magistrates, and so on, is the ideal CIRCUIT for various courses of action. That’s how Patrice Pelat was ensnared, and — I can say it here — I retain for him the most profound affection in spite of everything that has happened.

All would have been fine if Plantard had contented himself with spinning such fables exclusively for the all but nonexistent members of his largely imaginary organization. But on September 28, 1993, someone who called himself René-Roger Dagobert — yes, really — sent a number of documents to the court of law that was still investigating the dealings of the deceased Pelat. One of these, on the letterhead of the Priory of Sion, referred to Pelat as “our former Grand Master, who was always very much a man in the background, perfectly honest and just, who fell beneath the blows of various American initiates.”

In response, Judge Thierry Jean-Pierre ordered the police to search Plantard’s house and ordered the man himself to appear before his court, possibly because he suspected that the Priory of Sion may have been helping Pelat to launder money. This was a laughable notion on the face of it; Plantard’s operation was nowhere near that sophisticated, as must have been made all too clear by the documents that were seized. Nevertheless, Judge Jean-Pierre soon had Plantard under oath in his courtroom, and seems to have enjoyed making him squirm. There are conflicting reports about exactly how far he pushed him; transcripts of the proceedings remain sealed. Some reports have it that Plantard admitted only that he had lied about Roger-Patrice Pelat having been affiliated with the Priory of Sion, while others say that he confessed to all of the fraud, stretching back at least to 1956. Either way, Judge Jean-Pierre clearly put the fear of God into Pierre Plantard. For Plantard disappeared completely from public life after he went home from court for the last time on November 23, 1993; I might write now that he finally shuttered the Priory of Sion for good, if only there had actually existed much of anything to shutter. He died on February 3, 2000, just short of his 80th birthday.

The symmetry of the young and the old Pierre Plantard seems better suited to a novel than to real life. From first to last, he was founding grandiosely titled organizations in that aforementioned liminal space between wish-fulfillment and fraud, imagining thousands of members who didn’t exist, preaching his reactionary, nationalist politics in whatever form was deemed most acceptable at the time. He got his comeuppance at the hands of the Nazi occupiers of France during the 1940s and a French court of the 1990s for the same reason: for annoying them by spouting an endless stream of stupid, petty, self-aggrandizing lies instead of doing something better with his time.

Pierre Plantard was the embodiment of hyperreality, living his entire life in a virtual world of his own making. And he was the embodiment of another quality that hardcore hyperrealists often manifest: a complete inability to evolve. He was, whatever else he might have been, a perpetual adolescent. How strange to think that the mystery and the industry of Rennes-le-Château — the novels and the games and the movies and the tours and the earnest students of the lore who are poring over their sacred geometries even as I write these words — all came to exist because one emotionally stunted man decided to make himself the king he wanted to be as a replacement for a reality he refused to accept. In its way, his story is even more improbable than the mythology he spawned.

There is much, much more that I could write about Rennes-le-Château, a sticky topic that can ensnare the skeptic almost as easily as it can the true believer. Every answer just leads you to five more questions if you let it.

But we shouldn’t let it. Once we cut through all of the tangential connections and secret codes and other nonsense, we find that the underlying truth is disarmingly straightforward. At the turn of the twentieth century, a corrupt French priest remade his church and his village into a lavish if tacky monument to his own eccentric tastes. In the 1950s, a hotelier harnessed the local legend of hidden treasure which the priest had left behind to drive business his way. Then a few other bored Frenchmen embellished the story for aggrandizement and amusement. Then Henry Lincoln heard the tale they were spouting and added some extravagant touches of his own to the story, because he wanted it to make sense so very badly. And then Dan Brown came along to mash it down and make it suitable for mass consumption, turning a chicken into a chicken nugget.

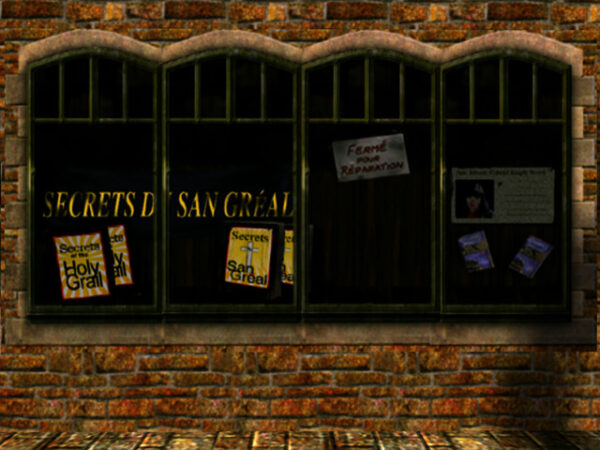

The peak of the Da Vinci Code mania is well past now, but the fantasies of Plantard, Lincoln, and their friends still echo through popular culture — not least the culture of gaming, which has long since become as mainstream as any other form of entertainment. Gabriel Knight 3, the game which started us on this journey, is just the tip of the iceberg. We will surely be encountering this mythology again as we proceed on this larger journey of ours through the history of gaming.

But at the same time that it has been an influence on games, the mystery of Rennes-le-Château is itself a sort of game — an “infinite game” in the words of Mariano Tomatis, one whose playing board is the whole world. Anyone who claims to have solved the mystery and thus won the game is immediately perceived as a threat by a community of players who have made it their hobby, their social outlet, in some cases even their identity: “The game of Rennes-le-Château continues to provide amusement only if there is an underlying mystery, an unsolved puzzle to be explored. Any contribution academically sound is immediately rejected by the large community of players, because every demystifying statement closes at least one of the possible extensions of the game, thus threatening the very purpose of the infinite game, which is to continue indefinitely.”

Every gamer knows the feeling of wanting to keep a good game going. But the wise ones also maintain the barrier between the game and real life. For to do otherwise is to risk madness, as the lives of Plantard, Lincoln et al. so painfully demonstrate.

Sources: The books Holy Blood, Holy Grail and The Messianic Legacy by Michael Baigent, Richard Leigh, and Henry Lincoln; Bloodline of the Holy Grail: The Hidden Lineage of Jesus Revealed by Laurence Gardner; The Treasure of Rennes-le-Château: A Mystery Solved by Bill Putnam and John Edwin Wood; The Holy Grail: The History of a Legend by Richard Barber; Invented Knowledge: False History, Fake Science and Pseudo-religions by Ronald H. Fritze; The Tomb of God: The Body of Jesus and the Solution to a 2,000-Year-Old Mystery by Richard Andrews and Paul Schellenberger; Rennes-le-Château et l’enigme de l’or maudit by Jean Markale; Le Trésor Maudit de Rennes-le-Château by Gérard de Sède; The Holy Place: Saunière and the Decoding of the Mystery of Rennes-le-Château by Henry Lincoln; Key to the Sacred Pattern: The Untold Story of Rennes-le-Château by Henry Lincoln; Les Templiers sont parmi nous, ou, L’Enigme de Gisors by Gérard de Sède; Les Mérovingiens à Rennes-le-Château. Mythes ou Réalités. Réponse à Messieurs: Plantard, Lincoln, Vazart & Cie by Richard Bordes; Lives of the Popes: The Pontiffs from St. Peter to Benedict XVI by Richard P. McBrien; Foucault’s Pendulum by Umberto Eco; The Da Vinci Code by Dan Brown; Are We Idiots?: The Simulacra of Jean Baudrillard by Boris Kriger; Simulations by Jean Baudrillard; Mythologie du Trésor de Rennes by René Descadeillas; Rennes-le-Château: Autopsie d’un Mythe by Jean-Jacques Bedu; Bérenger Saunière Curé à Rennes-le-Château by Abbé Bruno de Monts; Rennes-le-Château: Le Dossier, les Impostures, les Phantasmes, les Hypothèses by Gérard de Sède.

Skeptical Inquirer of November/December 2004; Politica Hermetica 10.

As should be made clear by the links sprinkled through the article, I owe a special debt to the material collected by Paul Smith on his website Priory of Sion.

The Mystery of Rennes-le-Château, Part 4: Non-Fiction Meets Fiction

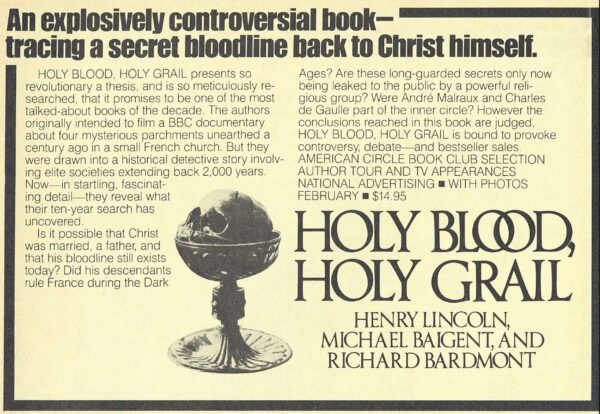

Even the authorship of books about Rennes-le-Château is unnecessarily complicated. Richard Leigh almost adopted the pen name of “Richard Bardmont,” perhaps to keep his work in alternative history separate from the “serious” novels he still dreamed of writing. At the last minute, however, he changed his mind and allowed the book to be published under his real name. Just as well; the novels would never emerge.

The Holy Blood and the Holy Grail was published by Jonathan Cape in Britain on January 18, 1982. Delacorte released an American edition five weeks later, under the punchier title of simply Holy Blood, Holy Grail. Sales were strong right out of the gate, and in time the book grew into something of a sensation on both sides of the Atlantic.