By 1999, Interplay had begun crediting its internally developed CRPGs to “Black Isle Studios,” a distinction that represented very little difference, given that Black Isle shared office space and personnel with its parent publisher. Note the careful choice of words on the box above, to call Black Isle the “producers” — not the developers — of Baldur’s Gate.

My power fantasy when playing a role-playing game is to confront a villain, explain point by point why his master plan is flawed, and then get him to admit that he hadn’t thought things through as carefully as I had, and ask me what I think he should do. Conversation-based player characters can have their bad-ass moments just as much as someone wielding a gun…

— Chris Avellone

Planescape: Torment is the damnedest game. Its list of failings is longer than that of many a game that I’ve simply written off as bad, full stop, and moved on from without a second thought. The pacing is glacial for long stretches; the interface is fussy and clunky; the combat is both irritating and utterly superfluous to the game’s design goals. Even much of the writing, by far the most celebrated aspect of Planescape: Torment, tends to seem proportionally less profound and more banal as one becomes farther removed in age and life experience from the twenty-somethings who first put all of these words — so many, many words, a reported 800,000 of them in all — onto our monitor screens more than a quarter-century ago. In so very many ways, Planescape: Torment is an undisciplined hot mess.

And yet it’s a hot mess that refuses to be dismissed lightly. For Planescape: Torment is also a vanishingly rare thing in the realm of game narratives: a genuine interactive tragedy, in the sense that Aeschylus, Shakespeare, and Nietzsche understood that word. That it recognizes the tragic side of life while inhabiting a genre whose whole point in the eyes of most of its fans is the triumphalism of going from a weakling to a demigod is incredibly brave and subversive. That it did this in 1999, when the games industry was smack dab in the middle of one of the most homogenized, risk-averse periods in its history, is as inexplicable as it is astonishing.

Clearly we have much to unpack…

TSR sold surprisingly few copies of the original Planescape campaign setting, even at the stupidly cheap price of just $30. It goes for $250 among collectors today.

Whatever else it is, Planescape: Torment is first and foremost a licensed adaptation of Dungeons & Dragons, a part of Interplay’s attempt to revive that storied tabletop game’s digital fortunes amidst the collapse of its parent company TSR and TSR’s acquisition by Wizards of the Coast. This particular computer game was no mere branding exercise, as was the case with some of the games that came out in Dungeons & Dragons trade dress during the 1990s. On the contrary, Planescape: Torment was deeply, intimately informed by the creative work that took place in TSR’s Wisconsin headquarters earlier in the decade. The extent to which this is the case is often glossed over or forgotten entirely when retrospectives of it are written today. So, let me make it crystal clear here right from the start: love it or hate it, a huge chunk of what makes Planescape: Torment so unique and memorable originated not in Interplay’s Southern California offices but in the nation’s dairy-cow heartland.

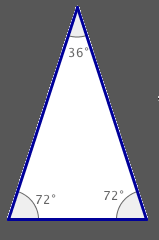

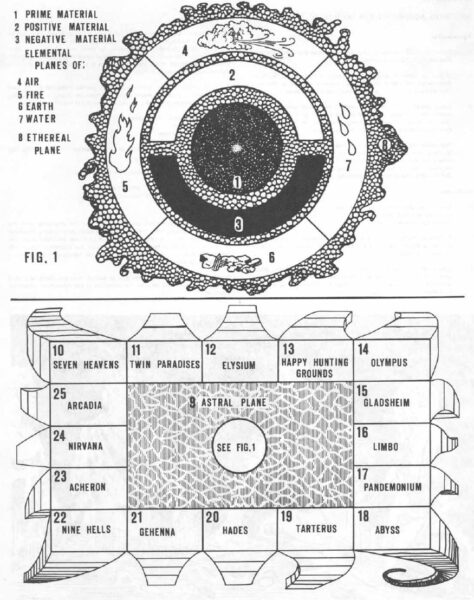

It will presumably surprise no one when I write that the “planes” of Planescape are alternate planes of existence, separate from the “Prime Material Plane” in which most Dungeons & Dragons campaigns take place. They were introduced by Gary Gygax already in the late 1970s, in the iconic first editions of the Player’s Handbook and Dungeon Master’s Guide. His cosmology was a melange of a little bit of everything: quantum physics, Renaissance-era alchemy and astronomy, the holy texts of various religions, New Age philosophy, Dante and Milton, twentieth-century fantasy and horror novels.

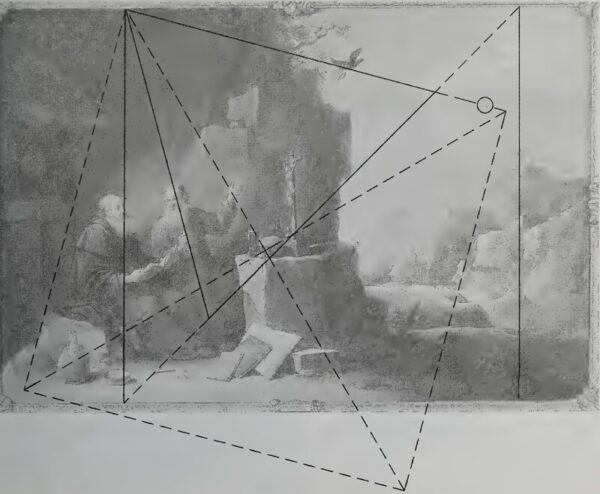

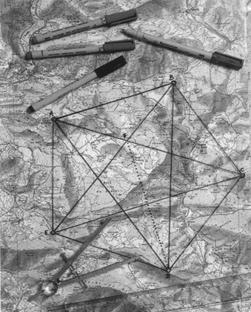

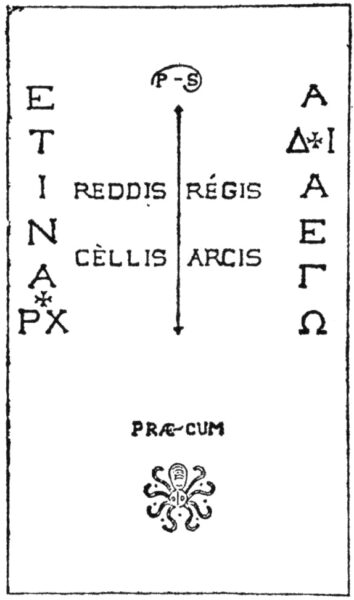

Gary Gygax’s vision of the Dungeons & Dragons multiverse, as found in an appendix to the Player’s Handbook.

The Prime Material Plane stands at the center of it all, much like the Earth was once imagined to stand at the center of our universe. It is surrounded by the Inner Planes that embody the physical building blocks of existence, which are in turn enclosed by the Outer Planes that embody the metaphysical alignments, those nine possible combinations of Lawful, Neutral, and Chaotic, Good, Neutral, and Evil.

Gygax was always prepared to muse and to elaborate, on this subject as on so many others. Small wonder that these alleged rule books — surely the most chatty and discursive books of rules ever written, the heart of the Gospel of Saint Gary — were perused and pored over endlessly by his young fans, many of whom were discovering for the first time the countless disparate philosophical ideas he threw into the pot. Gygax wasn’t an overly sophisticated thinker in most contexts, but he was a prolific one, who always had ten more ideas waiting in the wings if you didn’t respond to his last one.

For those of you who haven’t really thought about it, the so-called planes are your ticket to creativity, and I mean that with a capital C! Everything can be absolutely different, save for those common denominators necessary to the existence of the player characters coming to the plane. Movement and scale can be different; so can combat and morale. Creatures can have more or different attributes. As long as the player characters can somehow relate to it, then it will work…

I have recommended that Boot Hill and Gamma World be used in campaigns. There is also Metamorphosis Alpha, Tractics, and all sorts of other offerings which can be converted to man-to-man role-playing scenarios. While as of this writing there are no commercially available “other planes” modules, I am certain that there will be soon — it is simply too big an opportunity to pass up, and the need is great.

This was a remarkably prescient description of where planar travel in Dungeons & Dragons would go — eventually. For a long time after The Dungeon Master’s Guide appeared in 1979, the other planes of existence were one of those Dungeons & Dragons concepts that were kind of floating out there in the ether (or was it the Ethereal Plane?) without anyone knowing quite what to do with it. Apart from some sketchy guidelines for “ethereal” and “astral” travel and combat, the rule books remained sadly short on specifics. The 1980 adventure module Queen of the Demonweb Pits, designed by Gygax and David C. Sutherland III, did take players on a jaunt to the Abyssal Plane, but that was a one-shot thing. For all that Gygax had claimed, in his indelibly Gygaxian way, that “the need is great,” as if an understanding of the planes of Dungeons & Dragons was an urgent matter of national security, neither he nor anyone else seemed to be in all that much of a hurry to address said need. The occasional slightly dodgy article in Dragon magazine aside, Dungeons & Dragons remained in practice a very Prime Material sort of game.

This situation first started to change in the latter half of the 1980s. By then, Gygax was on his way out of TSR and the Dungeons & Dragons craze of the decade’s beginning had just about run its course. Necessity was forcing TSR to adjust its business model, from selling the core Dungeons & Dragons game to new players to selling an ever expanding lineup of rules extensions, campaign settings, and pre-crafted adventures to its surviving base of loyal, hardcore players. The planes seemed like fresh fodder for all three types of product.

A longtime TSR stalwart named Jeff Grubb took the first concerted swing at it. In 1987, the company published his Manual of the Planes, the latest in its ever-growing line of new Dungeons & Dragons hardbacks for the hardcore. Grubb took it as his mission to give Gygax’s abstract cosmology a grounding in lived experience, to explain what it would actually be like to visit these places. Unfortunately, he prioritized alchemical realism over playability, winding up with a collection of environments that were as brutally, hilariously inhospitable to even high-level characters as one might imagine a plane of nothing but fire or air to be. “The book was fascinating reading,” notes Dori Hein, an ordinary Dungeons & Dragons fan at the time whom we will meet again in another role. “I loved the mythology and the grand majesty of all the planes, but — try as I might — I couldn’t create an adventure without killing all my players.” In the same vein, Sean Gandert of the website Exposition Break writes that “the planes’ complete resistance to being remotely welcoming is both what makes them fascinating to read about and also makes the book completely skippable and largely irrelevant. It is a work of cosmology and mythology, not a plan for where to send adventurers.”

The Manual of the Planes went out of print in fairly short order anyway, after TSR commenced rolling out a second edition of Advanced Dungeons & Dragons in 1989. The cynical interpretation of this initiative is that it was the best way TSR had yet devised for continuing to extract money from its static pool of players, by forcing them to buy the game they loved all over again in its most basic form in order to stay up to date with the times. The idealistic one is that it let TSR clean up a game system that had grown ever more baggily shambolic over the past decade of supplement after supplement. In reality, the second edition was doubtless a little of both, being seen one way by the people surrounding Lorraine Williams in her executive suite and another by the creative types in the cubicles.

That said, and looking back on what I’ve written about the later period of TSR’s history elsewhere on this site, I fear I may have overemphasized the cynicism at the expense of the idealism. There’s no question that the company fell prey to a set of perverse incentives during the last decade of its existence, many of them born out of idiosyncrasies in its longstanding distribution contract with the book publisher Random House. By the early 1990s, this had resulted in an absolute hailstorm of product brought down upon the heads of Dungeons & Dragons fans, more than all but the most well-heeled among them could possibly afford to buy, much less find the time to bring to the tabletop. But there’s likewise no question that these products were made with enormous love and care by the creative staff. This was the heyday of the alternative campaign setting, when TSR offered up the chance to leave conventional high fantasy behind and play Dungeons & Dragons in post-apocalyptic worlds, in the lands of the Arabian Nights, in Gothic castles, on the high seas, even in outer space. So what if there was no way to justify so many settings’ existence as commercial products, if each successive one sold worse than the one before, especially after the collectible-card game Magic: The Gathering arrived on the scene to tempt away large chunks of TSR’s remaining customer base. Circumstance had granted the people making these settings a rare reprieve from the harsh logic of supply and demand, and they didn’t let it go to waste.

Given this cavalcade of rich but disconnected settings, it was perhaps inevitable that TSR would look once again to the planar multiverse as a way of unifying a crazily diverse set of experiences bearing the name of Dungeons & Dragons. A boxed set reviving Gygax’s multiverse could bring them all together conceptually, could even provide a set of practical mechanisms to allow the same set of player characters to jump from setting to setting, just like Saint Gary had first proposed all those years ago.

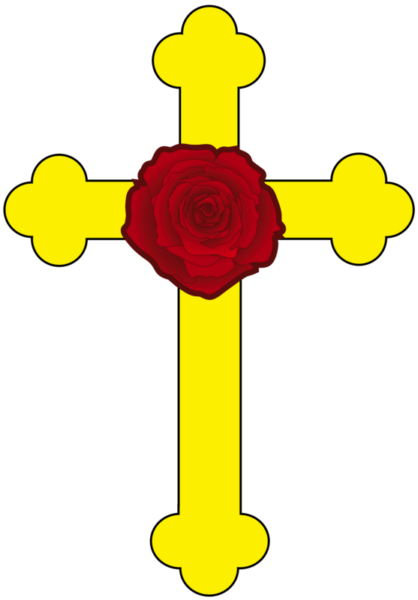

In addition to being a unifying force for Dungeons & Dragons itself, Planescape was quite explicitly intended as a response to Vampire: The Masquerade, an RPG from an upstart company known as White Wolf Games that flipped everything you thought you knew about the tabletop scene on its head. Whereas Dungeons & Dragons, even in its supposedly cleaned-up second-edition incarnation, was infamous for the complexity of its rules, Vampire gave you just enough of them to provide a runway for storytelling. That fact, combined with its subject matter, attracted fresh blood to the hobby: Goth rockers and theater kids and Anne Rice readers, among them a surprising number of girls and women. At the end of the day, Vampire may have been full of as many clichés as vanilla Dungeons & Dragons — clichés which are all the more evident from the perspective of today, after several more decades’ worth of vampire fictions — but they had the advantage of feeling relatively fresh from the perspective of the early 1990s. Indeed, this was the only period in the entire history of tabletop RPGs when it seemed possible that a different game might just unseat Dungeons & Dragons from its throne as the undisputed standard bearer for the hobby. Vampire’s rise made TSR nervous enough to want to make something of its own that was grittier, messier, and a bit less morally straightforward, less of a single-unit wargame and more of a vehicle for improvisational drama. It was no accident that the Dungeons & Dragons brand appeared on the eventual Planescape box only as a small logo tucked away in the corner.

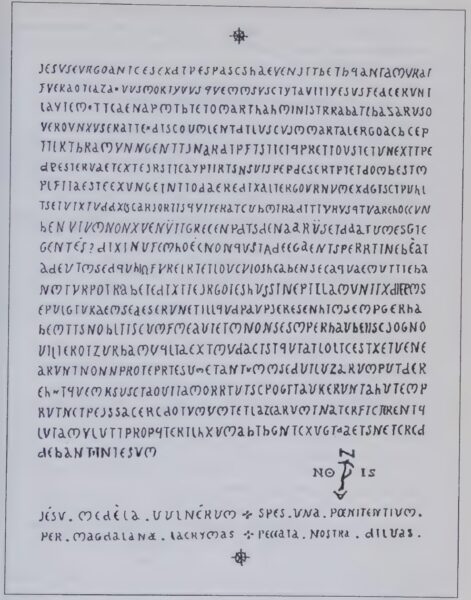

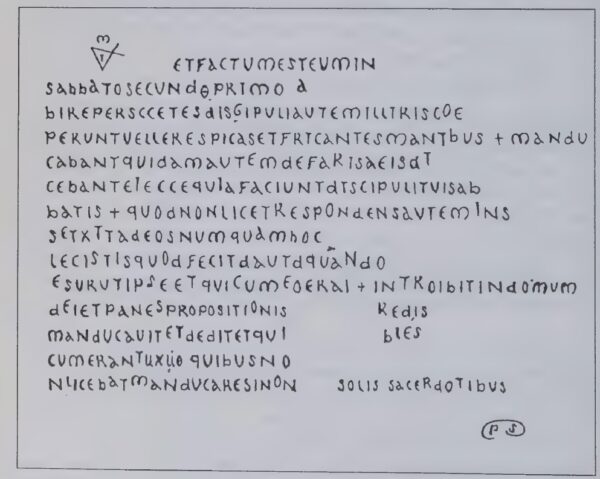

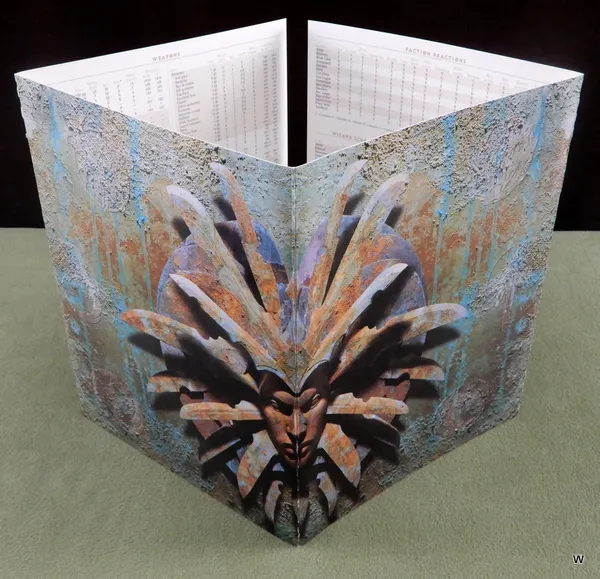

David “Zeb” Cook, another veteran TSR hand, was made lead designer on Planescape. Dori Hein, who had by now graduated from merely playing TSR’s games to working there, became the producer, overseeing a team of artists, cartographers, writers, editors, and play-testers. They pulled out all the stops for a set that wound up consisting of no fewer than four separate books, printed on thick and creamy Pentair Suede paper, and four sturdy cardboard posters. The luscious package was capped off by the most intimidating Dungeon Master’s screen ever devised. One of TSR’s purchasing managers had a sign hanging in his office: “The pleasure of a product well done lingers far longer than the excitement of a bargain.” As it happened, though, the Planescape set was both: it sold for just $30, a ridiculously cheap price for such a luxurious product even by the standards of the 1990s. It may have been no more than a break-even price, or not even that, settled upon in the hope that Planescape would revive TSR’s flagging fortunes in the longer run by spawning a whole new ecosystem of supplements, adventure modules, and tie-in novels.

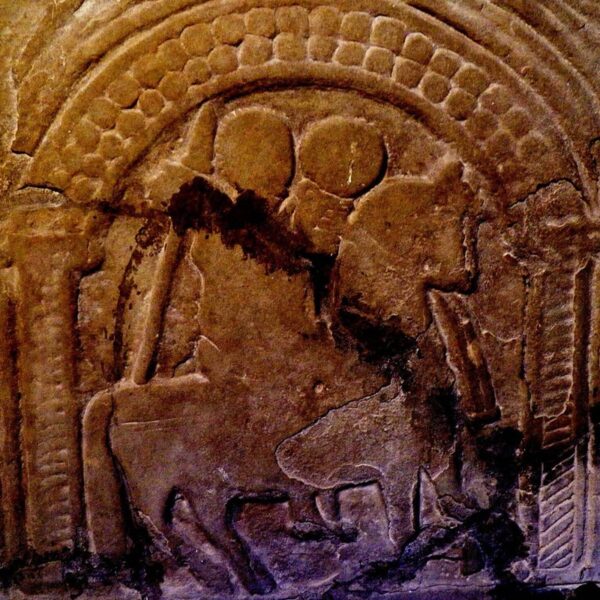

The Planescape Dungeon Master’s screen. Sitting down around a table that had this thing on top of it, you knew you were in for a mind-bending journey that was more Salvador Dali than Boris Vallejo.

Zeb Cook’s first and most important stroke of brilliance was to give his vision of the planes a hub around which to operate. This was Sigil, a “city of doors” giving unto the many other planes, a meeting ground and melting pot for the entire multiverse. Ranging far afield from the pulpy fantasy of Jack Vance and the stately epic fantasy of J.R.R. Tolkien, the two most obvious inspirations for traditionalist Dungeons & Dragons, Cook read postmodern, experimental novels by Milorad Pavić and Italo Calvino for inspiration. Sigil, a city of angles as well as doors, became a physical embodiment of their twisted, self-referential approach to narrative: “Get it right out front: Sigil’s an impossible place, a city built on the inside of a tire that hovers over the top of a gods-know-how-tall spike, which rises from a universe shaped like a giant pancake.”

Sigil is not so refined a place as some might expect for the central hub of the multiverse, but that’s fair enough, given that Cook’s multiverse itself isn’t all that refined. The dominant note of the city, even outside of its plentiful and teeming slum districts, is what we might call dirty Victoriana, of a piece with 21st-century novels like Sarah Waters’s Fingersmith and Michel Faber’s The Crimson Petal and the White, which read like genuine Victorian “sensation novels” with the added ability to state outright the disreputable things that their ancestors could only imply. The dialect of Sigil’s streets is vintage Cockney slang in spirit if not always in the details of the vocabulary, with the same uncanny talent for being roundabout and penetrating at the same time: “berks” and “cutters” are no-account people; “the dark” is knowledge; “jink” is money; one’s “kip” is one’s (usually humble) abode; one’s “bone-box” is one’s mouth; to “pike off” means to scram. In keeping with all the best slang, these are words that you know when you hear them even if you don’t actually know them, if you take my meaning. As we’ve already seen, the books in the Planescape box that describe Sigil are themselves written in this vernacular: “Welcome, addle-cove!” begins the Planescape “Player’s Guide.” This is not the Dungeons & Dragons of 1980s school cafeterias; both dungeons and dragons are mostly missing from Sigil, replaced by far stranger things.

Instead of embracing the simplistic good-versus-evil dynamics of traditional Dungeons & Dragons, Sigil is divided into fifteen factions whose adherents are aptly described as “philosophers with clubs,” from the chivalric and vaguely fascistic Godsmen to the nihilistic Bleak Cabal, who preach that “once a sod believes it all means nothing, it all starts to make sense.” Ruling over the whole place, ensuring that no single faction gets too powerful, is the Lady of Pain, who can flay the skin from a poor berk just by looking at him. The overriding theme is that ideas and beliefs matter, are literally woven right into the substance of the multiverse, and can kill or save you just as indubitably as the physical elements of earth, air, water, and fire. Sigil is the ultimate argument for the value of a good humanities education.

If there’s a weakness to the Planescape set, it’s that it spends so much space on Sigil that it doesn’t have enough left over for all those other planes of existence that were supposed to be the whole point of the endeavor. Instead of offering a wide-open set of possibilities, it can feel paradoxically claustrophobic, like the crowded filthy alleyways of the city itself.

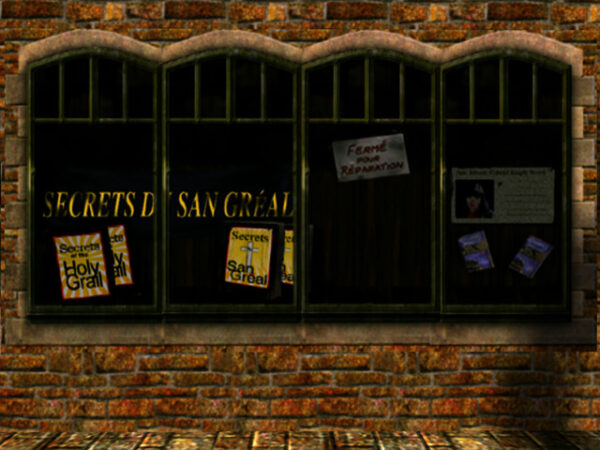

Nevertheless, the Planescape box was endlessly audacious and imaginative, as different from the typical Dungeons & Dragons experience as anyone could have asked for. But, whether despite or because of these factors, it was not a commercial success. It sold just 60,000 copies over the five years after its release in April of 1994, a thin foundation indeed on which to build a new gaming ecosystem. The add-on lines, which offered opportunities to flesh out the multiverse in some of the ways that the boxed set had failed to do, continued in fits and starts for longer than you might expect — another tribute to the topsy-turvy economic incentives that marked TSR at the time — but petered out for good after the failing company was acquired in 1997 by its own worst enemy Wizards of the Coast, the maker of Magic: The Gathering. The Vampire craze did eventually fade, but its travails had nothing to do with TSR’s efforts. It was rather something to do with the ever-shifting winds of pop culture, which soon replaced teenagers’ Cure and Alice in Chains records with the Backstreet Boys and Britney Spears.

So, had things turned out just a little bit differently, Planescape would be fondly remembered today only by a few tabletop nostalgics as a piece of work of unusual vision that never got its due. Instead, though, it went on to become a landmark of another stripe, in a different medium entirely.

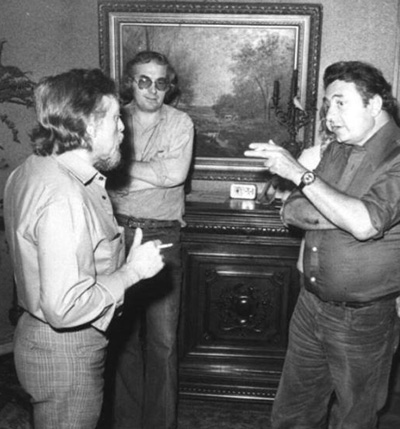

TSR had begun dangling the prospect of a Planescape computer game before publishers even before the boxed set shipped; such a thing was regarded as a potentially vital part of the product line that had become the latest Great White Hope for reversing the company’s accelerating downward spiral. Interplay rose to the bait, signing the contract before 1994 was out. In fact, it went so far as to hire Zeb Cook himself, who had concluded that “it didn’t seem like there was going to be a long-term future” for him on the tabletop. But the initial rush of enthusiasm petered out; Cook soon departed again, leaving the digital future of Planescape in limbo. And yet the idea of a Planescape computer game never completely went away. Late in 1995, when an inexperienced youngster named Chris Avellone came to Interplay for a job interview, he was asked how he would design such a game. He brainstormed on the spur of the moment the genesis of the eventual Planescape: Torment: “I would start it after the death screen. What happens after the main character dies?”

Avellone had grown up in the 1980s playing Dungeons & Dragons with his friends in his hometown of Alexandria, Virginia. By the time he went off to university, he had two possible futures in mind for himself: either to become a comic-book author or to become a tabletop-RPG designer. Neither field could exactly be called a growth industry at the time, but he made the best of it. On the gaming side, he sent a long string of submissions not only to TSR but to Steve Jackson Games, the maker of GURPS (“Generic Universal Role-Playing System”), and to Hero Games, the maker of the superhero RPG Champions. Initially, he met only with rejection; his closest brush with his heroes at TSR came when Monte Cook, yet another well-known name among the Dungeons & Dragons cognoscenti, took time out to plead with him personally to just stop submitting stuff already.

But Avellone persevered, and finally began to see some of his gaming material accepted and published. Yet he still had to confront the reality that the life of a freelance tabletop-RPG writer and designer left a little something to be desired: specifically, money. Most of the royalty checks that came in from the beleaguered companies that published his work — the Magic: The Gathering craze was in full flight, pushing RPGs to the margins of the same shops where they had once been the dominant attraction — had just two digits before the decimal point. Avellone, who had by now graduated from the College of William & Mary with a Bachelor’s in English, was still at loose ends when it came to the all-important question of how he was going to put food on his table as a responsible adult. Everyone told him that the wise choice was to acquire a teaching certificate, but all he wanted to do was find a way to make games full-time.

Oddly enough, he had never seriously thought about becoming a computer-game developer, despite having played his fair share of The Bard’s Tale and its ilk as a teenager. It took Steve Peterson, his editor at Hero Games, to point out to him how different the economics of that adjacent industry were. Peterson pulled some strings to secure Avellone an interview at Interplay Productions, for something which he was unlikely to find anytime soon in the moribund tabletop field: an honest-to-goodness full-time job. He got the job, and started at Interplay in 1995 as a junior designer.

Although he had been asked about Planescape at his interview, he wasn’t allowed to spend all or even most of his time on that perpetually incipient project after he was hired. As the low man on the totem pole, he was shuffled around from team to team, plugging gaps in the design plumbing wherever needed. He worked on the infamous Descent to Undermountain, the nadir of digital Dungeons & Dragons during the 1990s; on Conquest of the New World, Interplay’s workmanlike take on the same theme as MicroProse’s Colonization; and on Starfleet Academy, an attempt to do TIE Fighter in the Star Trek universe that never felt true to its source material, in that it had the usually stately likes of the USS Enterprise dog-fighting in space as if it was, well, a TIE Fighter.

But betwixt and between all of the above, Avellone sat in his cubicle writing his Planescape game. He did so as much for his own peace of mind — because he needed something that he could feel passionate about — as out of any real conviction that the game would ever get made. The winds blowing against it seemed positively gale-force. For by now it was clear that Planescape would not prove the savior of Dungeons & Dragons on the tabletop. The TSR boxed set had barely sold at all, even as, commercially speaking, CRPGs were scarcely in better shape than their tabletop counterparts in the mid-1990s. Interplay already had one game in the stagnant genre under active development, in the form of Fallout. That looked like one too many in the eyes of most of the bean-counters.

Slowly, however, the murky picture started to take on some brighter shades. Just as 1996 was turning into 1997, Blizzard Entertainment unleashed a game called Diablo. Debate raged on Usenet and the young World Wide Web over whether Diablo, with its procedurally generated dungeons and its emphasis on constant action over a fleshed-out narrative, was a “real” CRPG at all or just a watered-down pretender. What was undeniable, though, was that it sold like crazy, raising the question of whether more complex, textured CRPGs might be ripe for a revival as well. Meanwhile a bankrupt TSR was by now in the process of being acquired by Wizards of the Coast. Wizards was saying all the right things about resurrecting Dungeons & Dragons for this new era, and its Magic revenues left it primed to spend more money on that endeavor than TSR could ever have dreamed of even before the collectible-card-game craze had cleaned its clock.

In what had seemed at the time like a triumph of hope over recent experience, earlier in 1996 the Interplay producer Feargus Urquhart had enlisted a fledgling Canadian studio known as Bioware to make yet another Dungeons & Dragons CRPG for Interplay to publish. In what had seemed a minor stipulation of the deal at the time the contract between Bioware and Interplay was signed, the former had agreed to allow the latter full access to the “Infinity Engine” it planned to use to build and run the game. By the spring of 1997, those arrangements were looking like they might prove more important, both to Interplay and to the whole industry, than anyone had anticipated at the time.

The Bioware game, for which Feargus Urquhart himself had come up with the name of Baldur’s Gate, was pitched straight down the middle, being about as traditionalist as a Dungeons & Dragons CRPG could get. It took place in the game’s more or less default setting of the Forgotten Realms, a world that took every cliché of epic fantasy and ran with it. Obviously this was the safest choice for a revival. But, in the wake of Diablo’s smashing success, Urquhart thought there might be space to throw a curve ball as well to serve as a more outré companion piece. He asked Chris Avellone to condense his massive Planescape notebook into a proper project proposal.

The proposal reached the desk of Brian Fargo, the founder and head of Interplay, at the end of June 1997. “There was always a balance in running a studio between being commercial, being creative, and having your creative people be happy, and having them do things that are interesting to them,” says Fargo. “I was willing to take creative risks from time to time in order to allow these things to happen. Planescape: Torment was clearly one of those. When it came across my desk, I said, ‘Well, that’s as high-concept as you can get.’ But I thought that RPG players would like it, and I loved the writing and sensibility they put into the document. That got me interested in doing it.” It didn’t hurt, of course, that it ought to be possible to do the game fairly cheaply, since it would be able to re-purpose Bioware’s Infinity Engine.

The heart of the Planescape: Torment team was lead designer Chris Avellone, lead programmer Daniel Spitzley, the artists Tim Donley and Aaron Meyers, and producer Guido Henkel (a recent German immigrant who had helped to make the CRPGs Blade of Destiny and Star Trail in his native land). The project was not a major priority at Interplay for the majority of its existence, even after Fallout came out late in 1997 and sold pretty well, thus demonstrating that there truly was a reasonably sized market for more complex, conversation-heavy CRPGs than Diablo, provided that they were done well. In fact, in an ironic sort of way, Fallout’s success was to Planescape: Torment’s detriment. Eager to capitalize on the first non-sequel, non-licensed Interplay release to garner an appreciable buzz among hardcore gamers since Descent in 1995, Brian Fargo decreed that a Fallout 2 had to come out within a year of its predecessor. As a result, Planescape: Torment was all but suspended for much of 1998, while most of the team, Avellone included, moved over to pitch in on the Fallout sequel.

Although they did get it done on time, the biggest CRPG success story of the Christmas of 1998 proved not to be Fallout 2 but rather Baldur’s Gate, which introduced digital Dungeons & Dragons to a whole new generation of gamers who were more familiar with Diablo than Pool of Radiance. Just like that, Dungeons & Dragons on the computer became a hot topic again. With a Baldur’s Gate II not slated for release until 2000, Planescape: Torment was left to carry the Infinity Engine water in the interim. That brought a fresh influx of energy and resources to the project, and these were sufficient to get the game finished just in time for the Christmas of 1999.

It entered stores accompanied by stellar reviews whose fulsome praise felt only slightly obligatory in a Stockholm Syndrome sort of way. (Many reviewers did point out the “tome of text” to be read in tones that suggested that they might not have found it as uniformly delightful as their five-star verdicts suggested.) Nonetheless, as a computer game based on a tabletop setting that had been discontinued more than eighteen months earlier, Planescape: Torment was in a strange position for a licensed product. Even against weak competition — the only other high-profile CRPG release that holiday season was the abjectly terrible Ultima IX — the game’s sales were a shadow of the figures put up by Baldur’s Gate. In an ironic way, the lack of ringing commercial success may have been a positive for Planescape: Torment’s legacy, confirming its modern status as a cult classic that’s for the CRPG sophisticates rather than the hoi polloi.

As for my opinion… well, I’m afraid I’m going to need another article to properly interrogate the reputation and reality of the game. For, whether one happens to be sitting with the prosecution or the defense or just back in the jury box trying to sort through it all, the case of Planescape: Torment is a complicated one.

Sources: The books Slaying the Dragon: A Secret History of Dungeons & Dragons by Ben Riggs, Beneath a Starless Sky: Pillars of Eternity and the Infinity Engine Era of RPGs by David L. Craddock, and Designers & Dragons: A History of the Roleplaying Game Industry volumes 1 (the 1970s) and 3 (the 1990s) by Shannon Appelcline; Dragon of March 1994, April 1994, May 1994, July 1994, and August 1994; Computer Gaming World of March 2000 and April 2000; the 2015 GamesTM special issue on “controversial” games; Retro Gamer 113. Plus the Advanced Dungeons & Dragons Player’s Handbook, Dungeon Master’s Guide, Manual of the Planes, and the Planescape boxed set. Plus the materials found in the Brian Fargo Collection in the archives of the Strong Museum of Play.

Online sources include Soren Johnson’s interview with Chris Avellone for his Designer’s Notes podcast, a Last Game Standing interview with Avellone, and Sean Gandert’s series of articles about the evolution of planar travel in Dungeons & Dragons for the website Exposition Break.

Where to Get It: Planescape: Torment is available as a digital purchase from GOG.com in an “enhanced edition.” Buying it also gives you access to the original version.