Born in northern California in 1953, William M. “Trip” Hawkins III was the perfect age to be captured by the tabletop experiential games that had begun to arrive in force by his teenage years. He experimented with the Avalon Hill wargames, but what really captured his imagination was Strat-o-Matic Football. A huge football fan, he loved the idea of guiding a team game by game through the drama of a full NFL season — loved it enough that he was willing to put up with all of the dice-rolling and math that were part of the process. Unfortunately, his friends were not so entranced. After taking a look at the closely printed manual and all of the complicated forms, they threw up their hands and asked Trip if he’d maybe like to just watch some TV instead. Here was born for Hawkins a lifelong antipathy toward the “boob tube,” a belief that such a passive, brain-numbing medium could and should be superseded by other, interactive forms of entertainment. Yet he had also run into the classic experiential gamer’s dilemma. To wring a dramatic experience out of Strat-o-Matic you had to spend far too much time fiddling with numbers and mundane details. Some people revel in that sort of thing, losing themselves in games as systems. Hawkins’s friends, however, wanted them to be lived experiences. Fiddling with the system only clouded the fictional context that really interested them, and made the whole thing feel far too much like schoolwork.

Then, in 1971, Hawkins saw his first computer, a DEC PDP-8. The answer to his dilemma seemed clear: he could run games on the computer, letting the machine handle all of the boring stuff. Being possessed of a strong entrepreneurial streak — he would start his first (unsuccessful) business venture before the age of 20, selling a Strat-o-Matic-inspired football game of his own design — he decided that his mission in life would be to start a company to make computer games. By this he imagined not the simple arcade games that would soon begin appearing in bars and shopping malls, but richer, deeper experiences in the spirit of the board games that had so equally enticed and frustrated him.

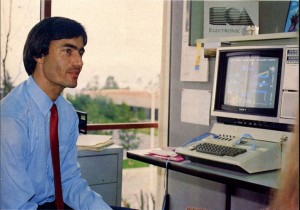

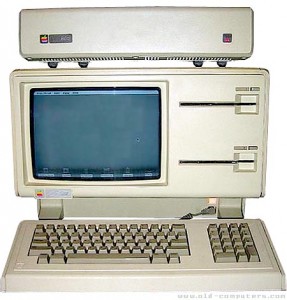

As I mentioned in my last article, Hawkins was possessed of some of the same qualities that marked the young Steve Jobs, including intense charisma and the associated reality distortion field that made him able to convince older and presumably wiser people to do highly improbable things. He thus became the first and (I assume) only person ever to graduate from Harvard with a degree in “Strategy and Applied Game Theory,” for which he combined social-science and computer-science courses. He thought what he learned would aid him both in the real world of business and the simulated worlds he hoped to create. He used his access to computers at Harvard to refine his ideas, continuing to tinker with what would always remain his biggest gaming love, football simulations. In 1975, the arrival of the microprocessor and the first kit microcomputers such as the Altair made him sit down and try to decide on a date when this new technology would make his dream of a home-computer-entertainment company viable. He claims to have decided then that 1982 would be the perfect moment. And indeed, 1982 would be the year that he would found Electronic Arts. If that all sounds a little bit too neat to be entirely believable, the fact still remains that the patience and dedication he showed in the face of considerable temptation to go down other paths are, as we shall see, amazing. As the next step in his master plan, he went off to Stanford for an MBA. And then came Apple, and a pivotal role in the Lisa project.

Hawkins was one of the beneficiaries of Apple’s IPO at the end of 1980; his first two-and-a-half years in the workforce made him a millionaire, free never to work again if he didn’t feel like it. With incentives like that, and a position as marketing director for one of the most prominent young companies in the country, it would be easy to forgive him for putting games in the category of childish things left behind. Yet he never forgot his dream through those years at Apple. Hawkins was the outlier amongst a management team not just uninterested in games but a little bit afraid of them as indicative of a product line less “serious” (read: useful for business) than IBM’s. Even whilst dutifully trying to ingratiate Apple with po-faced businessmen, Hawkins kept up with the thriving game scene on the Apple II. Witnessing the success of companies like Brøderbund and On-Line, he began to fret that the entertainment revolution was coming even sooner than he had anticipated, and that he was missing it. In January of 1982, he thus told his colleagues that he wanted to resign for the most preposterous of reasons: he wanted to start a game company. Hawkins at first acquiesced when they told him how foolish he was to walk away from a company like Apple, but a few months later he resigned again, and this time stuck to his guns.

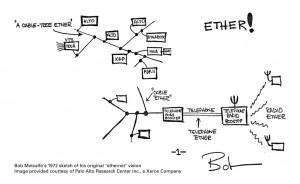

On May 28, 1982, Hawkins officially founded the venture he had been dreaming of for over ten years under the truly awful name of Amazin’ Software. He was just 28 years old. He had a small fortune of his own to inject into the company thanks to the Apple IPO, but he would need much, much more to launch on the lavish scale he envisioned. Fortunately, he had an established relationship with an investor named Don Valentine, head of Sequoia Capital, one of the most important sources of start-up funding in Silicon Valley. Valentine and Sequoia had already helped to fund Atari, Apple, and Shugart (developers of the floppy disk) among others. Now Valentine found Hawkins’s vision of a next-generation entertainment-software publisher compelling. He became more like a business partner than an investor, providing much more than money. After working out of his home for a few months, Hawkins set up shop inside Sequoia’s offices when he began to hire his first employees. As Valentine later wryly explained, he told Hawkins he had to leave only when Hawkins’s own people exceeded the number of Sequoia people in the building. Hawkins then moved his company to a spacious three-story building in San Mateo, California, where it would remain for the next fifteen years. To begin to fill the space, Hawkins put together a team made from ex-Apple people (like Joe Ybarra), ex-Xerox PARC people (like Tim Mott), ace advertising executives (like Bing Gordon, who would remain with the company for more than 25 years), and people from other games companies, from IBM, and from VisiCorp. Even Steve Wozniak agreed to sit on the board of directors.

But, you might ask, just what did all these people find so compelling about Hawkins’s vision? Well, he proposed a completely new approach to computer games — to the way that they were designed, programmed, marketed, and even played (or, more accurately, he wished to change who played them). As I’ve described in earlier articles on this blog, the computer-game industry was growing rapidly by 1982, and with the arrival of new, inexpensive yet capable platforms like the Commodore 64 was beginning to attract serious attention from people like Don Valentine as the potential next big thing to replace the increasingly moribund game consoles. Yet the industry had also only recently left the Ziploc era behind. Its products — full of garish cover art, typo-riddled manuals, bugs, and cryptic user interfaces — still bore an unmistakeable whiff of the dingy basements in which they were created. In short, computer games still felt almost as much hobby as business. Hawkins proposed to change that, by selling games tailored to ordinary consumers, games with the same professional polish found in the book and music industries. He felt the best way to do that was not to devalue the creative component of games and sell them as simply toys or product, as Atari had been doing for years with its game cartridges. Indeed, Atari’s current struggles illustrated that this was exactly the wrong approach. No, the best way to sell games was to celebrate them as art, made by real artists. Thus the eventual title of his company, arrived at after a long day of brainstorming in October of 1982: Electronic Arts. It was simple, classy, elegant, everything Hawkins wanted his games to be, in contrast to the scruffy products of the first-generation companies with whom he’d be competing.

Hawkins spent considerable time refining his ideas for the new “consumer software” he wanted EA to produce. Eventually he arrived at a formula: EA’s games must be “simple, hot, and deep.”

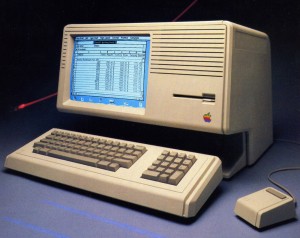

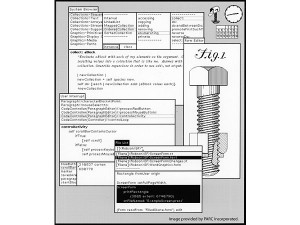

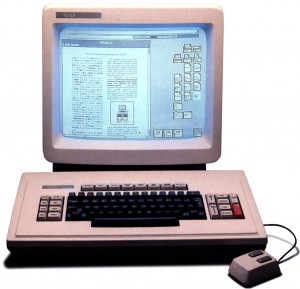

Many of his ideas about simplicity came from the Lisa project. Like the Lisa’s desktop, the interface in EA games should be as simple as possible and as much as possible prompted by obvious visual cues right there on the screen. There should be no cryptic command-key sequences, and it shouldn’t be necessary to read the manual to learn how to play.

“Hotness” is the most abstract of the three qualities. It’s not quite the same as Marshall McLuhan’s definition of the term in Understanding Media, although there is a definite kinship. Hawkins described it as meaning that the program should take maximum advantage of what he saw as the four important strengths of the computer as an artistic medium: video and, increasingly with the arrival of the Commodore 64 and its magnificent SID chip, sound; interactivity, the single quality that most distinguished it from any other form of electronic media; and the ability to have hidden computational machinery to solve the bookkeeping problem that had so frustrated him in the tabletop simulations he had played as a kid. Hawkins wanted his games to push all four qualities “as far as you can” on each platform for which they were released.

Finally there is the notion of depth. Hawkins wanted EA’s games to strive for that classic ideal of being simple to learn and play, but challenging — and infinitely interesting — to master. He also pointedly considered this quality to be the real differentiation between the new generation of computer games to be made by EA and the old console and standup arcade games that were aimed at the same market of ordinary consumers. Sustained interest, he argued, required depth, and it was exactly the lack of same that had caused consumers to lose interest in Atari’s games in a way they wouldn’t in those of EA. He liked to say that arcade games were reactive rather than interactive, requiring the player to use her reflexes but not her intelligence or creativity.

There’s a definite sense of the over-optimistic here, particularly in this belief in the power of depth. A parade of truly awful games that have nevertheless become huge hits in the years since the Great Videogame Crash rather puts the lie to the idea that people would come to reject bad designs that seem determined to insult their intelligence. Nevertheless, much of Hawkins’s vision did indeed end up coming true. In his book A Casual Revolution from 2010, Jesper Juul laid out the qualities he feels define the new generation of casual games now played by a huge swathe of the population. The successful ones are, he writes: possessed of an immediately identifiable fictional context; easy to play for the first time; easily interruptible, and accepting of any level of player dedication; difficult enough to be interesting but not difficult enough to frustrate; and “juicy,” offering a constant, colorful stream of feedback to every action to hold the player’s interest. Juul’s criteria benefit from many additional years of gaming history, but they aren’t that horribly far from Hawkins’s vision for gaming back in 1982. That’s not to say that the casual model of gaming is or should be the only viable model — indeed, EA themselves would depart from it constantly over the years, and often for good reasons — but as a blueprint for consumer software, it’s hard to beat. When I played some early EA titles again recently after spending the last couple of years immersed in games of earlier vintage for this blog, I felt like I’d crossed some threshold into, if not quite modernity, at least something that felt a whole lot closer to it.

All of Hawkins’s design goals seemed great in the abstract, but of course to realize them he’d need to find actual designers capable of crafting that elusive combination of simple and deep gameplay. Not wanting to take any chances, he decided to go with several proven hands along with newcomers for EA’s first titles. He therefore made a list of those whose work had impressed him and started making calls, asking them to publish their next game through EA. To entice them, he offered exactly what you might expect: advances (a first for the industry) and generous royalty rates. That, however, was only the beginning of the pitch. Hawkins promised to do everything possible to let his developers and designers just do what they did best: create. To do so he would borrow liberally from the model of other forms of entertainment. Each development team would be assigned an in-house producer who would be their point of contact with EA and who would make sure all the boring stuff got done: arranging testing, arranging ports to other machines, adding copy protection, getting the manual written, keeping contracts up to date, coordinating with advertising and packaging designers. (EA’s early star in this role would be Joe Ybarra, who shepherded a string of classic titles through development.) In the long term, Hawkins also promised them access to a suite of in-house development tools, including workstation computers, a cross-platform FORTH compiler, and tools to develop video and audio content, along with in-house artists to help use them. Such tools would not always be used as widely or as soon as Hawkins had hoped, but they were, like so much else about EA, a preview of how game development would work in the future. But the most enticing thing that Hawkins offered his developers, and by far the most remembered today, was an appeal aimed straight at their egos: he promised to make them rock stars.

Hawkins had decided, logically enough, that if computer games were art then those who created them had to be considered artists. In fact, he decided to build EA’s June 1983 launch around this premise of “software artists.” Each EA game box bore a carefully crafted mission statement that made the company sound more like an artistic enclave than a for-profit corporation:

We’re an association of electronic artists who share a common goal. We want to fulfill the potential of personal computing. That’s a tall order. But with enough imagination and enthusiasm we believe there’s a good chance for success. Our products, like this game, are evidence of our intent. If you’d like to get involved, please write to us at…

Said boxes themselves were a slim-line design deliberately evocative of record albums, with big gate-fold insides featuring pictures and profiles of the artists behind the work. Hawkins imagined that, just as you always bought the new album from your favorite band, you would rush to buy the next game from Bill Budge or Dan Bunten; that every hip household would eventually have a shelf full of EA games waiting to be pulled down and played in lieu of an evening of television.

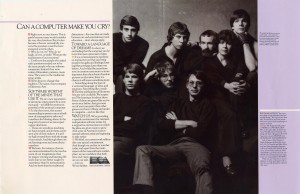

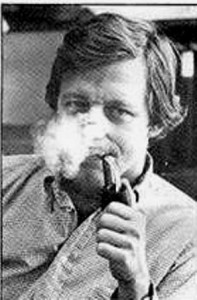

Indeed, EA’s first big advertising blitz was designed to demonstrate just what a hip and important new artistic medium the computer was. Hawkins had his stable of developers photographed in brooding rock-star poses lifted straight from an Annie Leibovitz shoot for Rolling Stone — which was appropriate, because EA largely bypassed the traditional computer press to run them in just that sort of glossy mainstream magazine.

The advertising headlines argued for software as the next great art form: “We See Farther”; “Can a Computer Make You Cry?” (The answer to the latter was essentially “We’re working on it.”) Nobody had ever promoted computer games quite like this. It was, if nothing else, audacious as all hell.

But now we come to the part of the article where we have to ask What It All Means. We have to be careful here. It would be very easy to look at the idealistic sentiments in those early advertisements, compare them with the allegedly soulless corporate behemoth that is EA today (voted “Worst Company in America” for 2012), and drift off into an elegy for the artistic integrity of the early days of gaming, lamenting the perpetual adolescence and sequel-driven creative bankruptcy the medium seems to be caught in today. That’s very, very easy to do, as demonstrated by the countless other blog entries in just that mold that you’ll find all over the Internet; I even did it once myself back in graduate school. Nor is it precisely a point of view without merit. Still, before we go too far down that sepia-toned road let’s make room for some other facets of all this.

There may be more similarities between the EA of 1983 and the EA of today than nostalgia likes to admit. A certain streak of cold corporate ruthlessness was a part of EA’s personality even then. For all the idealism, EA wasn’t terribly interested in playing nice with the others who had already done so much toward building this new industry. They bypassed the established distribution system that Ken Williams had first begun to build back in 1980 in favor of setting up their own network that let them sell their products to stores directly. It may seem a small thing, but the message was that EA didn’t need the rest of the industry, that now the adults were ready to take over, thank you. The first-generation publishers tended to view EA as wealthy carpetbaggers swooping in to capitalize on what they had spent years building. Yes, part of that was just inevitable jealousy toward the well-financed, well-connected Hawkins who never had to start his company on the shoestrings that they did, but there’s also a grain of truth to their complaints. June of 1983 marks as good a line of demarcation as any for the final end of the era that Doug Carlston called the software Brotherhood. The industry would be a different place in the post-EA era. The games would be better, more polished and sophisticated, but the competition would also be more ruthless, the atmosphere colder, everything slicker and more calculated. It’s hard not to feel that EA had something to do with that. The fact is that EA was always known to its competitors as a company of hard edges and sharp elbows.

One other thing that’s always lost in nostalgic reminiscence over EA’s first advertising campaign is the awkward fact that it actually didn’t work out all that terribly well. EA found that the mainstream public did not respond as they had hoped to their software artists, and within six months had already begun to switch gears, away from advertising the creators and back to advertising their creations on their own merits, as was the norm for other publishers. They also returned to the trade press for promotion, and often relaxed Hawkins’s rules for consumer software in favor of titles that catered more to the hardcore. Within a few years EA would have extended dungeon crawls, tough-as-nails adventure games, and strategy games with thick manuals, just like everybody else did. It turned out that consumers — or, perhaps more accurately, the PCs of the era — weren’t yet quite ready for consumer software. EA would turn into a very successful publisher, but not the force for widespread, mainstream cultural change Hawkins had imagined. Games would still be viewed by most of the tastemakers as kids’ stuff for many years to come. When that became clear (as it did in fairly short order), EA would continue to credit their developers on the box covers and to offer photos inside, but no longer made them the centerpiece of their marketing. Certainly there were no more developers-as-rock-stars photos like the one above.

Which brings us to another point that’s worthwhile to note. I have no doubt that much of the idealistic sentiment in those early advertisements was genuine, just as I have no doubt that Hawkins really, genuinely loved games and the potential of games and wanted to bring them to more people. Yet EA was also a business, funded by unsentimental people like Don Valentine. They ultimately demanded that EA live up to its earning potential. If presenting themselves to the public as an enclave of artists worked to do that, great. If making great, groundbreaking games did that, double great. But when push came to shove, EA needed to make money. Even the advertisement above displays as much cold calculation as it does idealism. There’s something not quite genuine about all those nerds mugging like rock stars. The message of the advertisements resonates so because it was good PR that connected perfectly with what EA wanted to be and — just as importantly — with what so many commentators today so desperately want them to have been. But, like the iconic Infocom advertisements that still largely define perceptions of that company, it’s also a very, very carefully crafted piece of calculated rhetoric. So, What It All Means is… complicated.

But to return to firmer ground, that of the games themselves: they were mostly good. Often really, really good. EA launched that June with seven titles available or announced: M.U.L.E., by Dan Bunten and his company Ozark Softscape; Archon, by Free Fall Associates; Murder on the Zinderneuf, also by Free Fall; Worms? by David Maynard; Pinball Construction Set by Bill Budge; Hard Hat Mack by Michael Abbot and Matthew Alexander; and Axis Assassin by John Field. Perhaps surprisingly given Hawkins’s connections to Apple, the first four of those originated on the Atari 8-bit machines, only the last three on the Apple II. On the other hand, with the Commodore 64 still quite new and something of an unknown quantity as these games were in development, the Atari machines were the best qualified to realize Hawkins’s vision of audiovisually “hot” games. (EA funded ports to most viable platforms as a matter of course for most games, so all of these titles did eventually reach several platforms.)

EA’s starting lineup was so good and so important to gaming history that I want to look at several of them individually. We’ll get started with that next time.

(There are several good interviews and articles about EA’s history available on the Internet. That said, it’s also worthwhile to go back to the spate of interviews and articles that greeted EA’s entrance back in 1983. Particularly good ones can be found in the October 1983 Byte, the July/August 1983 Softline, and the October 1983 Computer Gaming World.)