Some time ago, in the midst of a private email discussion about the general arc of adventure-game history, one of my readers offered up a bold claim: he said that the best single year to be a player of point-and-click graphic adventures was 1996. This rings decidedly counterintuitive, given that 1996 was also the year during which the genre first slid into a precipitous commercial decline that would not even begin to level out for a decade or more. But you know what? Looking at the lineup of games released that year, I found it difficult to argue with him. These were games of high hopes, soaring ambitions, and big budgets. The genre has never seen such a lineup since. How poignant and strange, I thought to myself. Then I thought about it some more, and I decided that it wasn’t really so strange at all.

For when we cast our glance back over entertainment history, we find that it’s not unusual for a strain of creative expression to peak in terms of sophistication and ambition some time after it has passed its zenith of raw popularity. Wings won the first ever best-picture Oscar two years after The Jazz Singer had numbered the days of soundless cinema; Duke Ellington’s big band blew up a storm at Newport two years after “Rock Around the Clock” and “That’s All Right” had heralded the end of jazz music at the top of the hit parade. The same sort of thing has happened on multiple occasions in gaming. I would argue, for example, that more great text adventures were commercially published after 1984, the year that interactive fiction plateaued and prepared for the down slide, than before that point. And then, of course, we have the graphic adventures of 1996 — the year after the release of Phantasmagoria, the last million-selling adventure game to earn such sales numbers entirely on its own intrinsic appeal, without riding the coattails of an earlier game for which it was a sequel or any other pre-existing mass-media sensation.

There are two reasons why this phenomenon occurs. One is that the people who decide what projects to green-light always have a tendency to look backward at least as much as forward; new market paradigms are always hard to get one’s head around. The other becomes increasingly prevalent as projects grow more complex, and the window of time between the day they are begun and the day they are completed grows longer as a result. A lot can happen in the world of media in the span of two years or more — not coincidentally, the type of time span that more and more game-development projects were starting to fill by the mid-1990s. Toonstruck, our subject for today, is a classic example of what can happen when the world in which a game is conceived is dramatically different from the one to which it is finally born.

Let us turn the clock back to late 1993, the moment of Toonstruck’s genesis. At that time, the conventional wisdom inside the established games industry about gaming’s necessary future hewed almost exclusively to what we might call the Sierra vision, because it was articulated so volubly and persuasively by that major publisher’s founder and president Ken Williams. It claimed that the rich multimedia affordances of CD-ROM would inevitably lead to a merger of interactivity with cinema. Popular movie stars would soon be vying to appear in interactive movies which would boast the same production values and storytelling depth as traditional movies, but which would play out on computer instead of movie-theater or television screens, with the course of the story in the hands of the ones sitting behind the screens. This mooted merger of Silicon Valley and Hollywood — often abbreviated as “Siliwood” — would require development budgets exponentially larger than those the industry had been accustomed to, but the end results would reach an exponentially wider audience.

The games publisher Virgin Interactive, a part of Richard Branson’s sprawling media and travel empire, was every bit as invested in this prophecy as Sierra was. Its Los Angeles-based American arm was the straw that stirred the drink, under the guidance of a Brit named Martin Alper, who had been working to integrate games into a broader media zeitgeist for many years; he had first made a name for himself in his homeland as the co-founder of the budget label Mastertronic, whose games embraced pop-culture icons from Michael Jackson to Clumsy Colin (the mascot of a popular brand of chips), and were sold as often from supermarkets as from software stores. Earlier in 1993, his arm of Virgin had published The 7th Guest, an interactive horror flick which struck many as a better prototype for the Sierra vision than anything Sierra themselves had yet released; it had garnered enormous sales and adoring press notices from the taste-makers of mainstream media as well as those inside the computer-gaming ghetto. Now, Alper was ready to take things to the next level.

He turned for ideas to another Brit who had recently joined him in Los Angeles: a man named David Bishop, who had already worked as a journalist, designer, manager, and producer over the course of his decade in the industry. Bishop proposed an interactive counterpart of sorts to Who Framed Roger Rabbit, the hit 1988 movie which had wowed audiences with the novel feat of inserting cartoon characters into a live-action world. Bishop’s game would do the opposite: insert real actors into a cartoon world. He urged Alper to pull out all the stops in order to make something that would be every bit as gobsmacking as Roger Rabbit had been in its day.

So far, so good. But who should take on the task of turning Bishop’s idea into a reality? The 7th Guest had been created by a then-tiny developer known as Trilobyte, itself a partnership between a frustrated filmmaker and a programming whiz. Taking the press releases that labeled them the avatars of the next generation of entertainment at face value, the two had now left the Virgin fold, signing a contract with a splashy new player in the multimedia sweepstakes called Media Vision. Someone else would have to make the game called Toonstruck.

In a telling statement of just how committed they already were to their interactive cartoon, Virgin USA, who had only acted as a publisher to this point, decided to dive into the development business. In October of 1993, Martin Alper put two of his most trusted producers, Neil Young and Chris Yates, in charge of a new, wholly owned development studio called Burst, formed just to make Toonstruck. The two were given a virtually blank check to do so. Make it amazing was their only directive.

So, Young and Yates went across town to Hollywood. There they hired Nelvana, an animation house that had been making cartoons of every description for over twenty years. And they hired as well a gaggle of voice-acting talent that was worthy of a big-budget Disney feature. There were Tim Curry, star of the camp classic The Rocky Horror Picture Show; Dan Castellaneta, the voice of Homer Simpson (“D’oh!”); David Ogden Stiers, who had played the blue-blooded snob Charles Emerson Winchester III on M*A*S*H; Dom DeLuise of The Cannonball Run and All Dogs Go to Heaven fame; plus many other less recognizable names who were nevertheless among the most talented and sought-after voices in cartoon production, the sort that any latch-key kid worth her salt had listened to for countless hours by the time she became a teenager. In hiring the star of the show — the actor destined to actually appear onscreen, inserted into the cartoon world — Burst pulled off their greatest coup of all: they secured the signature of none other than Christopher Lloyd, a veteran character actor best known as the hippie burnout Jim from the beloved sitcom Taxi, the mad scientist Doc Brown from the Back to the Future films… and Judge Doom, the villain from Who Framed Roger Rabbit. Playing in a game that would be the technological opposite of that film’s inserting of cartoon characters into the real world, Lloyd would become his old character’s psychological opposite, the hero rather than the villain. Sure, it was stunt casting — but how much more perfect could it get?

What happened next is impossible to explain in any detail. The fact is that Burst was and has remained something of a black box. What is clear, however, is that Toonstruck’s designers-in-the-trenches Richard Hare and Jennifer McWilliams took their brief to pull out all the stops and to spare no expense in doing so as literally as everyone else at the studio, concocting a crazily ambitious script. “We were full of ideas, so we designed and designed and designed,” says McWilliams, “with a great deal of emphasis on what would be cool and interesting and funny, and not so much focus on what would actually be achievable within a set schedule and budget. [Virgin] for the most part stepped aside and let us do our thing.”

Their colleagues storyboarded their ever-expanding design document and turned it into hours and hours of quality cartoon animation — animation which was intended to meet or exceed the bar set by a first-string Disney feature film. As they did so, the deadlines flew by unheeded. Originally earmarked with the eternal optimism of game developers and Chicago Cubs fans for the 1994 Christmas season, the project slipped into 1995, then 1996. Virgin trotted it out at trade show after trade show, making ever more sweeping claims about its eventual amazingness at each one, until it became an in-joke among the gaming journalists who dutifully inserted a few lines about it into each successive “coming soon” preview. By 1996, the bill for Toonstruck was approaching a staggering $8 million, enough to make it the second most expensive computer game to date. And yet it was still far from completion.

It seems clear that the project was poorly managed from the start. Take, for example, all that vaunted high-quality animation. Burst’s decision to make the cartoon of Toonstruck first, then figure out how to make use of it in an interactive context later was hardly the most cost-effective way of doing things. It made little sense to aim to compete with Disney on a level playing field when the limitations of the consumer-computing hardware of the time meant that the final product would have to be squashed down to a resolution of 640 X 400, with a palette of just 256 shades, for display on a dinky 15-inch monitor screen.

There are also hints of other sorts of dysfunction inside Burst, and between Burst and its parent company. One Virgin insider who chose to remain anonymous alluded vaguely in 1998 to the way that “internal politics made the situation worse. Some of the project leaders didn’t get on with other senior staff, and some people had friendships to protect. So there was finger-pointing and back-slapping going on at the same time.”

During the three years that Toonstruck spent in development, the Sierra vision of gaming’s necessary future was challenged by a new one. In December of 1993, id Software, a tiny renegade company operating outside the traditional boundaries of the industry by selling its creations largely through the shareware model, released a little game called DOOM, which featured exclusively computer-generated 3D environments, gobs of bloody action, and, to paraphrase a famous statement by its chief programmer John Carmack, no more story than your typical porn movie. Not long after, a studio called Blizzard Entertainment debuted a fantasy strategy game called Warcraft which played like an action game, in hectic real time; not the first of its type, it was nevertheless the one that really caught gamers’ imaginations, especially after Blizzard perfected the concept with 1995’s Warcraft II. With these games and others like them selling at least as well as the hottest adventures, the industry’s One True Way Forward had become a proverbial fork in the road. Publishers could continue to plow money into interactive movies in the hope of cracking into the mainstream of mass entertainment, or they could double down on their longstanding customer demographic of young white males by offering them yet more fast-paced mayhem. Already by 1995, the fact that games of the latter stripe tended to cost far less than those of the former was enough to seal the deal in the minds of many publishers.

Virgin Interactive was given especial food for thought that year when they wound up publishing Trilobyte’s next game after all. Media Vision, the publisher Trilobyte had signed with, had imploded amidst government investigations of securities fraud and other financial crimes, and an opportunistic Virgin had swooped into the bankruptcy auction and made off with the contract for The 11th Hour, the sequel to The 7th Guest. It seemed like quite a clever heist at the time — but it began to seem somewhat less so when The 11th Hour under-performed relative to expectations. Both reviewers and ordinary gamers stated clearly that they were already becoming bored of Trilobyte’s rote mixing of B-movie cinematics with hoary set-piece puzzles that mostly stemmed from well before the computer age — tired of the way that the movie and the gameplay in a Trilobyte creation had virtually nothing to do with one another.

Then, as I noted at the beginning of this article, 1996 brought with it an unprecedentedly large lineup of ambitious, earnest, and expensive games of the Siliwood stripe, with some of them at least much more thoughtfully designed than anything Trilobyte had ever come up with. Nonetheless, as the year went by an alarming fact was more and more in evidence: this year’s crop of multimedia extravaganzas was not producing any towering hits to rival the likes of Sherlock Holmes: Consulting Detective in 1992, The 7th Guest in 1993, Myst in 1994, or Phantasmagoria in 1995. Arguably the best year in history to be a player of graphic adventures, 1996 was also the year that broke the genre. Almost all of the big-budget adventure releases still to come from American publishers would owe their existence to corporate inertia, being projects that executives found easier to complete and hope for a miracle than to cancel outright and then try to explain the massive write-off to their shareholders — even if outright cancellation would have been better for their companies’ bottom lines. In short, by the beginning of 1997 only dreamers doubted that the real future of the gaming mainstream lay with the lineages of DOOM and Warcraft.

Before we rush to condemn the philistines who preferred such games to their higher-toned counterparts, we must acknowledge that their preferences had to do with more than sheer bloody-mindedness. First-person shooters and real-time-strategy games could be a heck of a lot of fun, and lent themselves very well to playing with others, whether gathered together in one room or, increasingly, over the Internet. The generally solitary pursuit of adventure gaming had no answer for this sort of boisterous bonding experience. And there was also an economic factor: an adventure was a once-and-done endeavor that might last a week or two at best, after which you had no recourse but to go out and buy another one. You could, on the other hand, spend literally years playing the likes of DOOM and Warcraft with your mates.

Then there is one final harsh reality to be faced: the fact is that the Sierra vision never came close to living up to its billing for the player. These games were never remotely like waking up in the starring role of a Hollywood film. Boosters like Ken Williams were thrilled to talk about interactive movies in the abstract, but these same people were notably vague about how their interactivity was actually supposed to work. They invested massively in Hollywood acting talent, in orchestral soundtracks, and in the best computer artists money could buy, while leaving the interactivity — the very thing that ostensibly set their creations apart — to muddle through on its own, one way or another.

Inevitably, then, the interactivity ended up taking the form of static puzzles, the bedrock of adventure games since the days when they had been presented all in text. The puzzle paradigm persisted into this brave new era simply because no one could proffer any other ideas about what the player should be doing that were both more compelling and technologically achievable. I hasten to add that some players really, genuinely love puzzles, love few things more than to work through an intricate web of them in order to make something happen; I include myself among this group. When puzzles are done right, they’re as satisfying and creatively valid as any other type of gameplay.

But here’s the rub: most people — perhaps even most gamers — really don’t like solving puzzles all that much at all. (These people are of course no better or worse than those who do — just different.) For the average Joe or Jane, playing one of these new-fangled interactive movies was like watching a conventional movie filmed on an ultra-low budget, usually with terrible acting. And then, for the pièce de résistance, you were expected to solve a bunch of boring puzzles for the privilege of witnessing the underwhelming next scene. Who on earth wanted to do this after a hard day at the office?

All of which is to say that the stellar sales of Consulting Detective, The 7th Guest, Myst, and Phantasmagoria were not quite the public validations of the concept of interactive movies that the industry chose to read them as. The reasons for these titles’ success were orthogonal to their merits as games, whatever the latter might have been. People bought them as technology demonstrations, to show off the new computers they had just purchased and to test out the CD-ROM drives they had just installed. They gawked at them for a while and then, satiated, planted themselves back in front of their televisions to spend their evenings as they always had. This was not, needless to say, a sustainable model for a mainstream gaming genre. By 1996, the days when the mere presence of human actors walking and/or talking on a computer monitor could wow even the technologically unsophisticated were fast waning. That left as customers only the comparatively tiny hardcore of buyers who had always played adventure games. They were thrilled by the diverse and sumptuous smorgasbord that was suddenly set before them — but the industry’s executives, looking at the latest sales numbers, most assuredly were not. Just like that, the era of Siliwood passed into history. One can only hope that all of the hardcore adventure fans enjoyed it while it lasted.

Toonstruck was, as you may have guessed, among the most prominent of the adventures that were released to disappointing results in 1996. That event happened at the very end of the year, and only thanks to a Virgin management team who decided in the summer that enough was enough. “The powers that be in management had to step in and give us a dose of reality,” says Jennifer McWilliams. “We then needed to come up with an ending that could credibly wrap the game up halfway through, with a cliffhanger that would, ideally, introduce part two. I think we did well considering the constraints we were under, but still, it was not what we originally envisioned.” Another, anonymous team member has described what happened more bluntly: “The team was told to ‘cut it or can it’ — it either had to be shipped real soon, or not at all.”

The former option was chosen, and thus Toonstruck shipped just before Christmas, on two discs that between them bore only about one third of the total amount of animation created for the game, and that in a severely degraded form. Greeted with reviews that ran the gamut from raves to pans, it wound up selling about 150,000 copies. For a normal game with a normal budget, such numbers would be just about acceptable; if the 100,000-copy threshold was no longer the mark of an outright hit in the computer-games industry of 1996, selling that many copies and then half again that many more wasn’t too bad either. Unfortunately, all of the usual quantifiers got thrown out for a game that had cost over $8 million to make. One Virgin employee later mused wryly how Toonstruck had been intended to “blow the public away. The only thing that got blown was vast amounts of cash, and the public stayed away.”

Bleeding red ink from the failure of Toonstruck and a number of other games, Virgin’s American arm was ordered by the parent company in London to downsize their budgets and ambitions drastically. After creating a few less expensive but equally commercially disappointing games, Burst Studios was sold in 1998 to Electronic Arts, who renamed it EA Pacific and shifted its focus to 3D real-time strategy — a sign of the times if ever there was one.

Such is one tale of Toonstruck, a game which could only have appeared in its own very specific time and place. But, you might be wondering, how does this relic of a fizzled vision of gaming’s future play?

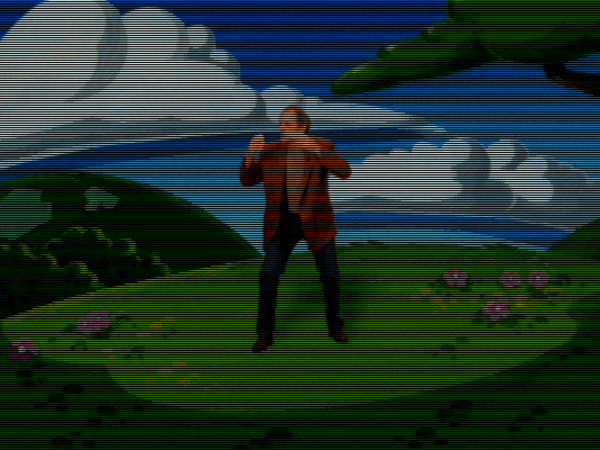

Toonstruck’s opening movie is not a cartoon. We instead meet Christopher Lloyd for the first time in the real world, in the role of Drew Blanc (get it?), a cartoonist suffering from writer’s block. He’s called into the office of his impatient boss Sam Schmaltz, who’s played by Ben Stein, an actor of, shall we say, limited range, but one who remains readily recognizable to an entire generation for playing every kid’s nightmare of a boring teacher in Ferris Bueller’s Day Off and The Wonder Years.

We learn that Drew is unhappy with his current assignment as the illustrator of The Fluffy Fluffy Bun Bun Show, a piece of cartoon pablum with as much edge as a melting stick of butter. He rather wants to do something with his creation Flux Wildly, a hyperactive creature of uncertain taxonomy and chaotic disposition. Schmaltz, however, quickly lives up to his name; he’s having none of it. A deflated Drew resigns himself to an all-nighter in the studio to make up the time he’s wasted daydreaming about the likes of Flux. But in the course of that night, he is somehow drawn into his television — right into a cartoon.

There the bewildered Drew meets none other than Flux Wildly himself, finding him every bit as charmingly unhinged as he’d always imagined him to be. He learns that the cartoon world in which he finds himself is divided into three regions: Cutopia, where the fluffy bun bun bunnies and their ilk live; Zanydu, which anarchists like Flux call home; and Malevoland, where true evil lurks. Trouble is, Count Nefarious of Malevoland has gotten tired of the current balance of power, and has started making bombing raids on the other two regions in his Malevolator, using its ray of evil to turn them as dark and twisted as his homeland. King Hugh of Cutopia promises Drew that, if he first saves them all by collecting the parts necessary to build a Cutifier — the antidote to the Malevolator — he will send Drew back to his own world.

All of that is laid out in the opening movie, after which the plot gears are more or less shifted into neutral while you commence wandering around solving puzzles. And it’s here that the game presents its most welcome surprise: unlike so many other multimedia productions of this era that were sold primarily on the basis of their audiovisuals, this game’s puzzle design is clever, complex, and carefully crafted. I have no knowledge of precisely how this game was tested and balanced, but I have to assume these things were done, and done well. It’s not an easy game by any means — there are dozens and dozens of puzzles here, layered on top of one another in a veritable tangle of dependencies — but it’s never an unfair one. In the best tradition of LucasArts, there are no deaths or dead ends. If you are willing to observe the environment with a meticulous eye, experiment patiently, and enter into the cartoon logic of a world where holes are portable and five minutes on a weight bench can transform your physique, you might just be able to solve this one without hints.

The puzzles manage the neat trick of being whimsical without ever abandoning logic entirely. Take, for example, the overarching meta-puzzle you’re attempting to solve as you wander through the lands. Assembling the Cutifier requires combining matched pairs of objects, such as sugar and spice (that’s a freebie the game gives you to introduce the concept). Other objects waiting for their partners include a dagger, some stripes, a heart, some whistles, some polish, etc. If possible combinations have started leaping to mind already, you might really enjoy this game. If they haven’t, on the other hand, you might not, or you might have fallen afoul of the exception to the rule of its general solubility: it requires a thoroughgoing knowledge of idiomatic English, of the sort that only native speakers or those who have been steeped in the language for many years are likely to possess.

While you’re working out its gnarly puzzle structure, Toonstruck is doing its level best to keep you amused in other ways. Players who are only familiar with Christopher Lloyd from his scenery-chewing portrayals in Back to the Future and Who Framed Roger Rabbit may be surprised at his relatively low-key performance here; more often than not, he’s acting as the straight man for his wise-cracking sidekick Flux Wildly and other gleefully over-the-top cartoon personalities. In truth, Lloyd was (and is) a more multi-faceted and flexible actor than his popular image might suggest, having decades of experience in film, television, and theater productions of all types behind him. His performance here, in what must have been extremely trying circumstances — he was, after all, constantly expected to say his lines to characters who weren’t actually there — feels impressively natural.

Drew Blanc’s friendship with Flux Wildly is the emotional heart of the story. Their relationship can’t help but bring to mind the much-loved LucasArts adventuring duo Sam and Max. Once again, we have here a subdued humanoid straight man paired with a less anthropomorphic pal who comes complete with a predilection for violence. Once again the latter keeps things lively with his antics and his constant patter. And once again you the player can use him like an inventory item from time to time on the problems you encounter, sometimes with productive and often with amusing results. Flux Wildly may just be my favorite thing in the game. I just wish he was around through the whole game; more on that momentarily.

Although Flux is a lot of fun, the writing in general is a bit of a mixed bag. As, for that matter, were contemporary reviews of the writing. Computer Gaming World found Toonstruck “hilarious”: “With humor that ranges from cutesy to risqué, Toonstruck keeps the laughter coming nonstop.” Next Generation, on the other hand, wrote that “the designers have tried desperately hard to make the game zany, wacky, crazy, twisted, madcap, and side-splittingly hilarious — but it just isn’t. The dialog, slapstick humor, and relentless ‘comedy’ situations are tired. You’ve seen most of these jokes done better 40 years ago.”

In a way, both takes are correct. Toonstruck is sometimes genuinely clever and funny, but just as often feels like it’s trying way too hard. There are reports that the intended audience for the game drifted over its three years in development, that it was originally planned as a kid-friendly game and only slowly moved in a more adult direction. This may explain some of the jarring tonal shifts inside its world. At times, the writing doesn’t seem to know what it wants to be, veering wildly from the light and frothy to that depressingly common species of videogame humor that mistakes transgression for wit. The most telling example is also the one scene that absolutely no one who has ever played this game, or for that matter merely watched it being played, can possibly forget, even if she wants to.

While exploring the land of Cutopia, you come upon a sweet, matronly dairy cow and her two BFFs, a cute and fuzzy sheep and a tired old horse. Some time later, Count Nefarious arrives to zap their farm with his Malevolator. Next time you visit, you find that the horse has been turned into glue. Meanwhile the cow is spread-eagled on a “Wheel-O-Luv,” her udders dangling pendulously in a way that looks downright pornographic, cackling with masochistic delight while the leather-clad sheep gives her her delicious punishment. Words fail me… this is something you have to see for yourself.

Here and in a few other places, Toonstruck is just off, weird in a way that is not just unfunny or immature but that actually leaves you feeling vaguely uncomfortable. It demonstrates that, for all Virgin Interactive’s mainstream ambitions, they were still a long way from mustering the thematic, aesthetic, and writerly unity that goes into a slick piece of mass-market entertainment.

Toonstruck is at its best when it is neither trying to transgress for the sake of it nor to please the mass market, but rather when it’s delicately skewering a certain stripe of sickly sweet, creatively bankrupt, lowest-common-denominator children’s programming that was all over television during the 1980s and 1990s. Think of The Care Bears, a program that was drawn by some of the same Nelvana animators who worked on Toonstruck; they must surely have enjoyed ripping their mawkish past to shreds here. Or, even better, think of Barney the hideous purple dinosaur, dawdling through excruciating songs with ripped-off melodies and cloying lyrics that sound like they were made up on the spot. Few media creations have ever been as easy to hate as him, as the erstwhile popularity of the Usenet newsgroup alt.Barney.dinosaur.die.die.die will attest.

Being created by so many insiders to the cartoon racket, Toonstruck is well placed to capture the very adult cynicism that oozes from such productions, engineered as they were mainly to sell plush toys to co-dependent children. It does so not least through King Hugh of Cutopia himself, who turns out to be — spoiler alert! — not quite the heroic exemplar of inclusiveness he’s billed as. Meanwhile Flux Wildly and his friends from Zanydu stand for a different breed of cartoons, ones which demonstrate a measure of respect for their young audience.

There does eventually come a point in Toonstruck, more than a few hours in, when you’ve unraveled the web of puzzles and assembled all twelve matched pairs that are required for the Cutifier. By now you feel like you’ve played a pretty complete game, and are expecting the end credits to start rolling soon. Instead the game pulls its next big trick on you: everything goes to hell in a hand basket and you find yourself in Count Nefarious’s dungeon, about to begin a second act whose existence was heretofore hinted at only by the presence of a second, as-yet unused CD in the game’s (real or virtual) box.

Most players agree that this unexpected second act is, for all the generosity demonstrated by the mere fact of its existence, considerably less enjoyable than the first. Your buddy Flux Wildly is gone, the environment darker and more constrained, and your necessary path through the plot more linear. It feels austere and lonely in contrast to what has come before — and not in a good way. Although the puzzle design remains solid enough, I imagine that this is the point where many players begin to succumb to the temptations of hints and walkthroughs. And it’s hard to blame them; the second act is the very definition of an anticlimax — almost a dramatic non sequitur in the way it throws the game out of its natural rhythm.

But a real ending — or at least a form of ending — does finally arrive. Drew Blanc defeats Count Nefarious and is returned to his own world. All seems well — until Flux Wildly contacts him again in the denouement to tell him that Nefarious really isn’t done away with just yet. Incredibly, this was once intended to mark the beginning of a third act, of four in total, all in the service of a parable about the creative process that the game we have only hints at. Laboring under their managers’ ultimatum to ship or else, the developers had to fall back on the forlorn hope of a surprise, sequel-justifying hit in the face of the marketplace headwinds that were blowing against the game. Jennifer McWilliams:

Toonstruck was meant to be a funny story about defeating some really weird bad guys, as it was when released, but originally it was also about defeating one’s own creative demons. It was a tribute to creative folks of all types, and was meant to offer encouragement to any of them that had lost their way. So, the second part of the game had Drew venturing into his own psyche, facing his fears (like a psychotically overeager dentist), living out his fantasies (like meeting his hero, Vincent van Gogh), and eventually finding a way to restore his creative spark.

It does sound intriguing on one level, but it also sounds like much, much too much for a game that already feels rather overstuffed. If the full conception had been brought to fruition, Toonstruck would have been absolutely massive, in the running for the biggest graphic adventure ever made. But whether its characters and puzzle mechanics could have supported the weight of so much content is another question. It seems that all or most of the animation necessary for acts three and four was created — more fruits of that $8 million budget — and this has occasionally led fans to dream of a hugely belated sequel. Yet it is highly doubtful whether any of the animation still exists, or for that matter whether the economics of using it make any more sense now than they did in the mid-1990s. Once all but completely forgotten, Toonstruck has enjoyed a revival of interest since it was put up for sale on digital storefronts some years ago. But only a small one: it would be a stretch to label it even a cult classic.

What we’re left with instead, then, is a fascinating exemplar of a bygone age; the fact that this game could only have appeared in the mid-1990s is a big part of its charm. Then, too, there’s a refreshing can-do spirit about it. Tasked with making something amazing, its creators did their honest best to achieve just that, on multiple levels. If the end result is imperfect in some fairly obvious ways, it never fails to be playable, which is more than can be said for many of its peers. Indeed, it remains well worth playing today for anyone who shivers with anticipation at the prospect of a pile of convoluted, deviously interconnected puzzles. Ditto for anyone who just wants to know what kind of game $8 million would buy you back in 1996.

(Sources: Starlog of May 1984 and August 1993; Computer Gaming World of January 1997; Electronic Entertainment of December 1995; Next Generation of January 1997, February 1997, and April 1998; PC Zone of August 1995, August 1996, and June 1998; Questbusters 117; Retro Gamer 174.

Toonstruck is available for digital purchase on GOG.com.)