Most of the academic papers about 3D graphics that John Carmack so assiduously studied during the 1990s stemmed from, of all times and places, the Salt Lake City, Utah, of the 1970s. This state of affairs was a credit to one man by the name of Dave Evans.

Born in Salt Lake City in 1924, Evans was a physicist by training and an electrical engineer by inclination, who found his way to the highest rungs of computing research by way of the aviation industry. By the early 1960s, he was at the University of California, Berkeley, where he did important work in the field of time-sharing, taking the first step toward the democratization of computing by making it possible for multiple people to use one of the ultra-expensive big computers of the day at the same time, each of them accessing it through a separate dumb terminal. During this same period, Evans befriended one Ivan Sutherland, who deserves perhaps more than any other person the title of Father of Computer Graphics as we know them today.

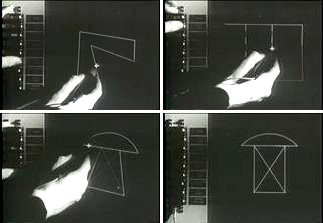

For, in the course of earning his PhD at MIT, Sutherland developed a landmark software application known as Sketchpad, the first interactive computer-based drawing program of any stripe. Sketchpad did not do 3D graphics. It did, however, record its user’s drawings as points and lines on a two-dimensional plane. The potential for adding a third dimension to its Flatland-esque world — a Z coordinate to go along with X and Y — was lost on no one, least of all Sutherland himself. His 1963 thesis on Sketchpad rocketed him into the academic stratosphere.

In 1964, at the ripe old age of 26, Sutherland succeeded J.C.R. Licklider as head of the computer division of the Defense Department’s Advanced Research Projects Agency (ARPA), the most remarkable technology incubator in computing history. Alas, he proved ill-suited to the role of administrator: he was too young, too introverted — just too nerdy, as a later generation would have put it. But during the unhappy year he spent there before getting back to the pure research that was his real passion, he put the University of Utah on the computing map, largely as a favor to his friend Dave Evans.

Evans may have left Salt Lake City more than a decade earlier, but he remained a devout Mormon, who found the counterculture values of the Berkeley of the 1960s rather uncongenial. So, he had decided to take his old alma mater up on an offer to come home and build a computer-science department there. Sutherland now awarded said department a small ARPA contract, one fairly insignificant in itself. What was significant was that it brought the University of Utah into the ARPA club of elite research institutions that were otherwise clustered on the coasts. An early place on the ARPANET, the predecessor to the modern Internet, was not the least of the perks which would come its way as a result.

Evans looked for a niche for his university amidst the august company it was suddenly joining. The territory of time-sharing was pretty much staked; extensive research in that field was already going full steam ahead at places like MIT and Berkeley. Ditto networking and artificial intelligence and the nuts and bolts of hardware design. Computer graphics, though… that was something else. There were smart minds here and there working on them — count Ivan Sutherland as Exhibit Number One — but no real research hubs dedicated to them. So, it was settled: computer graphics would become the University of Utah’s specialty. In what can only be described as a fantastic coup, in 1968 Evans convinced Sutherland himself to abandon the East Coast prestige of Harvard, where he had gone after leaving his post at ARPA, in favor of the Mormon badlands of Utah.

Things just snowballed from there. Evans and Sutherland assembled around them an incredible constellation of bright young sparks, who over the course of the next decade defined the terms and mapped the geography of the field of 3D graphics as we still know it today, writing papers that remain as relevant today as they were half a century ago — or perchance more so, given the rise of 3D games. For example, the two most commonly used algorithms for calculating the vagaries of light and shade in 3D games stem directly from the University of Utah: Gouraud shading was invented by a Utah student named Henri Gouraud in 1971, while Phong shading was invented by another named Bui Tuong Phong in 1973.

But of course, lots of other students passed through the university without leaving so indelible a mark. One of these was Jim Clark, who would still be semi-anonymous today if he hadn’t gone on to become an entrepreneur who co-founded two of the most important tech companies of the late twentieth century.

When you’ve written as many capsule biographies as I have, you come to realize that the idea of the truly self-made person is for the most part a myth. Certainly almost all of the famous names in computing history were, long before any of their other qualities entered into the equation, lucky: lucky in their time and place of birth, in their familial circumstances, perhaps in (sad as it is to say) their race and gender, definitely in the opportunities that were offered to them. This isn’t to disparage their accomplishments; they did, after all, still need to have the vision to grasp the brass ring of opportunity and the talent to make the most of it. Suffice to say, then, that luck is a prerequisite but the farthest thing from a guarantee.

Every once in a while, however, I come across someone who really did almost literally make something out of nothing. One of these folks is Jim Clark. If today as a soon-to-be octogenarian he indulges as enthusiastically as any of his Old White Guy peers in the clichéd trappings of obscene wealth, from the mansions, yachts, cars, and wine to the Victoria’s Secret model he has taken for a fourth wife, he can at least credibly claim to have pulled himself up to his current station in life entirely by his own bootstraps.

Clark was born in 1944, in a place that made Salt Lake City seem like a cosmopolitan metropolis by comparison: the small Texas Panhandle town of Plainview. He grew up dirt poor, the son of a single mother living well below the poverty line. Nobody expected much of anything from him, and he obliged their lack of expectations. “I thought the whole world was shit and I was living in the middle of it,” he recalls.

An indifferent student at best, he was expelled from high school his junior year for telling a teacher to go to hell. At loose ends, he opted for the classic gambit of running away to sea: he joined the Navy at age seventeen. It was only when the Navy gave him a standardized math test, and he scored the highest in his group of recruits on it, that it began to dawn on him that he might actually be good at something. Encouraged by a few instructors to pursue his aptitude, he enrolled in correspondence courses to fill his free time when out plying the world’s oceans as a crewman on a destroyer.

Ten years later, in 1971, the high-school dropout, now six years out of the Navy and married with children, found himself working on a physics PhD at Louisiana State University. Clark:

I noticed in Physics Today an article that observed that physicists getting PhDs from places like Harvard, MIT, Yale, and so on didn’t like the jobs they were getting. And I thought, well, what am I doing — I’m getting a PhD in physics from Louisiana State University! And I kept thinking, well, I’m married, and I’ve got these obligations. By this time, I had a second child, so I was real eager to get a good job, and I just got discouraged about physics. And a friend of mine pointed to the University of Utah as having a computer-graphics specialty. I didn’t know much about it, but I was good with geometry and physics, which involves a lot of geometry.

So, Clark applied for a spot at the University of Utah and was accepted.

But, as I already implied, he didn’t become a star there. His 1974 thesis was entitled “3D Design of Free-Form B-Spline Surfaces”; it was a solid piece of work addressing a practical problem, but not anything to really get the juices flowing. Afterward, he spent half a decade bouncing around from campus to campus as an adjunct professor: the Universities of California at Santa Cruz and Berkeley, the New York Institute of Technology, Stanford. He was fairly miserable throughout. As an academic of no special note, he was hired primarily as an instructor rather than a researcher, and he wasn’t at all cut out for the job, being too impatient, too irascible. Proving the old adage that the child is the father of the man, he was fired from at least one post for insubordination, just like that angry teenager who had once told off his high-school teacher. Meanwhile he went through not one but two wives. “I was in this kind of downbeat funk,” he says. “Dark, dark, dark.”

It was now early 1979. At Stanford, Clark was working right next door to Xerox’s famed Palo Alto Research Center (PARC), which was inventing much of the modern paradigm of computing, from mice and menus to laser printers and local-area networking. Some of the colleagues Clark had known at the University of Utah were happily ensconced over there. But he was still on the outside looking in. It was infuriating — and yet he was about to find a way to make his mark at last.

Hardware engineering at the time was in the throes of a revolution and its backlash, over a technology that went by the mild-mannered name of “Very Large Scale Integration” (VLSI). The integrated circuit, which packed multiple transistors onto a single microchip, had been invented at Texas Instruments at the end of the 1950s, and had become a staple of computer design already during the following decade. Yet those early implementations often put only a relative handful of transistors on a chip, meaning that they still required lots of chips to accomplish anything useful. A turning point came in 1971 with the Intel 4004, the world’s first microprocessor — i.e., the first time that anyone put the entire brain of a computer on a single chip. Barely remarked at the time, that leap would result in the first kit computers being made available for home users in 1975, followed by the Trinity of 1977, the first three plug-em-in-and-go personal computers suitable for the home. Even then, though, there were many in the academic establishment who scoffed at the idea of VLSI, which required a new, in some ways uglier approach to designing circuitry. In a vivid illustration that being a visionary in some areas doesn’t preclude one from being a reactionary in others, many of the folks at PARC were among the scoffers. Look how far we’ve come doing things one way, they said. Why change?

A PARC researcher named Lynn Conway was enraged by such hidebound thinking. A rare female hardware engineer, she had made scant progress to date getting her point of view through to the old boys’ club that surrounded her at PARC. So, broadening her line of attack, she wrote a paper about the basic techniques of modern chip design, and sent it out to a dozen or so universities along with a tempting offer: if any students or faculty wished to draw up schematics for a chip of their own and send them to her, she would arrange to have the chip fabricated in real silicon and sent back to its proud parent. The point of it all was just to get people to see the potential of VLSI, not to push forward the state of the art. And indeed, just as she had expected, almost all of the designs she received were trivially simple by the standards of even the microchip industry of 1979: digital time keepers, adding machines, and the like. But one was unexpectedly, even crazily complex. Alone among the submissions, it bore a precautionary notice of copyright, from one James Clark. He called his creation the Geometry Engine.

The Geometry Engine was the first and, it seems likely, only microchip that Jim Clark ever personally attempted to design in his life. It was created in response to a fundamental problem that had been vexing 3D modelers since the very beginning: that 3D graphics required shocking quantities of mathematical calculations to bring to life, scaling almost exponentially with the complexity of the scene to be depicted. And worse, the type of math they required was not the type that the researchers’ computers were especially good at.

Wait a moment, some of you might be saying. Isn’t math the very thing that computers do? It’s right there in the name: they compute things. Well, yes, but not all types of math are created equal. Modern computers are also digital devices, meaning they are naturally equipped to deal only with discrete things. Like the game of DOOM, theirs is a universe of stair steps rather than smooth slopes. They like integer numbers, not decimals. Even in the 1960s and 1970s, they could approximate the latter through a storage format known as floating point, but they dealt with these floating-point numbers at least an order of magnitude slower than they did whole numbers, as well as requiring a lot more memory to store them. For this reason, programmers avoided them whenever possible.

And it actually was possible to do so a surprisingly large amount of the time. Most of what computers were commonly used for could be accomplished using only whole numbers — for example, by using Euclidean division that yields a quotient and a remainder in place of decimal division. Even financial software could be built using integers only to count the total number of cents rather than floating-point values to represent dollars and cents. 3D-graphics software, however, was one place where you just couldn’t get around them. Creating a reasonably accurate mathematical representation of an analog 3D space forced you to use floating-point numbers. And this in turn made 3D graphics slow.
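The integer-only approach is easy to illustrate. What follows is a minimal sketch in Python rather than anything taken from period software; the function names `split_evenly` and `format_cents` are my own inventions, and the amounts are assumed to be non-negative. It keeps money as a whole number of cents and uses Euclidean division, quotient plus remainder, instead of decimal division.

```python
# Integer-only arithmetic: no floating-point value appears anywhere.
# Function names are hypothetical; amounts are assumed non-negative.

def split_evenly(total_cents: int, people: int) -> tuple[int, int]:
    """Euclidean division: each person's share, plus the cents left over."""
    share, leftover = divmod(total_cents, people)
    return share, leftover


def format_cents(cents: int) -> str:
    """Turn an integer count of cents into a dollars-and-cents string."""
    dollars, rest = divmod(cents, 100)
    return f"${dollars}.{rest:02d}"


price_cents = 1999                 # $19.99, stored as a whole number
total_cents = price_cents * 3      # three items
share, leftover = split_evenly(total_cents, 4)

print(format_cents(total_cents))   # the full bill
print(format_cents(share), leftover)
```

The only place a decimal point shows up is in the string produced for display; every calculation happens on whole numbers.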

Jim Clark certainly wasn’t the first person to think about designing a specialized piece of hardware to lift some of the burden from general-purpose computer designs, an add-on optimized for doing the sorts of mathematical operations that 3D graphics required and nothing else. Various gadgets along these lines had been built already, starting a decade or more before his Geometry Engine. Clark was the first, however, to think of packing it all onto a single chip — or at worst a small collection of them — that could live on a microcomputer’s motherboard or on a card mounted in a slot, that could be mass-produced and sold in the thousands or millions. His description of his “slave processor” sounded disarmingly modest (not, it must be said, a quality for which Clark is typically noted): “It is a four-component vector, floating-point processor for accomplishing three basic operations in computer graphics: matrix transformations, clipping, and mapping to output-device coordinates [i.e., going from an analog world space to pixels in a digital raster].” Yet it was a truly revolutionary idea, the genesis of the graphics processing units (GPUs) of today, which are in some ways more technically complex than the CPUs they serve. The Geometry Engine still needed to use floating-point numbers — it was, after all, still a digital device — but the old engineering doctrine that specialization yields efficiency came into play: it was optimized to do only floating-point calculations, and only a tiny subset of all the ones possible at that, just as quickly as it could.
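To make Clark’s three operations a little more concrete, here is a rough software sketch of them in Python. It is emphatically not a description of how the Geometry Engine was wired: the function names are hypothetical, the clip test handles only single points (real clipping trims lines and polygons against the edges of the view volume), and the convention that visible coordinates fall between -w and w is an assumption borrowed from later graphics practice.

```python
from typing import List, Optional, Tuple

Vec4 = Tuple[float, float, float, float]   # four-component vector (x, y, z, w)
Mat4 = List[List[float]]                   # 4x4 matrix stored as rows


def transform(m: Mat4, v: Vec4) -> Vec4:
    """Operation 1: multiply a 4x4 matrix by a four-component vector."""
    return tuple(sum(m[r][c] * v[c] for c in range(4)) for r in range(4))


def inside_clip_volume(v: Vec4) -> bool:
    """Operation 2, simplified: keep a point only if -w <= x, y, z <= w."""
    x, y, z, w = v
    return w > 0 and all(-w <= c <= w for c in (x, y, z))


def to_device(v: Vec4, width: int, height: int) -> Tuple[int, int]:
    """Operation 3: divide by w, then map the range [-1, 1] onto pixels."""
    x, y, _, w = v
    px = int((x / w + 1.0) * 0.5 * (width - 1))
    py = int((1.0 - y / w) * 0.5 * (height - 1))   # row 0 is the top of the screen
    return px, py


def project_point(m: Mat4, p: Vec4, width: int, height: int) -> Optional[Tuple[int, int]]:
    """Run one point through all three stages; None means it was clipped."""
    clip = transform(m, p)
    if not inside_clip_volume(clip):
        return None
    return to_device(clip, width, height)


IDENTITY: Mat4 = [[1.0 if r == c else 0.0 for c in range(4)] for r in range(4)]

print(project_point(IDENTITY, (-1.0, 1.0, 0.0, 1.0), 640, 480))  # top-left corner
print(project_point(IDENTITY, (2.0, 0.0, 0.0, 1.0), 640, 480))   # off-screen point
```

Every point in a scene has to go through multiplications and divisions like these for every frame, which gives some sense of why dedicated floating-point hardware was so appealing.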

The Geometry Engine changed Clark’s life. At last, he had something exciting and uniquely his. “All of these people started coming up and wanting to be part of my project,” he remembers. Always an awkward fit in academia, he turned his thinking in a different direction, adopting the mindset of an entrepreneur. “He reinvented his relationship to the world in a way that is considered normal only in California,” writes journalist Michael Lewis in a book about Clark. “No one who had been in his life to that point would be in it ten years later. His wife, his friends, his colleagues, even his casual acquaintances — they’d all be new.” Clark himself wouldn’t hesitate to blast his former profession in later years with all the fury of a professor scorned.

I love the metric of business. It’s money. It’s real simple. You either make money or you don’t. The metric of the university is politics. Does that person like you? Do all these people like you enough to say, “Yeah, he’s worthy?”

But by whatever metric, success didn’t come easy. The Geometry Engine and all it entailed proved a harder sell with the movers and shakers in commercial computing than it had with his colleagues at Stanford. It wasn’t until 1982 that he was able to scrape together the funding to found a company called Silicon Graphics, Incorporated (SGI), and even then he was forced to give 85 percent of his company’s shares to others in order to make it a reality. Then it took another two years after that to actually ship the first hardware.

The market segment SGI was targeting is one that no longer really exists. The machines it made were technically microcomputers, being built around microprocessors, but they were not intended for the homes of ordinary consumers, nor even for the cubicles of ordinary office workers. These were much higher-end, more expensive machines than those, even if they could fit under a desk like one of them. They were called workstation computers. The typical customer spent tens or hundreds of thousands of dollars on them in the service of some highly demanding task or another.

In the case of the SGI machines, of course, that task was almost always related to graphics, usually 3D graphics. Their expense wasn’t bound up with their CPUs; in the beginning, these were fairly plebeian chips from the Motorola 68000 series, the same line used in such consumer-grade personal computers as the Apple Macintosh and the Commodore Amiga. No, the justification of their high price tags rather lay with their custom GPUs, which even in 1984 already went far beyond the likes of Clark’s old Geometry Engine. An SGI GPU was a sort of black box for 3D graphics: feed it all of the data that constituted a scene on one side, and watch a glorious visual representation emerge at the other, thanks to an array of specialized circuitry designed for that purpose and no other.

Now that it had finally gotten off the ground, SGI became very successful very quickly. Its machines were widely used in staple 3D applications like computer-aided industrial design (CAD) and flight simulation, whilst also opening up new vistas in video and film production. They drove the shift in Hollywood from special effects made using miniature models and stop-motion techniques dating back to the era of King Kong to the extensive use of computer-generated imagery (CGI) that we see even in the purportedly live-action films of today. (Steven Spielberg and George Lucas were among SGI’s first and best customers.) “When a moviegoer rubbed his eyes and said, ‘What’ll they think of next?’,” writes Michael Lewis, “it was usually because SGI had upgraded its machines.”

The company peaked in the early 1990s, when its graphics workstations were the key to CGI-driven blockbusters like Terminator 2 and Jurassic Park. Never mind the names that flashed by in the opening credits; everyone could agree that the computer-generated dinosaurs were the real stars of Jurassic Park. SGI was bringing in over $3 billion in annual revenue and had close to 15,000 employees by 1993, the year that movie was released. That same year, President Bill Clinton and Vice President Al Gore came out personally to SGI’s offices in Silicon Valley to celebrate this American success story.

SGI’s hardware subsystem for graphics, the beating heart of its business model, was known in 1993 as the RealityEngine2. This latest GPU was, wrote Byte magazine in a contemporary article, “richly parallel,” meaning that it could do many calculations simultaneously, in contrast to a traditional CPU, which could only execute one instruction at a time. (Such parallelism is the reason that modern GPUs are so often used for some math-intensive non-graphical applications, such as crypto-currency mining and machine learning.) To support this black box and deliver to its well-heeled customers a complete turnkey solution for all their graphics needs, SGI had also spearheaded an open-source software library for 3D applications, known as the Open Graphics Library, or OpenGL. Even the CPUs in its latest machines were SGI’s own; it had purchased a maker of same called MIPS Technologies in 1990.

But all of this success did not imply a harmonious corporation. Jim Clark was convinced that he had been hard done by back in 1982, when he was forced to give up 85 percent of his brainchild in order to secure the funding he needed, then screwed over again when he was compelled by his board to give up the CEO post to a former Hewlett Packard executive named Ed McCracken in 1984. The two men had been at vicious loggerheads for years; Clark, who could be downright mean when the mood struck him, reduced McCracken to public tears on at least one occasion. At one memorable corporate retreat intended to repair the toxic atmosphere in the board room, recalls Clark, “the psychologist determined that everyone else on the executive committee was passive aggressive. I was just aggressive.”

Clark claims that the most substantive bone of contention was McCracken’s blasé indifference to the so-called low-end market, meaning all of those non-workstation-class personal computers that were proliferating in the millions during the 1980s and early 1990s. If SGI’s machines were advancing by leaps and bounds, these consumer-grade computers were hopscotching on a rocket. “You could see a time when the PC would be able to do the sort of graphics that [our] machines did,” says Clark. But McCracken, for one, couldn’t see it, was content to live fat and happy off of the high prices and high profit margins of SGI’s current machines.

He did authorize some experiments at the lower end, but his heart was never in it. In 1990, SGI deigned to put a limited subset of the RealityEngine smorgasbord onto an add-on card for Intel-based personal computers. Calling it IrisVision, it hopefully talked up its price of “under $5000,” which really was absurdly low by the company’s usual standards. What with its complete lack of software support and its way-too-high price for this marketplace, IrisVision went nowhere, whereupon McCracken took the failure as a vindication of his position. “This is a low-margin business, and we’re a high-margin company, so we’re going to stop doing that,” he said.

Despite McCracken’s indifference, Clark eventually managed to broker a deal with Nintendo to make a MIPS microprocessor and an SGI GPU the heart of the latter’s Nintendo 64 videogame console. But he quit after yet another shouting match with McCracken in 1994, two years before it hit the street.

He had been right all along about the inevitable course of the industry, however undiplomatically he may have stated his case over the years. Personal computers did indeed start to swallow the workstation market almost at the exact point in time that Clark bailed. The profits from the Nintendo deal were rich, but they were largely erased by another of McCracken’s pet projects, an ill-advised acquisition of the struggling supercomputer maker Cray. Meanwhile, with McCracken so obviously more interested in selling a handful of supercomputers for millions of dollars each than millions upon millions of consoles for a few hundred dollars each, a group of frustrated SGI employees left the company to help Nintendo make the GameCube, the followup to the Nintendo 64, on their own. It was all downhill for SGI after that, bottoming out in a 2009 bankruptcy and liquidation.

As for Clark, he would go on to a second entrepreneurial act as remarkable as his first, abandoning 3D graphics to make a World Wide Web browser with Marc Andreessen. We will say farewell to him here, but you can read the story of his second company Netscape’s meteoric rise and fall elsewhere on this site.

Now, though, I’d like to return to the scene of SGI’s glory days, introducing in the process three new starring players. Gary Tarolli and Scott Sellers were talented young engineers who were recruited to SGI in the 1980s; Ross Smith was a marketing and business-development type who initially worked for MIPS Technologies, then ended up at SGI when it acquired that company in 1990. The three became fast friends. Being of a younger generation, they didn’t share the contempt for everyday personal computers that dominated among their company’s upper management. Whereas the latter laughed at the primitiveness of games like Wolfenstein 3D and Ultima Underworld, if they bothered to notice them at all, our trio saw a brewing revolution in gaming, and thought about how much it could be helped along by hardware-accelerated 3D graphics.

Convinced that there was a huge opportunity here, they begged their managers to get into the gaming space. But, still smarting from the recent failure of IrisVision, McCracken and his cronies rejected their pleas out of hand. (One of the small mysteries in this story is why their efforts never came to the attention of Jim Clark, why an alliance was never formed. The likely answer is that Clark had, by his own admission, largely removed himself from the day-to-day running of SGI by this time, being more commonly seen on his boat than in his office.) At last, Tarolli, Sellers, Smith, and some like-minded colleagues ran another offer up the flagpole. You aren’t doing anything with IrisVision, they said. Let us form a spinoff company of our own to try to sell it. And much to their own astonishment, this time management agreed.

They decided to call their new company Pellucid — not the best name in the world, sounding as it did rather like a medicine of some sort, but then they were still green at all this. The technology they had to peddle was a couple of years old, but it still blew just about anything else in the MS-DOS/Windows space out of the water, being able to display 16 million colors at a resolution of 1024 X 768, with 3D acceleration built-in. (Contrast this with the SVGA card found in the typical home computer of the time, which could do 256 colors at 640 X 480, with no 3D affordances.) Pellucid rebranded the old IrisVision the ProGraphics 1024. Thanks to the relentless march of chip-fabrication technology, they found that they could now manufacture it cheaply enough to be able to sell it for as little as $1000 — still pricey, to be sure, but a price that some hardcore gamers, as well as others with a strong interest in having the best graphics possible, might just be willing to pay.
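A bit of back-of-the-envelope arithmetic shows how wide that gap was. The Python sketch below assumes that pixels are packed tightly with no padding and that only a single screen’s worth of image is stored; I don’t know how much memory either card actually carried, so take it as an illustration of the two display modes rather than a specification. The helper name `framebuffer_bytes` is mine.

```python
def framebuffer_bytes(width: int, height: int, colors: int) -> int:
    """Bytes needed to hold one screen, assuming tightly packed pixels."""
    bits_per_pixel = (colors - 1).bit_length()   # 256 colors -> 8 bits, 2**24 -> 24 bits
    return width * height * bits_per_pixel // 8


pro_graphics = framebuffer_bytes(1024, 768, 2**24)   # "16 million colors"
svga = framebuffer_bytes(640, 480, 256)

print(pro_graphics, "bytes for 1024 x 768 in 16 million colors")
print(svga, "bytes for 640 x 480 in 256 colors")
print(pro_graphics / svga, "times as much data per screen")
```

Under those assumptions, a single screen in the higher mode needs between seven and eight times as much data as the SVGA mode, before any 3D work has even begun.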

The problem, the folks at Pellucid soon came to realize, was a well-nigh intractable deadlock between the chicken and the egg. Without software written to take advantage of its more advanced capabilities, the ProGraphics 1024 was just another SVGA graphics card, selling for a ridiculously high price. So, consumers waited for said software to arrive. Meanwhile software developers, seeing the as-yet non-existent installed base, saw no reason to begin supporting the card. Breaking this logjam would require a concentrated public-relations and developer-outreach effort, the likes of which the shoestring spinoff couldn’t possibly afford.

They thought they had done an end-run around the problem in May of 1993, when they agreed, with the blessing of SGI, to sell Pellucid kit and caboodle to a major up-and-comer in consumer computing known as Media Vision, which currently sold “multimedia upgrade kits” consisting of CD-ROM drives and sound cards. But Media Vision’s ambitions knew no bounds: they intended to branch out into many other kinds of hardware and software. With proven people like Stan Cornyn, a legendary hit-maker from the music industry, on their management rolls and with millions and millions of dollars on hand to fund their efforts, Media Vision looked poised to dominate.

It seemed the perfect landing place for Pellucid; Media Vision had all the enthusiasm for the consumer market that SGI had lacked. The new parent company’s management said, correctly, that the ProGraphics 1024 was too old by now and too expensive to ever become a volume product, but that 3D acceleration’s time would come as soon as the current wave of excitement over CD-ROM and multimedia began to ebb and people started looking for the next big thing. When that happened, Media Vision would be there with a newer, more reasonably priced 3D card, thanks to the people who had once called themselves Pellucid. It sounded pretty good, even if in the here and now it did seem to entail more waiting around than anything else.

There was just one stumbling block: “Media Vision was run by crooks,” as Scott Sellers puts it. In April of 1994, a scandal erupted in the business pages of the nation’s newspapers. It turned out that Media Vision had been an experiment in “fake it until you make it” on a gigantic scale. Its founders had engaged in just about every form of malfeasance imaginable, creating a financial house of cards whose honest revenues were a minuscule fraction of what everyone had assumed them to be. By mid-summer, the company had blown away like so much dust in the wind, still providing income only for the lawyers who were left to pick over the corpse. (At least two people would eventually be sent to prison for their roles in the conspiracy.) The former Pellucid folks were left as high and dry as everyone else who had gotten into bed with Media Vision. All of their efforts to date had led to the sale of no more than 2000 graphics cards.

That same summer of 1994, a prominent Silicon Valley figure named Gordon Campbell was looking for interesting projects in which to invest. Campbell had earned his reputation as one of the Valley’s wise men through a company called Chips and Technologies (C&T), which he had co-founded in 1984. One of those hidden movers in the computer industry, C&T had largely invented the concept of the chipset: chips or small collections of them that could be integrated directly into a computer’s motherboard to perform functions that used to be placed on add-on cards. C&T had first made a name for itself by reducing IBM’s bulky nineteen-chip EGA graphics card to just four chips that were cheaper to make and consumed less power. Campbell’s firm thrived alongside the cost-conscious PC clone industry, which by the beginning of the 1990s was rendering IBM itself, the very company whose products it had once so unabashedly copied, all but irrelevant. Onboard video, onboard sound, disk controllers, basic firmware… you name it, C&T had a cheap, good-enough-for-the-average-consumer chipset to handle it.

But now Campbell had left C&T “in pursuit of new opportunities,” as they say in Valley speak. Looking for a marketing person for one of the startups in which he had invested a stake, he interviewed a young man named Ross Smith who had SGI on his résumé — always a plus. But the interview didn’t go well. Campbell:

It was the worst interview I think I’ve ever had. And so finally, I just turned to him and I said, “Okay, your heart’s not in this interview. What do you really want to do?”

And he kind of looks surprised and says, well, there are these two other guys, and we want to start a 3D-graphics company. And the next thing I know, we had set up a meeting. And we had, over a lot of beers, a discussion which led these guys to all come and work at my office. And that set up the start of 3Dfx.

It seemed to all of them that, after all of the delays and blind alleys, it truly was now or never to make a mark. For hardware-accelerated 3D graphics were already beginning to trickle down into the consumer space. In standup arcades, games like Daytona USA and Virtua Fighter were using rudimentary GPUs. Ditto the Sega Saturn and the Sony PlayStation, the latest in home-videogame consoles, both of which were on the verge of release in Japan, with American debuts expected in 1995. Meanwhile the software-only, 2.5D graphics of DOOM were taking the world of hardcore computer gamers by storm. The men behind 3Dfx felt that the next move must surely seem obvious to many other people besides themselves. The only reason the masses of computer-game players and developers weren’t clamoring for 3D graphics cards already was that they didn’t yet realize what such gadgets could do for them.

Still, they were all wary of getting back into the add-on board market, where they had been burned so badly before. Selling products directly to consumers required retail access and marketing muscle that they still lacked. Instead, following in the footsteps of C&T, they decided to sell a 3D chipset only to other companies, who could then build it into add-on boards for personal computers, standup-arcade machines, whatever they wished.

At the same time, though, they wanted their technology to be known, in exactly the way that the anonymous chipsets made by C&T were not. In the pursuit of this aspiration, Gordon Campbell found inspiration from another company that had become a household name despite selling very little directly to consumers. Intel had launched the “Intel Inside” campaign in 1990, just as the era of the PC clone was giving way to a more amorphous commodity architecture. The company introduced a requirement that the makers of computers which used its CPUs include the Intel Inside logo on their packaging and on the cases of the computers themselves, even as it made the same logo the centerpiece of a standalone advertising campaign in print and on television. The effort paid off; Intel became almost as identified with the Second Home Computer Revolution in the minds of consumers as was Microsoft, whose own logo showed up on their screens every time they booted into Windows. People took to calling the emerging duopoly the “Wintel” juggernaut, a name which has stuck around to this day.

So, it was decided: a requirement to display a similarly snazzy 3Dfx logo would be written into that company’s contracts as well. The 3Dfx name itself was a vast improvement over Pellucid. As time went on, 3Dfx would continue to display a near-genius for catchy branding: “Voodoo” for the chipset itself, “GLide” for the software library that controlled it. All of this reflected a business savvy the likes of which hadn’t been seen from Pellucid, one that was a credit both to Campbell’s steady hand and to the accumulating experience of the other three partners.

But none of it would have mattered without the right product. Campbell told his trio of protégés in no uncertain terms that they were never going to make a dent in computer gaming with a $1000 video card; they needed to get the price down to a third of that at the most, which meant the chipset itself could cost the manufacturers who used it in their products not much more than $100 a pop. That was a tall order, especially considering that gamers’ expectations of graphical fidelity weren’t diminishing. On the contrary: the old Pellucid card hadn’t even been able to do 3D texture mapping, a failing that gamers would never accept post-DOOM.

It was left to Gary Tarolli and Scott Sellers to figure out what absolutely had to be in there, such as the aforementioned texture mapping, and what they could get away with tossing overboard. Driven by the remorseless logic of chip-fabrication costs, they wound up going much farther with the tossing than they ever could have imagined when they started out. There could be no talk of 24-bit color or unusually high resolutions: 16-bit color (offering a little over 65,000 onscreen shades) at a resolution of 640 X 480 would be the limit.[1] Likewise, they threw out the capability of handling any polygons except for the simplest of them all, the humble triangle. For, they realized, you could make almost any solid you liked by combining triangular surfaces together. With enough triangles in your world — and their chipset would let you have up to 1 million of them — you needn’t lament the absence of the other polygons all that much.
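The arithmetic behind those limits can be sketched in a few lines of Python. This is only a back-of-the-envelope illustration: the 2 MB frame-buffer budget and the 16-bit depth value are assumptions made for the example (the article gives only a 4 MB total for the board), and the helper names are hypothetical.

```python
# Back-of-the-envelope frame-buffer arithmetic for a 16-bit 3D card.
# ASSUMPTION: 2 MB of the board's RAM is set aside for the frame buffer,
# and a Z-buffer entry is 16 bits, the same size as a color pixel.

BYTES_PER_PIXEL = 2                   # 16 bits per color (or depth) value
FRAME_BUFFER_BYTES = 2 * 1024 * 1024  # assumed 2 MB budget


def colors(bits: int) -> int:
    """Number of distinct shades a pixel of the given bit depth can hold."""
    return 2 ** bits


def buffer_bytes(width: int, height: int, buffers: int) -> int:
    """Memory needed for `buffers` full-screen buffers at this resolution."""
    return width * height * BYTES_PER_PIXEL * buffers


def fits(width: int, height: int, buffers: int,
         budget: int = FRAME_BUFFER_BYTES) -> bool:
    """True if the requested buffers fit inside the memory budget."""
    return buffer_bytes(width, height, buffers) <= budget


if __name__ == "__main__":
    print(colors(16))  # 65536, i.e. "a little over 65,000"
    for width, height in ((640, 480), (800, 600)):
        # 2 buffers = front + back; 3 buffers = front + back + Z-buffer
        for buffers in (2, 3):
            print(width, height, buffers, fits(width, height, buffers))
```

Under these assumptions, two color buffers plus a Z-buffer fit comfortably at 640 X 480, while at 800 X 600 only the two color buffers fit, which is consistent with the trade-off described in the footnote.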

Sellers had another epiphany soon after. Intel’s latest CPU, to which gamers were quickly migrating, was the Pentium. It had a built-in floating-point co-processor which was… not too shabby, actually. It should therefore be possible to take the first phase of the 3D-graphics pipeline — the modeling phase — out of the GPU entirely and just let the CPU handle it. And so another crucial decision was made: they would concern themselves only with the rendering or rasterization phase, which was a much greater challenge to tackle in software alone, even with a Pentium. Another huge piece of the puzzle was thus neatly excised — or rather outsourced back to the place where it was already being done in current games. This would have been heresy at SGI, whose ethic had always been to do it all in the GPU. But then, they were no longer at SGI, were they?
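To make that division of labor a little more concrete, here is a minimal Python sketch of the sort of work that stays on the CPU under such a scheme: turning a triangle's 3D vertices into 2D screen positions with floating-point math, ready to be handed to a rasterizer. Every name and constant here (`project`, `prepare_triangle`, the focal length, the 640 by 480 viewport) is hypothetical and chosen for illustration; none of it is taken from the GLide library or from 3Dfx's actual design.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Vec2 = Tuple[float, float]


def project(v: Vec3, focal: float = 320.0,
            width: int = 640, height: int = 480) -> Vec2:
    """Perspective-project a camera-space point onto the screen.

    Hypothetical helper: a simple pinhole model with the camera looking
    down the positive Z axis and screen Y growing downward.
    """
    x, y, z = v
    if z <= 0:
        raise ValueError("vertex must be in front of the camera")
    return (width / 2 + focal * x / z, height / 2 - focal * y / z)


def prepare_triangle(tri: List[Vec3]) -> List[Vec2]:
    """CPU-side 'modeling phase' for one triangle: project each vertex.

    The resulting screen-space triangle is what would be passed on to
    the card, which then fills in the pixels (the rasterization phase).
    """
    return [project(v) for v in tri]


if __name__ == "__main__":
    triangle = [(0.0, 0.0, 10.0), (1.0, 1.0, 2.0), (-1.0, 1.0, 2.0)]
    print(prepare_triangle(triangle))
```

The per-vertex work involves a handful of multiplications and divisions, whereas filling a triangle touches every pixel it covers; that imbalance is why the rasterization half is the harder one to do in software.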

Undoubtedly their bravest decision of all was to throw out any and all 2D-graphics capabilities — i.e., the neat rasters of pixels used to display Windows desktops and word processors and all of those earlier, less exciting games. Makers of Voodoo boards would have to include a cable to connect the existing, everyday graphics cards inside their customers’ machines to their new 3D ones. When you ran non-3D applications, the Voodoo card would simply pass the video signal on to the monitor unchanged. But when you fired up a 3D game, it would take over from the other board. A relay inside made a distinctly audible click when this happened. Far from a bug, gamers would soon come to consider the noise a feature. “Because you knew it was time to have fun,” as Ross Smith puts it.

It was a radical plan, to be sure. These new cards would be useful only for games, would have no other purpose whatsoever; there would be no justifying this hardware purchase to the parents or the spouse with talk of productivity or educational applications. Nevertheless, the cost savings seemed worth it. After all, almost everyone who initially went out to buy the new cards would already have a perfectly good 2D video card in their computer. Why make them pay extra to duplicate those functions?

The final design used just two custom chips. One of them, internally known as the T-Rex (Jurassic Park was still in the air), was dedicated exclusively to the texture mapping that had been so conspicuously missing from the Pellucid board. Another, called the FBI (“Frame Buffer Interface”), did everything else required in the rendering phase. Add to this pair a few less exciting off-the-shelf chips and four megabytes worth of RAM chips, put it on a board with the appropriate connectors, and you had yourself a 3Dfx Voodoo GPU.

Needless to say, getting this far took some time. Tarolli, Sellers, and Smith spent the last half of 1994 camped out in Campbell’s office, deciding what they wanted to do and how they wanted to do it and securing the funding they needed to make it happen. Then they spent all of 1995 in offices of their own, hiring about a dozen people to help them, praying all the time that no other killer product would emerge to make all of their efforts moot. While they worked, the Sega Saturn and Sony PlayStation did indeed arrive on American shores, becoming the first gaming devices equipped with 3D GPUs to reach American homes in quantity. The 3Dfx crew were not overly impressed by either console — and yet they found the public’s warm reception of the PlayStation in particular oddly encouraging. “That showed, at a very rudimentary level, what could be done with 3D graphics with very crude texture mapping,” says Scott Sellers. “And it was pretty abysmal quality. But the consumers were just eating it up.”

They got their first finished chipsets back from their Taiwanese fabricator at the end of January 1996, then spent Super Bowl weekend soldering them into place and testing them. There were a few teething problems, but in the end everything came together as expected. They had their 3D chipset, at the beginning of a year destined to be dominated by the likes of Duke Nukem 3D and Quake. It seemed the perfect product for a time when gamers couldn’t get enough 3D mayhem. “If it had been a couple of years earlier,” says Gary Tarolli, “it would have been too early. If it had been a couple of years later, it would have been too late.” As it was, they were ready to go at the Goldilocks moment. Now they just had to sell their chipset to gamers — which meant they first had to sell it to game developers and board makers.

(Sources: the books The Dream Machine by M. Mitchell Waldrop, Dealers of Lightning: Xerox PARC and the Dawn of the Computer Age by Michael A. Hiltzik, and The New New Thing: A Silicon Valley Story by Michael Lewis; Byte of May 1992 and November 1993; InfoWorld of April 22 1991 and May 31 1993; Next Generation of October 1997; ACM’s Computer Graphics journal of July 1982; Wired of January 1994 and October 1994. Online sources include the Computer History Museum’s “oral histories” with Jim Clark, Forest Baskett, and the founders of 3Dfx; Wayne Carlson’s “Critical History of Computer Graphics and Animation”; “Fall of Voodoo” by Ernie Smith at Tedium; Fabian Sanglard’s reconstruction of the workings of the Voodoo 1 chips; “Famous Graphics Chips: 3Dfx’s Voodoo” by Dr. Jon Peddie at the IEEE Computer Society’s site; an internal technical description of the Voodoo technology archived at bitsavers.org.)

Footnotes

[1] A resolution of 800 X 600 was technically possible using the Voodoo chipset, but using this resolution meant that the programmer could not use a vital affordance known as Z-buffering. For this reason, it was almost never seen in the wild.

Andrew Pam

May 5, 2023 at 4:17 pm

I spotted two name misspellings: One “Gouraaud” and one “McCrackon”.

Jimmy Maher

May 5, 2023 at 7:24 pm

Thanks!

PlayHistory

May 5, 2023 at 4:27 pm

A stray “McCrackon” there.

Very good balancing of the topics. I attempted to do some digging on this back when I was looking at SGI to do my N64 article but there’s a fair bit new to me here. Tracing the story of how the “hardcore” became the “mainstream” in the PC market is such a fun topic, running against the idea that the only way to make things for the masses is to make it all-in-one and cheap as dirt. We do live in a different paradigm of computing than in the 90s, entirely because of what happened in that decade.

The only real thing missing is a little more about the IBM PC existing graphics board standards, without which the entire GPU market idea wouldn’t have been feasible. I feel like a paragraph or so on that would make this a fairly complete overview.

LeeH

May 5, 2023 at 4:30 pm

I would say that buying that first 3dfx voodoo card (a Diamond Monster 3d!) back some time in 1997 was the most significant PC upgrade I’ve ever had. The only thing that even comes close, imo, is the much more recent shift from HDD to SSD.

It’s difficult to describe just how transformative it was, and those pack-in GLide games (especially Mechwarrior 2!) were absolutely brain-rearranging. I’d never seen consistently smooth graphics like that before.

What a wild time.

Bogdanow

May 5, 2023 at 4:42 pm

Excellent, I will be impatiently waiting for the next part. For the Polish folks here (I’m sure there are some), there is an exciting book from Marek Hołyński, about his time in Silicon Valley, “E-mailem z doliny krzemowej” – sharing some details about both Silicon Graphics (he spent eight years there) and 3dfx. Bit old, it is possible to find it for cheap.

Sarah Walker

May 5, 2023 at 4:49 pm

Couple of minor notes on the branding. 3dfx was originally styled as 3Dfx, with one of the highly unfashionable capital letters. Also, while you haven’t stated that the 3dfx logo on this page is the original one (so I don’t know if this was a mistake or not), it is the one from the 1999 (IIRC) rebranding. The original logo from their glory years is a tad more “90s”.

Peter Olausson

May 5, 2023 at 6:28 pm

Is it the first 3Dfx-logo on the chip in the very first picture?

Jimmy Maher

May 5, 2023 at 7:33 pm

No, both were mistakes. Thanks! (And now I have to change “3dfx” to “3Dfx” everywhere in the article. Pity; the former looks a lot nicer in my opinion.)

Ross

May 7, 2023 at 3:24 am

I’m not sure what happened culturally, but it does totally feel like in the ’90s, the occasional unexpected capital letter was considered veRy coOl, whereas at some point it became even cooler to forsake capital letters altogether even when the normal rules of english usage called for them.

Andrew Plotkin

May 5, 2023 at 4:58 pm

> The market segment SGI was targeting is one that no longer really exists.

Hm, would you say? When Apple sells a “Mac Pro” for $6000 (or up as high as you want), I’d say it’s exactly that market segment. The only difference is that it’s the top end of a home computer line that uses the same OS and software down to the laptop level.

Matter of semantics, I guess.

This is all right up against my adult life, of course. I graduated in 1992, and several of my classmates took their newly-minted CS degrees straight to SGI.

Jimmy Maher

May 5, 2023 at 7:39 pm

It’s still a different category, I think. An SGI workstation would cost you $15,000 to $20,000 minimum, at late 1980s/early 1990s prices. Further, there’s a difference in the way the machines were sold. You can walk into an Apple store today as an ordinary Jane or Jim and walk out with a Mac Pro. (I suppose you might have to order it, but still…) SGI didn’t sell at retail, only to institutional customers. For a while at least, you couldn’t even run one of their machines without a high-voltage outlet of a sort not found in most homes. Of course, if you really wanted one as just somebody off the street, they presumably wouldn’t tell you no. But it really was a very different sales and support model.

sam

May 5, 2023 at 5:40 pm

‘Sullivan’ should be ‘Sutherland’, right?

Jimmy Maher

May 5, 2023 at 7:41 pm

Yes. Thanks!

Ken Brubaker

May 5, 2023 at 6:08 pm

You have “the the” in the first paragraph below the horizontal line.

Jimmy Maher

May 5, 2023 at 7:42 pm

Thanks!

Peter Olausson

May 5, 2023 at 6:38 pm

I remember reading about the 3dfx limitations much later, and immediately understood them: As pioneers, they had to sacrifice. With 16 bit colour (which isn’t that big a problem really) and 640×480 resolution only the stuff got cheaper, which was vital to break through in the consumer market (the stuff was pretty expensive as it was). Those limits would only be problematic later on, when the market for 3d graphics cards had been well established.

I’ll never forget my first experience with a 3d graphics card. It was with Unreal, a game I suspect will be covered in future posts. (Which will no doubt be as excellent as this, as they always are.)

Gordon Cameron

May 6, 2023 at 2:48 am

The first accelerated game I played on my PC was Heretic 2. I was a little late to the party, getting a Voodoo 3 around New Year’s, 2000. I remember admiring the beautifully rendered crates (of course it had to be crates) in the opening level… it was a heady time.

Sniffnoy

May 5, 2023 at 7:00 pm

Huh, the paradigm of 3D graphics where “polygons” always means “triangles” has been so dominant for the past few decades that I had no idea that at one point they could actually be arbitrary polygons! I was aware of the weird case of the Sega Saturn, which used quadrilaterals instead of triangles (as a result of the 3D being a late feature that had to be built on top of the already-designed hardware for handling rectangular 2D sprites), but had no idea that systems built out of truly general polygons had ever been a thing…

Sniffnoy

May 5, 2023 at 7:42 pm

I think typically here one would use fixed-point (i.e., storing a single number representing the total number of cents (or mills or whatever)), rather than separately storing dollars and cents. And you wouldn’t want to use floating-point for this anyway (or at least, not binary floating point).

Now I think a lot of your comments about using integers are already alluding to the use of fixed-point, but since you never mention it explicitly, I feel like I should take a moment to explain for people.

Sticking to decimal for now — fixed-point representation means representing fraction with the decimal point remaining fixed in its usual place, and you get a fixed number of decimal places after it. It’s contrasted with floating point, where you also only get a fixed number of decimal places after the decimal point, but the decimal point moves around; it “floats”.

Thus, in fixed-point with 2 decimal places, you might have 5.99, or 600.67, or 1356.88, but never 0.0055, because that’s 4 decimal places. By contrast, if you had floating-point with 2 decimal places (horribly limited I know), you could say, instead of thinking of it as 0.0055, let’s think of it as 0.55 * 10^-2. (This is not the usual representation, I know, but I feel like this illustrates my point more easily.) So it allows one to represent smaller numbers without having to add more decimal places. And large numbers too, because one could similarly represent 55,000,000 as 0.55 * 10^8. Etc.

The thing about fixed-point is that computer hardware and computer languages don’t come with fixed-point capability built-in… because they don’t need to! Fixed-point doesn’t need any special support. Imagine for instance that we wanted to store quantities of American money — dollars and cents. So, we only need 2 decimal places. Well… why not just store the total number of cents? That’s all fixed-point is! If we have a fixed-point number x with k decimal places, then the number x*10^k is always an integer, so just store that in place of x. So storing fixed-point numbers only requires storing integers, and doing fixed-point math just requires some tweaks to integer operations.

(Of course here I’ve been describing decimal fixed-point for ease of understanding, and it is fairly common, but binary fixed-point is also common; and notionally you could use other bases as well. One nice thing about fixed-point is that, because you’re just storing integers, which can be represented perfectly fine in any base, the base you’re using doesn’t have to match the base the computer is using; you can perfectly well do decimal fixed-point even though the computer stores numbers in binary.)

So, a lot of things that you describe as doing “integer math” can be alternately thought of as doing fixed-point math, and likely was thought of that way by its designers; they were not thinking only in integers, they did have fractions! Just not floating-point ones. But because fixed-point math consists of integer operations, whether you think of it as integer math or fixed-point math is largely a matter of interpretation. Still, in many cases thinking of it as fixed-point math, with fractional values, can clarify what’s going on. E.g., if you see quantities of cents being stored, you likely want to think of it as quantities of dollars being stored, just in fixed point.

Now with floating-point, the base really matters; you can do decimal fixed-point with binary integers, but you can’t do decimal floating-point with binary floating-point. So you wouldn’t want to use binary floating-point for money, because binary floating-point can’t represent exactly a fraction like 1/100 (for a single cent). 1/128, sure, but not 1/100. That said, decimal floating-point does also exist, and some programming languages have support for it; but I’m not sure that you’d want to use that for money even so, as floating-point operations necessarily involve approximations in places where fixed-point operations can be exact (although yes there’s always the problem of overflow).

Regardless, you wouldn’t store money as dollars and cents separately; you’d probably use fixed-point. And fixed-point is also what a lot of that integer math probably actually was — fractions weren’t off-limits! Their fractional nature was just implicit.
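The decimal fixed-point scheme described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not anyone's production code: the helper names (`to_fixed`, `to_text`, `fixed_mul`) are hypothetical, only non-negative amounts with at least one digit before the decimal point are handled, and extra decimal places are simply truncated.

```python
# Decimal fixed-point with two places: a dollar amount is stored as an
# ordinary integer number of cents (the value multiplied by 10**2).
SCALE = 100


def to_fixed(text: str) -> int:
    """Parse a non-negative decimal string such as '5.99' into cents."""
    whole, _, frac = text.partition(".")
    frac = (frac + "00")[:2]  # pad or truncate to exactly two places
    return int(whole) * SCALE + int(frac)


def to_text(value: int) -> str:
    """Format a non-negative fixed-point value back into dollars.cents."""
    return f"{value // SCALE}.{value % SCALE:02d}"


def fixed_mul(a: int, b: int) -> int:
    """Multiply two fixed-point values.

    The raw integer product carries the scale factor twice, so it is
    divided by SCALE once (truncating any leftover fraction).
    """
    return (a * b) // SCALE


if __name__ == "__main__":
    dime = to_fixed("0.10")
    total = sum([dime] * 10)      # addition is plain integer addition
    print(to_text(total))         # 1.00
    print(sum([0.1] * 10) == 1.0) # False: binary floats cannot hold 1/10 exactly
    print(to_text(fixed_mul(to_fixed("5.99"), to_fixed("2.00"))))  # 11.98
```

Addition and subtraction need no adjustment at all, which is the sense in which fixed-point comes "for free" with integer hardware; only multiplication and division need the extra rescaling step.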

Jimmy Maher

May 5, 2023 at 7:50 pm

Yes, that is a far more logical way to do it. Thanks! (The only exception would be in the case of a computer that doesn’t easily handle integers of at least 32 bits.)

And thanks for the explanation of fixed-point as well. A little more complex than I wanted to get in the article proper, but definitely a worthy addendum.

Joe Vincent

May 5, 2023 at 9:16 pm

Jimmy, thanks for taking us through the fascinating evolution of 3D graphics and CGI. As someone who shared funds with 5 other college room-mates to buy a PC with a Creative Media Sound blaster and a Pentium chip in late-90s pre-internet India, this is also an incredible hit of nostalgia. Spent a phenomenal amount of time exploring free 3D game demos which came with PC mags of that era – including Shadow Warrior, Descent Freespace, & Comanche to name a few.

Minor edit – ‘Makers of Voodoo boards would have to include a cable to connect the existing, everyday graphics cards insider their customers’ machines to their new 3D one.’ Insider needs to be edited to inside.

Jimmy Maher

May 6, 2023 at 6:05 am

Thanks!

cobbpg

May 6, 2023 at 5:49 am

Around the same time Commodore were working on the Amiga Hombre chipset amidst their death throes. There are so many interesting ways history could have gone differently.

Alex

May 6, 2023 at 5:59 am

Oh boy, the brand of 3dfx triggers so many memories. In fact in such a way that this name and the second era of the 1990s are inextricably connected in my memory as a PC-“Gamer”. Regarding the Playstation: It was quite clear that its 3D-Technology had some problems even back then. Textures would vanish and re-appear quite regularly, many objects were blurry, the quality of sharpness would never be constant. Yes, it was quite strange that the console was marketed as such a powerhouse with these shortcomings, but I think there was some kind of mainstream “Hipness” to it that the Computer did not reach at this point. The hardware industry was just learning how to market their new gadgets to a wider spectrum of consumers.

fform

May 6, 2023 at 9:09 am

The amusing thing about the Playstation (and other consoles of that generation) that was discovered years later was how much their graphics quality relied on the inherent fuzziness of a CRT TV display. Plugging a PSX, N64 or Saturn into an LCD TV in the early 2000s was frankly shocking, because the clarity of the digital display revealed just how janky the graphical work had been.

I was lucky enough to grow up in this period, my first 3d card being a Voodoo Banshee (a Voodoo 1 with integrated 2d graphics). I always looked down on the graphics of console games in comparison with my crisp computer CRT with the high resolution, and felt a little vindicated when everyone realized just how smudged they really were – literally.

John

May 6, 2023 at 6:49 pm

Older console games had art optimized for the displays on which they were played. That is the exact opposite of “janky”.

Gnoman

May 6, 2023 at 7:05 pm

That’s as much a problem with connection to LCD than fundamental problems with the graphics design – taking the raw input and having the monitor upscale it has some ugly side effects.

Using dedicated upscaler hardware instead of just plugging the thing in shows that the systems did rely a lot on CRT bleed and even poor cable quality – connecting something like Silent Hill even to a CRT with a high-quality cable breaks the dithering – but it isn’t nearly as bad as you’d get from just plugging it in to the monitor directly.

Jimmy Maher

May 7, 2023 at 7:32 am

This is nothing unique to the PlayStation. Going back to the Commodore 64, programmers were able to create the illusion of more than 16 colors by arranging their pixels so that their colors bled into one another — an effect totally lost on our crisp digital displays where every pixel is absolutely discrete. It’s not entirely down to nostalgia, in other words, that so many games look better in our memories than they do in our emulators. (I suppose our screens are dense enough by now that it would be possible to simulate the effect of this analog bleed, what with the sheer number of discrete pixels at our disposal, but I don’t know if any emulators do so.)

John

May 7, 2023 at 4:34 pm

DOSBox offers filters called “scan”, “tv”, and “rgb”, which are intended to replicate the visual characteristics of old CRT monitors and televisions. (See https://www.dosbox.com/wiki/Scaler for sample images.) My understanding is that many other emulators offer similar filters. How well these filters work I couldn’t say. I’ve never used them. Most of the games I’ve emulated have been in 640×480 VGA and I don’t think they’d benefit from fake scan lines.

Gnoman

May 8, 2023 at 12:18 am

Not only do a lot of emulators provide that feature, at least some sourceports do as well. OpenXcom has an absolutely fantastic set, for example. There’s at least one modern “retraux” game (Super Amazing Wagon Adventure Turbo, which is designed with 4-bit aesthetics) that has a surprisingly good (optional) CRT filter.

It is also built into the higher-quality versions of the dedicated upscaler hardware I mentioned – devices you plug your low-res analog hardware into and get a crisp lag-free output in a modern digital format.

Nate

May 7, 2023 at 6:12 am

Good article. SGI also had an amazingly customized operating system in IRIX, as well as the various tools they sold for 3D modeling.

Ed: I noticed you used the term “neural networking”, but that doesn’t seem quite right. “Neural network” (or “neural net”) is the commonly used noun form.

Jimmy Maher

May 7, 2023 at 7:25 am

I looked at that too, but “neural network” doesn’t fit there, referring as it does to a single example of the form rather than the endeavor as a whole. “Neural networking” is the logical noun.

Alexey Romanov

October 8, 2023 at 6:38 pm

Then “machine learning”, maybe?

Jimmy Maher

October 10, 2023 at 11:04 am

Yes, that’s a good alternative. Thanks!

Alex Smith

May 7, 2023 at 3:46 pm

While I understand it is tangential to this excellent article, I am a little surprised the N64 does not rate a mention alongside the PlayStations and Saturns of the world seeing it was an SGI creation and Clark’s one successful attempt to push the company into the consumer market. This push ultimately failed after he left, when I presume McCracken’s lack of interest helped push the most important minds behind the system to leave and form ArtX to do the Gamecube with Nintendo.

Sarah Walker

May 7, 2023 at 7:19 pm

The N64 was strictly speaking a MIPS project that SGI inherited via their acquisition of the former.

Alex Smith

May 7, 2023 at 7:55 pm

Not quite. While the tech was MIPS, SGI drove the entry into video games. Tim Van Hook, who developed the Multimedia Engine at the core of the project, was a MIPS guy and the project was based on MIPS technology, but Clark personally approached first Sega and then Nintendo about a video game collaboration, and the deal with Nintendo was negotiated and consummated by SGI. This was all several years after SGI bought MIPS, and turning the tech into the N64 was done by the MIPS folks wholly while under SGI ownership.

Jimmy Maher

May 8, 2023 at 4:14 pm

Good catch. Not being much of a console guy, I’m afraid I overlooked that history. I added a paragraph to the article. Thanks!

Whomever

May 7, 2023 at 4:03 pm

Fun article. For those who remember the original Jurassic Park, the “It’s a Unix System!” was in fact an actual demo that SGI shipped with their OS.

Ralph Unger

May 8, 2023 at 2:24 am

Michael Crichton also had that in one of his novels. Using virtual reality you could walk down a row of file cabinets and open them to find what you are looking for. That is nuts. CD (Change Directory) works a lot faster, and it works in a terminal. No VR or the VR walking pad that he described would be needed. I wanna see a video of the SGI demo of such a silly thing!

rocco

May 7, 2023 at 5:19 pm

Curious that you take “old white guy” as synonymous with millionaire or maybe nobility. If only!

Ralph Unger

May 8, 2023 at 1:50 am

Back in about 1998 I got two Voodoo2 Cards with sli and played mostly Descent for months. My monitors were 1024 X 768 but I never noticed that my card only ran games at 640 X 480. The motion was so fluid! $300 per card in 1998. A total of about $1,111.00 in today’s money. It was the last time I ran at the bleeding edge of PCs. My wife bought me a 386 running at 40 MHz when even my work computers were running at 25 MHz. My work computers cost about $10,000, but that was because I had to store audio files in RAM so they would play on cue. RAM was very expensive back then. I wonder what the cost for the 386 40 MHz was at the time? A lot I would guess. She came home from work and found the new computer in small bits on the dining room table and I think she almost passed out. I put it all back together and it worked just fine. I think for a minute there, I was seriously flirting with divorce. My newest computer that I built 6 months ago only cost $350, but that was just CPU (Ryzen 5600) and Mem (32 G) and SSD (2 T) costs. I had a lot of old parts to start with, my case is from 2007. One of the early ATX cases. My keyboard is the same IBM PS2 keyboard that my wife bought me back in 1998.

Ralph Unger

May 8, 2023 at 1:59 am

How old am I? the first program I wrote, a simple Tank game, was stored on a paper tape roll that ran on a terminal that was connected to the local power companies mainframe. :-)

Jeff Thomas

May 8, 2023 at 10:16 pm

Heh, you’re in good company here, there are a lot of us industry dinosaurs. The first program I wrote was in HP-BASIC on a terminal at my dads desk at HP. I don’t know what kind of system it was attached to but he ‘saved’ my program to punchcards :-).

Peter Johnson

May 11, 2023 at 5:18 pm

My dad worked on electric forklifts and one of the customers was Evans and Sutherland. Around 1990 he brought home a big box with a strange display and a bunch of circuit boards they’d let him take. He wanted to see if the two of us could make something appear on the display, but it was really unusual and beyond our ability to reverse engineer the interface. We still had fun trying.

SierraVista

May 13, 2023 at 12:24 pm

Et tu, mind virus?

I love your blog, but this sort of disparagement (and it is disparagement) benefits neither the substance nor the flavor (as it ultimately reads as acrid on the palate) of your otherwise vibrant storytelling:

“… (sad as it is to say) their race and gender, definitely in the opportunities that were offered to them. This isn’t to disparage their accomplishments; they did, after all, still need to have the vision to grasp the brass ring of opportunity and the talent to make the most of it. Suffice to say, then, that luck is a prerequisite but the farthest thing from a guarantee.

Every once in a while, however, I come across someone who really did almost literally make something out of nothing. One of these folks is Jim Clark. If today as a soon-to-be octogenarian he indulges as enthusiastically as any of his Old White Guy… ”

Respectfully, please consider that people of all backgrounds, philosophical inclinations, appearances, and even those from the dusty desert southwest have contributed to the tapestry of stories you usually so eloquently weave here.

Lt. Nitpicker

May 18, 2023 at 2:41 am

Two nitpicks:

I wouldn’t consider the processors used in the 1977 Trinity VLSI. VLSI is a vague term that can be defined in several different ways, but it’s almost always defined as a chip containing (what was then) a large number of transistors, namely at least 100,000. None of these early 8-bit CPU designs came close to that number.

The Voodoo 1 supports 800×600, but it effectively wasn’t used by software because of memory limitations on non-professional versions. (The mode was technically usable on normal cards, but only without the key 3D feature of Z-buffering, which made it unusable for almost all software. Some professional versions of the Voodoo 1 had additional frame buffer memory and could use this mode with Z-buffering.) Even then, I feel that 800×600 was supported more “because they could” than as a primary design goal from the beginning, so I would probably mention this support as a footnote to the “640×480” comment.

Lt. Nitpicker

May 18, 2023 at 3:07 am

If you want a reference for the 800×600 support, here’s a 3DFX document that mentions it.

http://www.o3one.org/hwdocs/video/voodoo_graphics.pdf

Jimmy Maher

May 18, 2023 at 11:07 am

Thanks! Footnote added.

Stu

June 6, 2023 at 10:39 am

It’s actually possible to upgrade the VRAM on some Voodoo 1 cards to 6MB, which allows the use of 800×600 without loss of features.

The “Bits und Bolts” YouTube channel demonstrates the process and offers a PCB: https://www.youtube.com/watch?v=pwGdw0eZVCQ

Walter Mitte

December 16, 2023 at 6:24 pm

Extravagant luxury spending is a “white guy” thing? Someone should probably tell that to, uh, basically every single professional athlete, because I think a lot of them aren’t actually white. While we’re at it, might want to drop a memo to the Saudi royal family, Kim Jong Un, and those Japanese guys who eat nyotaimori and blow hundreds of thousands on baccarat. Heck, if we’re going to get really enterprising here, let’s build a time machine and let Moctezuma II know that his royal palace either isn’t extravagant or isn’t his.

That line just came out of nowhere and seems really inappropriate in the middle of what would otherwise be a compelling and interesting piece of research. Gratuitous racializing (or, for that matter, gendering – Madonna, Beyonce, and Mariah Carey, just to name the first three names that popped into mind when thinking “rich lady who was in front of a microphone at some point in her career”) gets so, so, so very tiresome, and just adds nothing but unnecessary stratification.